Sebastian Lehner

127 posts

@sebaLeh

Machine learner at @jkulinz

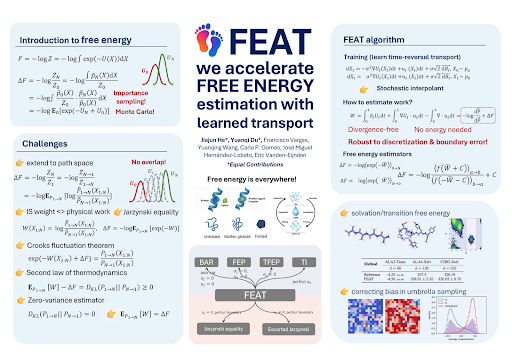

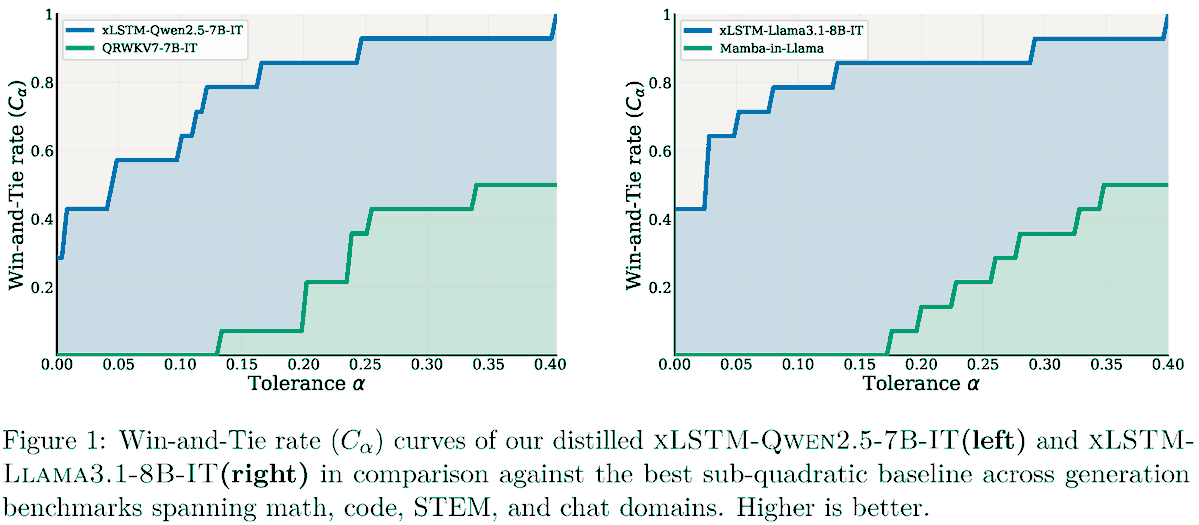

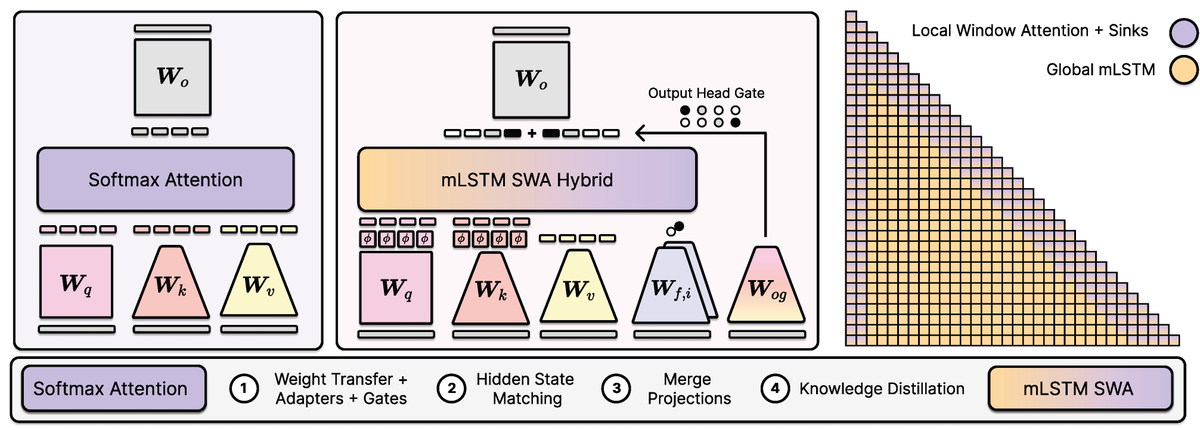

xLSTM Distillation: arxiv.org/abs/2603.15590 Near-lossless distillation of quadratic Transformer LLMs into linear xLSTM architectures enables cost- and energy-efficient alternatives without sacrificing performance. xLSTM variants of instruction-tuned Llama, Qwen, & Olmo models.

Bye-bye diffusion, say hello to Drifting models. Drifting models will take over from diffusion models within the next year. I was told many times that we had figured it all out, that there was nothing else to invent in generative AI and it was just about scaling. Wrong again and again.

🚀 Excited to share our new paper on scaling laws for xLSTMs vs. Transformers. Key result: xLSTM models Pareto-dominate Transformers in cross-entropy loss.

- At fixed FLOP budgets → xLSTMs perform better
- At fixed validation loss → xLSTMs need fewer FLOPs

🧵 Details in thread

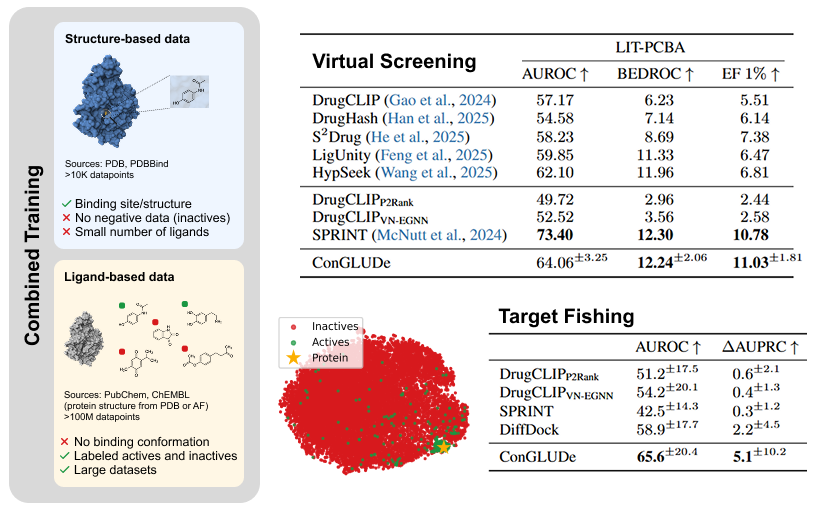

BIG BREAKTHROUGH: A new AI tool could dramatically speed up the discovery of life-saving medicines. Researchers at Tsinghua University created a new system called DrugCLIP that can screen drug molecules against human proteins at a speed that makes traditional methods look ancient.

- DrugCLIP uses deep contrastive learning to turn both molecules and protein binding pockets into vectors and match them almost instantly.
- It screened 500 million molecules across 10,000 human proteins, covering half of the entire human druggable proteome.
- The system completed 10 trillion molecule-protein evaluations in a single day, roughly 10 million times faster than classic docking simulations.
- They used AlphaFold2 to generate protein structures and then refined binding pockets with a custom tool called GenPack.
- The model even identified compounds for TRIP12, a protein linked to cancer and autism that has resisted traditional drug-targeting approaches.

All data and models are open access, so labs worldwide can now speed up early-stage drug discovery.
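To make the "turn both into vectors and match them" step concrete, here is a minimal sketch of embedding-based screening. It is not DrugCLIP's code: the function names, the embedding size, and the random arrays standing in for encoder outputs are all assumptions for illustration. It only shows why matching is fast once embeddings exist: scoring every pocket against every molecule reduces to one matrix product.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so a dot product equals cosine similarity."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def top_k_molecules(pocket_emb: np.ndarray, molecule_emb: np.ndarray, k: int) -> np.ndarray:
    """Return, for each pocket, the indices of its k most similar molecules (best first)."""
    # One matrix product scores every (pocket, molecule) pair at once.
    scores = l2_normalize(pocket_emb) @ l2_normalize(molecule_emb).T
    # argsort is ascending, so take the last k columns and reverse them.
    return np.argsort(scores, axis=1)[:, -k:][:, ::-1]


# Hypothetical stand-ins for encoder outputs; a real system would use
# embeddings produced by trained molecule and pocket encoders.
rng = np.random.default_rng(0)
molecules = rng.normal(size=(1000, 64))  # 1000 molecules, 64-dim embeddings (assumed size)
pockets = rng.normal(size=(5, 64))       # 5 binding pockets

hits = top_k_molecules(pockets, molecules, k=10)
print(hits.shape)  # (5, 10)
```

At the scale quoted in the post, the molecule embeddings would be computed once and reused for every protein, which is where most of the speedup over per-pair docking would come from.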

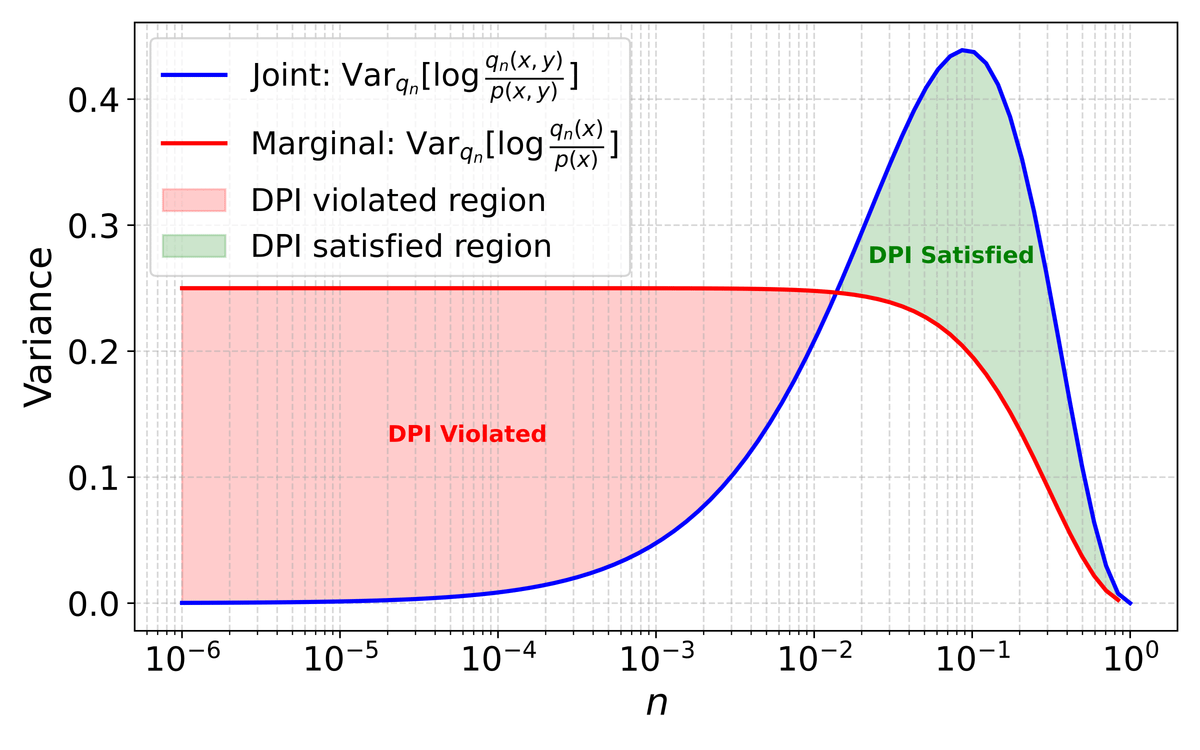

Ever experienced instabilities when using the popular LV (Log Variance) loss for training Diffusion Bridge Samplers?