∫ebitas

6.4K posts

@sebas_dlmb

mathematician, but essentially a Lana stan

Joined February 2017

993 Following, 7.3K Followers

Pinned Tweet

a year since this and my life couldn't be more different,,, things do get better!!!

∫ebitas@sebas_dlmb

I lost my job AND got dumped in the same week :((

∫ebitas retweeted

Her religious manipulation would’ve worked on me I fear

urvans@urvans_

anya taylor-joy as alia atreides in dune part 3

∫ebitas retweeted

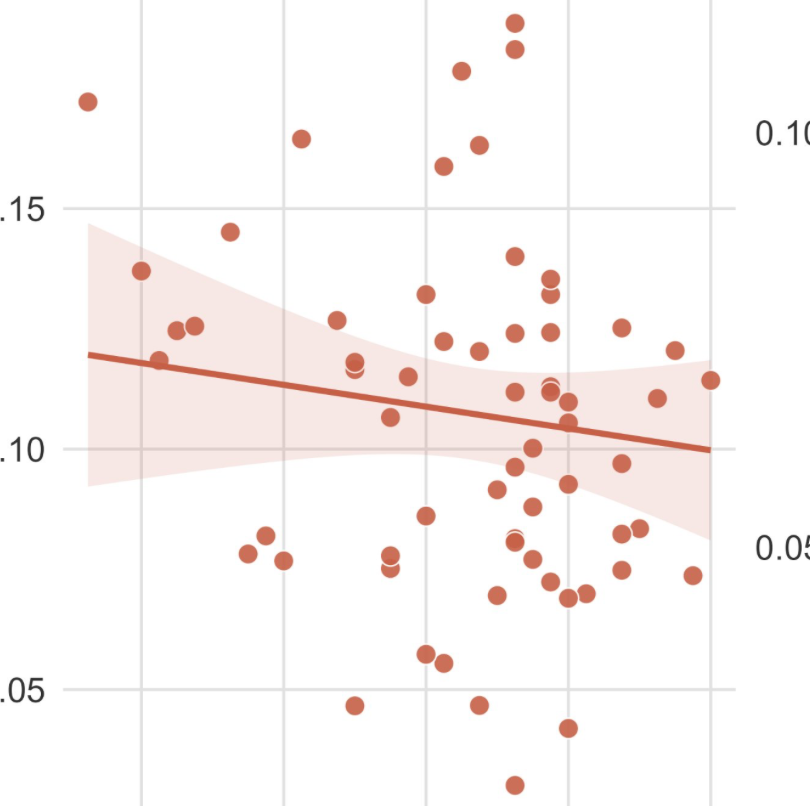

Look at the scatterplot dude, the study didn't find shit

Alex@notcomplex_

A study found a negative relationship between belief in a growth mindset and cognitive training gains. Ironically, people who believed intelligence is more fixed grew the fastest.

∫ebitas retweeted

Twigs bringing out the heavy hitters from the ballroom scene for her NYC show really does highlight that she has a pulse on culture and not only platforms it but lives with it too. No shade, but she’s a genius

ICYESTTWAT@FUCCl

TWIGS BROUGHT THE BALLROOM GIRLS OUTSIDE

∫ebitas retweeted

“We stole all your knowledge and art, and now we’re gonna put a meter on it and sell it back to you. You’re welcome.”

Chief Nerd@TheChiefNerd

🚨 SAM ALTMAN: “We see a future where intelligence is a utility, like electricity or water, and people buy it from us on a meter.”

∫ebitas retweeted

Faggots make some noise!!!

𝐊𝐢𝐧𝐠 𝐒𝐮𝐤𝐮𝐧𝐚 ☢@King_Sukunaaa

"A crooked tree lives its own life, but a straight tree is turned into wood." —Chinese Proverb
