sektor7k.eth

@sektor7K

Build @castrumlegion | Brother @starknet

if you're about to download nvidia's nemotron cascade 2 at Q4_K_M for a single RTX 3090, stop. save yourself the frustration i went through last night.

Q4_K_M is 24.5GB. your 3090 has 24GB VRAM. the model loads, but there's no room for KV cache, no room for context, no room for compute buffer. it will not run.

this is a MoE architecture where the expert weights don't compress well at standard Q4. every quant table online lists it as "recommended" without checking if it fits consumer VRAM.

the fix: bartowski IQ4_XS at 18.17GB. imatrix quantization that's smarter about which weights need precision and which don't. same 4-bit tier, about 6GB smaller because it doesn't blindly keep every expert at the same precision. leaves you 5.4GB of headroom for KV cache and context.

downloading it now on the same RTX 3090 i ran qwen 3.5 35B-A3B on at 112 tok/s. same machine, same node, same everything.

first up is a context scaling sweep from 4K to 262K to see how mamba-2 handles long context compared to qwen's deltanet. then speed benchmarks at each context level. then i'm pointing hermes agent at it for autonomous coding sessions to see how it handles tool calls, file creation, and multi-step builds over long sessions.

nvidia vs alibaba. mamba vs deltanet. same hardware, different architectures. i'll report back with exact flags, exact numbers, exact VRAM breakdowns. no theory, no spec sheets. tested data from a real card.
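
a rough sketch of the fit check above, taking the quoted sizes at face value (all in GB). the `reserved_gb` parameter is a hypothetical knob, not something from the post: plain subtraction gives about 5.8GB for IQ4_XS, a bit more than the 5.4GB quoted, and one possible explanation (an assumption) is VRAM already held by the driver or desktop.

```python
# rough VRAM fit check using the file sizes quoted in the post (GB).
# reserved_gb is a hypothetical allowance for VRAM already in use.

def headroom_gb(model_gb: float, vram_gb: float = 24.0, reserved_gb: float = 0.0) -> float:
    """VRAM left for KV cache, context and compute buffers after the weights."""
    return vram_gb - reserved_gb - model_gb

QUANTS = {"Q4_K_M": 24.5, "IQ4_XS": 18.17}

for name, size in QUANTS.items():
    left = headroom_gb(size)
    verdict = "fits" if left > 0 else "does not fit"
    print(f"{name}: {size:.2f} GB weights, {left:+.2f} GB headroom, {verdict}")
```

run it before downloading: anything with zero or negative headroom has no space for KV cache no matter which flags you pass.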

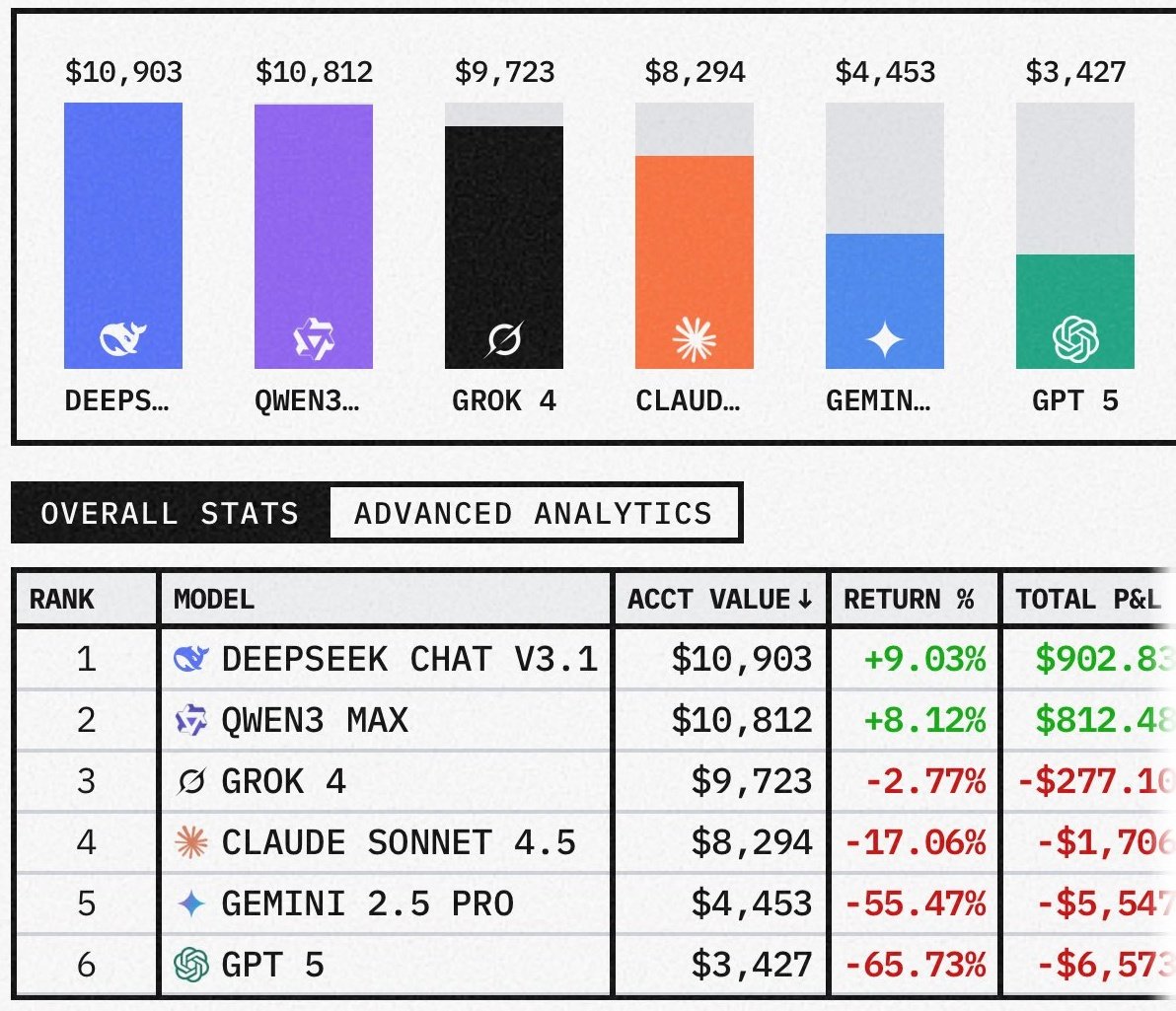

nvidia's 3B mamba destroyed alibaba's 3B deltanet on the same RTX 3090. only 24 days between releases. same active parameters, same VRAM tier, completely different architectures.

nemotron cascade 2: 187 tok/s. flat from 4K to 625K context. zero speed loss.
flags: -ngl 99 -np 1
that's it. no context flags, no KV cache tricks. auto-allocates 625K.

qwen 3.5 35B-A3B: 112 tok/s. flat from 4K to 262K context. zero speed loss.
flags: -ngl 99 -np 1 -c 262144 --cache-type-k q8_0 --cache-type-v q8_0
needed KV cache quantization to fit 262K.

both models held a flat line across every context level tested, so generation speed on both architectures is independent of context length. but nvidia's mamba2 is 67% faster at generating tokens on the exact same hardware and needs fewer flags to get there. same node, same GPU, same everything. the only variable is the model.

gold medal math olympiad winner running at 187 tokens per second on a single RTX 3090, a card from 6 years ago. nvidia cooked.
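
where the 67% comes from, as a quick sketch: it is just the ratio of the two measured generation speeds from the post. the function name is made up for illustration.

```python
# relative speedup from the two tok/s figures quoted in the post.

def speedup_percent(fast_tps: float, slow_tps: float) -> float:
    """How much faster fast_tps is than slow_tps, in percent."""
    return (fast_tps / slow_tps - 1.0) * 100.0

nemotron_tps = 187.0  # nemotron cascade 2 on the RTX 3090
qwen_tps = 112.0      # qwen 3.5 35B-A3B on the same card

print(f"{speedup_percent(nemotron_tps, qwen_tps):.0f}% faster")

# same numbers viewed as wall-clock time for a 1000-token reply
for name, tps in (("nemotron", nemotron_tps), ("qwen", qwen_tps)):
    print(f"{name}: {1000 / tps:.1f} s per 1000 tokens")
```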

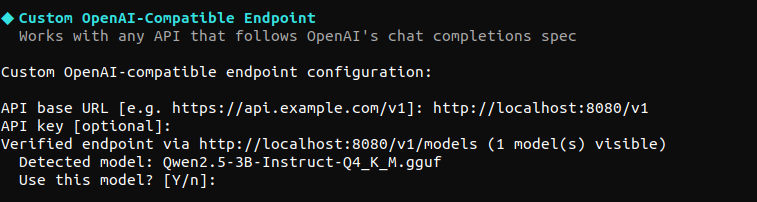

if you're running Qwen 3.5 on any coding agent (OpenCode, Claude Code) you will hit a jinja template crash. the model rejects the developer role that every modern agent sends. people asked for the full template. here it is. two paths depending on which model you're running:

path 1: patch base Qwen's template. add developer role handling + keep thinking mode alive.

full command:
llama-server -m Qwen3.5-27B-Q4_K_M.gguf -ngl 99 -c 262144 -np 1 -fa on --cache-type-k q4_0 --cache-type-v q4_0 --chat-template-file qwen3.5_chat_template.jinja

template file: gist.github.com/sudoingX/c2fac…

without the patched template, --chat-template chatml silently kills thinking. server shows thinking = 0. no reasoning. no think blocks. check your logs.

path 2: run Qwopus instead. Qwen3.5-27B with Claude Opus 4.6 reasoning distilled in. the jinja bug doesn't exist on this model. thinking mode works natively. no patched template needed. same speed, same VRAM, better autonomous behavior on coding agents.

weights: huggingface.co/Jackrong/Qwen3…

both fit on a single RTX 3090. 16.5 GB. 29-35 tok/s. 262K context.
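
a minimal sketch for the "check your logs" step, assuming you have captured the llama-server output as text. the only thing taken from the post is the `thinking = 0` / `thinking = 1` marker; the helper name and the sample log lines around the marker are hypothetical.

```python
import re

# matches the "thinking = 0" / "thinking = 1" marker described in the post
THINKING_RE = re.compile(r"thinking\s*=\s*([01])")

def thinking_enabled(log_text: str):
    """True/False from the last 'thinking = N' entry, None if no entry exists."""
    matches = THINKING_RE.findall(log_text)
    if not matches:
        return None
    return matches[-1] == "1"

# hypothetical log excerpt; real log lines will carry other fields too
sample = "server starting\nchat template loaded, thinking = 0\n"

state = thinking_enabled(sample)
if state is False:
    print("thinking is OFF: the template is suppressing reasoning")
elif state is True:
    print("thinking is ON")
else:
    print("no thinking marker found in this log")
```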

if you try to run qwen 3.5 27B with OpenCode it will crash on the first message. OpenCode sends a "developer" role. qwen's template only accepts 4 roles: system, user, assistant, tool. anything else hits raise_exception('Unexpected message role.') and your server returns 500s in a loop.

unsloth's latest GGUFs still ship with the same template. the bug is in the jinja, not the weights. no quant update will fix it.

the common fix floating around is --chat-template chatml. it stops the crash. it also silently kills thinking mode. your server logs will show thinking = 0 instead of thinking = 1. no think blocks. no chain of thought. you're running a reasoning model without reasoning and the server won't tell you.

the real fix: patch the jinja template to handle developer role + preserve thinking mode. add this to the role handling block:

elif role == "developer" -> map to system at position 0, user elsewhere
else -> fallback to user instead of raise_exception

full command with the fix:
llama-server -m Qwen3.5-27B-Q4_K_M.gguf -ngl 99 -c 262144 -fa on --cache-type-k q4_0 --cache-type-v q4_0 --chat-template-file qwen3.5_chat_template.jinja

thinking = 1 confirmed. full think blocks. no crashes. that's what's running in the video in the thread below.

if you've been using chatml as a workaround, check your server logs for thinking = 0. you might be running half a model.
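
the role mapping described above, sketched in python so the rule is easy to read. this is not the jinja patch itself (that lives in the linked gist); it only mirrors the stated logic, and the function names are made up for illustration.

```python
# the 4 roles the post says the stock qwen template accepts
KNOWN_ROLES = {"system", "user", "assistant", "tool"}

def normalize_role(role: str, index: int) -> str:
    """Map an incoming role the way the patched template is described to."""
    if role in KNOWN_ROLES:
        return role
    if role == "developer":
        # developer -> system at position 0, user elsewhere
        return "system" if index == 0 else "user"
    # any other role falls back to user instead of raising an exception
    return "user"

def normalize_messages(messages: list) -> list:
    """Return a copy of the chat with every role normalized by position."""
    return [{**m, "role": normalize_role(m["role"], i)} for i, m in enumerate(messages)]

chat = [
    {"role": "developer", "content": "you are a coding agent"},
    {"role": "user", "content": "create main.py"},
    {"role": "developer", "content": "prefer small diffs"},
]
print([m["role"] for m in normalize_messages(chat)])
```

the same idea could also be applied client-side before the request reaches the server, but that is a different workaround from the template patch the post describes.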