Aleks

@seqradev

Formal methods advocate. Seqra. Engineering OpenTaint — the open source taint analysis engine for the AI era.

$20,000 to scan one codebase. That's what Anthropic says it cost Mythos to find those zero-days. Per repo. Except API tokens are currently sold at a LOSS. That "$20,000 scan" probably cost closer to $100,000+ in real GPU time. ffmpeg couldn't afford the subsidized price, let alone the real one... If the cost doesn't come down by a huge factor, this just doesn't make sense. It's Anthropic's marketing week 💀

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

Vibe coding is officially dead. I had to say it. We thought AI would let us relax and code "on chill", but instead it turned us into architectural bureaucrats. We write strict laws, define rules, limits, and principles. If you don't obsessively review the code the agent writes, your project will mutate into a massive landfill of tech debt within a month.

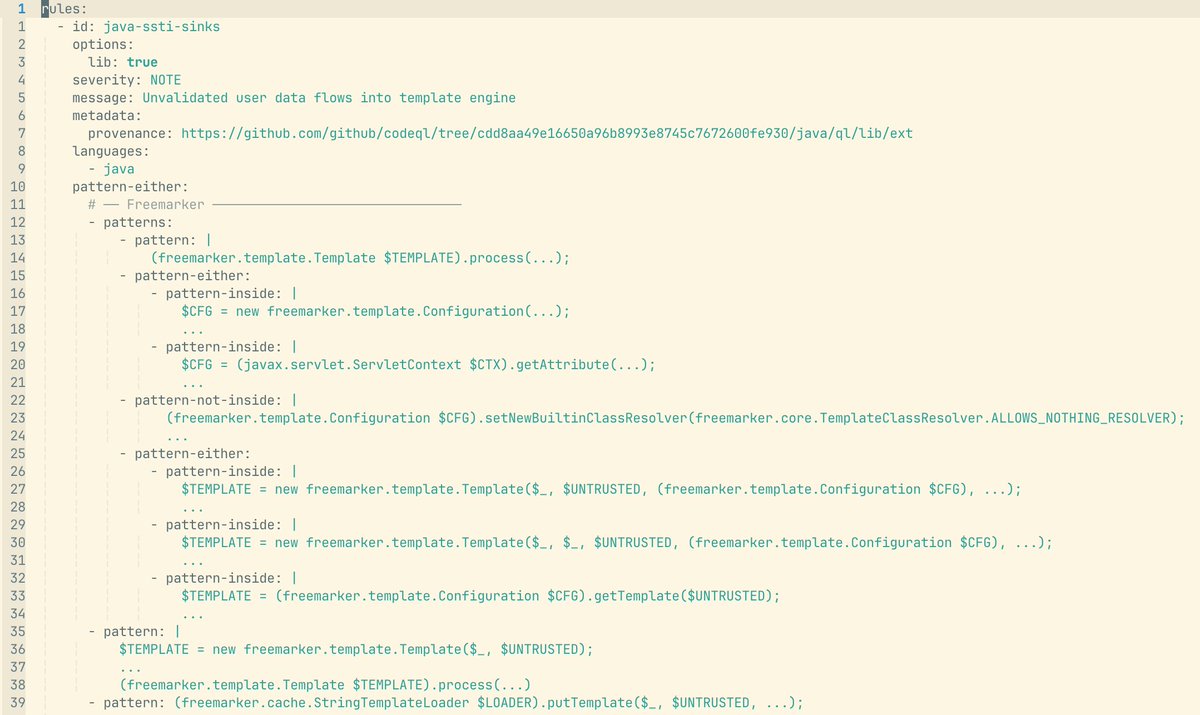

llm-sast-scanner - github.com/SunWeb3Sec/llm… A general-purpose Static Application Security Testing (SAST) skill for LLM-based code vulnerability analysis. Designed to be loaded by AI coding agents (Claude Code, OpenAI Codex, etc.) to perform structured source-to-sink taint analysis across 34 vulnerability classes.
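For readers new to the term, here is a minimal sketch of what "source-to-sink taint analysis" means in general. It is not how llm-sast-scanner or OpenTaint works. The toy program format and every name in it (`SOURCES`, `SINKS`, `SANITIZERS`, `find_taint_flows`, `read_request_param`, `run_sql`, `escape_sql`) are hypothetical, and it only handles straight-line code with no branches or functions.

```python
# Toy source-to-sink taint tracking over a straight-line program.
# Every name below is hypothetical and chosen for illustration only.

SOURCES = {"read_request_param"}   # calls that return attacker-controlled data
SINKS = {"run_sql"}                # calls that must not receive tainted data
SANITIZERS = {"escape_sql"}        # calls whose result is treated as clean


def find_taint_flows(program):
    """Return (index, sink, variable) for each tainted argument reaching a sink.

    `program` is a list of (target, func, args) tuples: `target` is the
    assigned variable name (or None), `args` is a list of variable names.
    """
    tainted = set()
    findings = []
    for i, (target, func, args) in enumerate(program):
        # Report any tainted variable passed directly to a sink.
        if func in SINKS:
            for arg in args:
                if arg in tainted:
                    findings.append((i, func, arg))
        if target is None:
            continue
        if func in SOURCES:
            tainted.add(target)            # fresh attacker-controlled value
        elif func in SANITIZERS:
            tainted.discard(target)        # sanitizer output is clean
        elif any(arg in tainted for arg in args):
            tainted.add(target)            # taint propagates through other calls
        else:
            tainted.discard(target)        # overwritten with clean data
    return findings


program = [
    ("name", "read_request_param", []),
    ("query", "concat", ["prefix", "name"]),
    (None, "run_sql", ["query"]),          # tainted: name -> query -> sink
    ("safe", "escape_sql", ["name"]),
    ("query2", "concat", ["prefix", "safe"]),
    (None, "run_sql", ["query2"]),         # clean: went through the sanitizer
]
flows = find_taint_flows(program)
print(flows)
```

Only the first `run_sql` call is reported, because the second one receives data that passed through the sanitizer. Real engines do the same bookkeeping across branches, calls, and heap objects, which is where most of the difficulty lies.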

New ZAP Blog Post: zaproxy.org/blog/2026-03-2… This post describes an approach that uses static analysis findings to guide ZAP’s active scans toward the most relevant endpoints. The result is a faster scanning mode suited for CI/CD pipelines. Thanks to @seqradev ! #zaproxy #appsec