Pinned Tweet

Seth Fowler

1.3K posts

@sethlfowler

fatherhood. therapy. theology. sound money. curious about everything.

San Francisco, CA · Joined August 2022

573 Following · 471 Followers

"All of us owe a debt. That we owe a debt to the people that went overseas and gave their lives, that the United States might be prosperous, and peaceful, and free." - @VP JD Vance

@j_monohan What’s the creative edge you think becomes available when you handwrite?

Am I a Social Media Bully? *

5 questions to ponder

Online harassment is often rationalized as "just arguing" or "holding people accountable." However, cyberbullying is defined by repetition, an intent to cause distress, and an imbalance of power (such as a larger following, a mob mentality, or targeting someone who cannot easily defend themselves).

Ask yourself how much the following behaviors apply to you when you are behind a screen:

1️⃣ The Power Check: Do I target users with smaller platforms because it's an easy way to pile on without consequences? Or do I target larger accounts to self-aggrandize—specifically when I sense a vulnerability in them, or use a perceived "injustice" to rationalize my attack?

2️⃣ The Harassment Test: Do I repeatedly leave insulting comments, use mocking reposts/stitches, or send aggressive DMs to the same individual?

3️⃣ The Mob Test: Do I use my platform or group chats to coordinate dogpiles, mass-report, or direct waves of hate at a specific user—often rationalized by a sense that the target "deserves it"?

4️⃣ The Mask Test: Do I use burners, alts, or anon profiles specifically to say things I would never attach to my real name or say to someone’s face?

5️⃣ The Payoff Test: When my comments visibly upset, silence, or drive someone off a platform, do I feel a sense of satisfaction, entertainment, or a belief that "they had it coming"?

* Note: This 5-question framework is an unvalidated derivative designed for conceptual self-reflection. It is synthesized from established behavioral criteria found in peer-reviewed cyberbullying and peer-perpetration research. It is not a validated psychometric tool, a clinical diagnosis, or medical advice.

Libraries no longer promote neutrality as a lodestar. Instead the ALA believes librarians should serve as social justice activists.

GeekGurl2000@GeekGurl2000

open.substack.com/pub/fairforall… Libraries going "woke".

If you have...

a commute that drains your hours

a job that brings no fulfillment

no real friendships

no exercise routine

a marriage marked by poor communication

and a pile of unresolved conflicts weighing you down...

You may be living a life structured for instability, exhaustion, and disconnection.

How many "mental disorders" are the predictable result of a life no one was built to sustain?

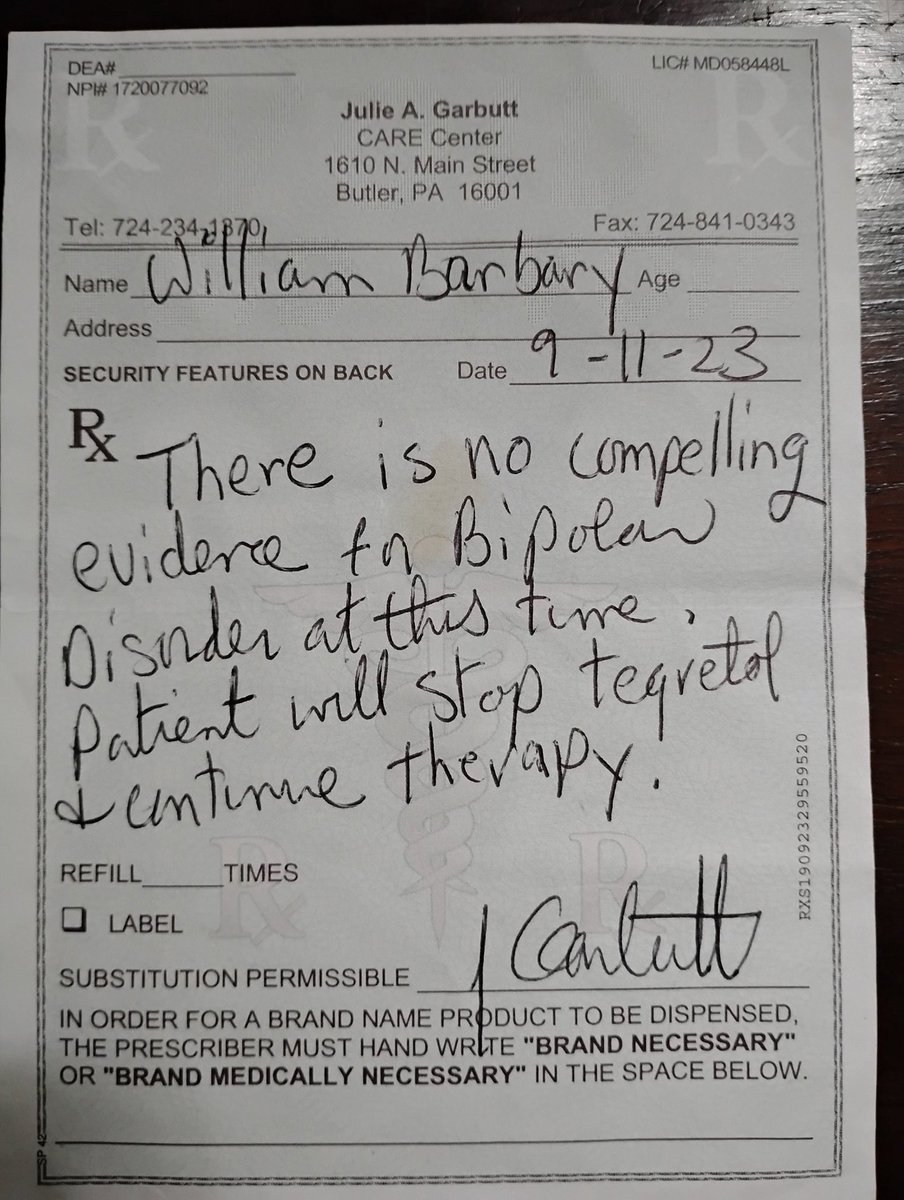

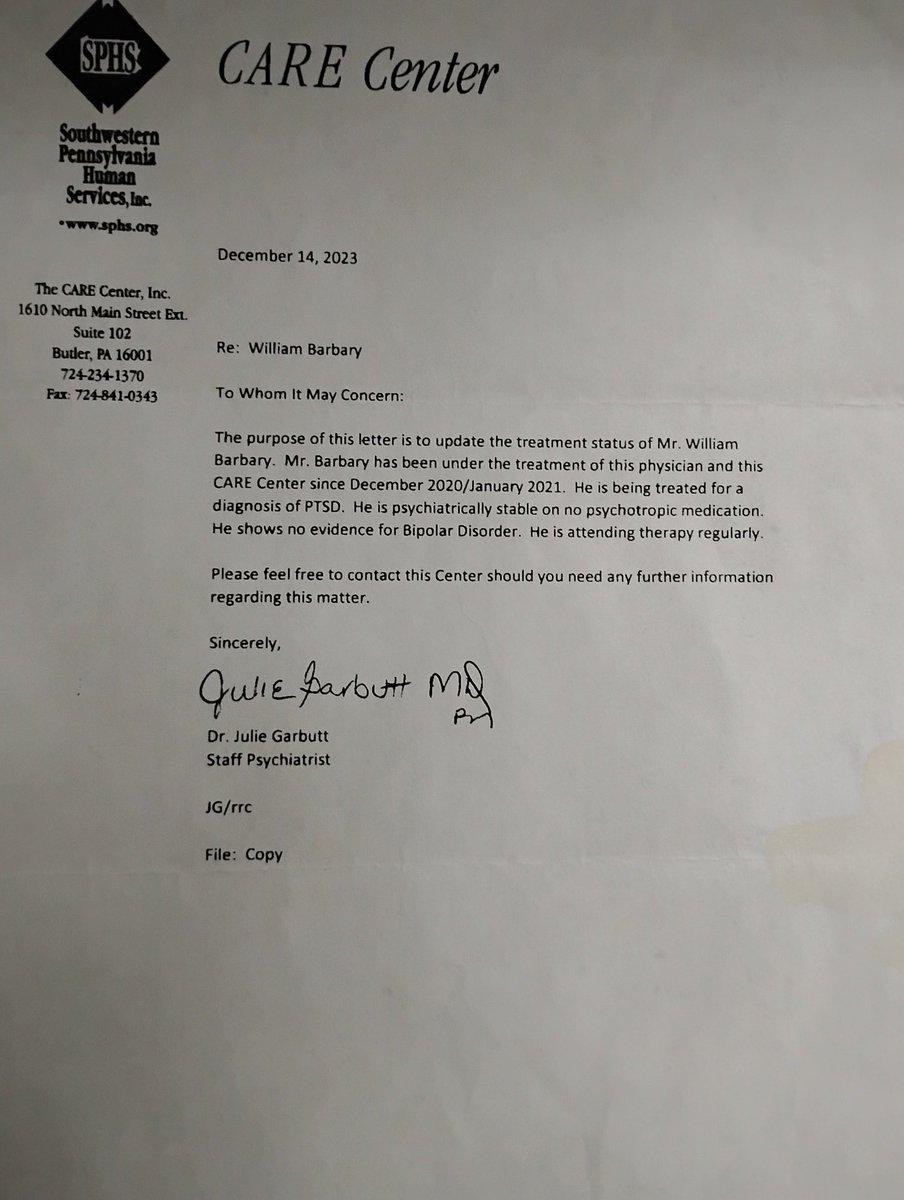

2018 Psych doctor: "You have been an alcoholic for 20 years and you are going through a divorce and custody issues and you quit your job? Even though you came in drunk, you are Bipolar."

2019 Psych nurse: "If you don't take your meds, you won't get out of this psych ward."

2020 CYS: "If you don't take your meds, you won't see your child."

2021 Social Worker: "If you don't take your meds I will tell your probation officer. I can make your life easy or a living hell."

2022 Probation Officer: "If you don't take your meds, you won't get out of jail and will remain in jail for another 14 months. You are a danger to yourself and the community. You have to admit you have a disease. It's not your fault. You have a chemical imbalance. This is something you are going to have to deal with for the rest of your life."

2023 Psychiatrist: "You aren't Bipolar, you have PTSD!!! You were misdiagnosed!!! Our bad. Psychiatry is more of an art than a science."

Me: "That's OK. I didn't mind spending a total of 15 months in jail and a year and a half on house arrest, paying over $20,000 in fines, court costs, and supervised child visits, losing 6 years of proper parent-child bonding, being charged with a DUI from med withdrawal and authorities thinking my meds were opiates, losing my driver's license for a year while trying to run a business, and facing numerous other criminal charges from the effects of the meds. I'm just glad I didn't get that lifelong diagnosis strain of mental illness."

Psychiatrist: "Here are some letters that should make up for all that. Just don't make them public to discredit our "lifelong" diagnose-and-medicate business model. It might give people hope and make them question the legitimacy of the current mental health modalities."

Obviously I am paraphrasing, but some are actual quotes. You can hear the whole story in the first 8 minutes of the video in my pinned post.

#MentalHealthAwareness

#MentalHealthMatters

#MentalHealth

@RatzonAdam Maybe the pursuit of clarity should be undertaken only when one is absolutely ready to address what may be revealed?

@sethlfowler @TheLancet Speaking to friends, family, or AI agents helps.

Some sick people are making a business out of this.

According to new data in @TheLancet , nearly 1.2 billion people were living with a mental disorder in 2023.

My take is that this is indicative of an issue much larger than diagnosis. The mental health industry is growing, and most therapists use approaches that rely on reframes and processing feelings, which manage symptoms rather than getting to the root.

There is a crisis of meaning, of creating rhythms that are full of stability and life, of community that goes beyond acquaintance.

I think we should be asking how we are actually defining a quality life right now, and what mental disorders even are when this many people are considered to have a mental disorder.

Mental health structures cannot scale to contain it when the lives we are living are producing symptoms faster than therapy can manage them.

So, how do you define a quality life, and are we living it?

@hawkngradiation @TheLancet I think the problem is that our magic book also makes it really difficult to stay focused on the material that may be most valuable.

@sethlfowler @TheLancet Aren’t we reading more than ever? We read so much our books only have one magic page with all human knowledge.

@glinsec_com @TheLancet AI agents can be helpful. I don’t think they’re quite there yet, though. Still too agreeable for the time being.

@hawkngradiation @TheLancet The old ways are rooted in timeless truth and experience. One of the painful losses is that people don’t read anymore… like, at all.

@sethlfowler @TheLancet Humanity has lost the art of regulation. Hard times led me to rediscover some of the old ways: Christianity, Stoic philosophy, nondual spirituality, Advaita Vedanta, and meditation. You can start learning with any AI. Say yes to humanity’s lost code and find inner peace.

France bans Zyns and other nicotine pouches - with violators facing 5 years in prison and a shocking fine trib.al/5zR2Slz
