Anusheel Bhushan

181 posts

@sheel_ai

Engineer, hacker, entrepreneur working on AI agents and training the AI engineering workforce of the future

“If you’re not sure you’re a driver, you’re probably a passenger.” Frank Slootman: Snowflake, ServiceNow, Data Domain. One of the best operators of our generation. Knuckle Up with Frank is now live. Full conversation ↓

- 00:49 Introduction
- 01:21 Why being a CEO is a confrontational job
- 03:51 Great people are hungry for hard feedback
- 08:19 Psychographic profiling: how Frank builds compatible teams
- 09:52 Drivers vs passengers: how to tell the difference
- 12:39 Why back-channel references beat interviews every time
- 16:19 "When there's doubt, there's no doubt"
- 20:42 Inside Frank's Tuesday operating cadence
- 22:27 The "go direct" rule that breaks org chart politics
- 26:19 Why bigger goals force better plans
- 31:27 Standards are the real culture
- 38:17 The email Frank wrote every Monday for years
- 41:35 Advice for navigating today's volatility
- 47:25 Facing demons for breakfast at Data Domain
- 54:19 Why Frank fired himself as Snowflake CEO
- 1:05:19 Coming to Silicon Valley "10 years late"
- 1:07:59 Why AI is an industrial-revolution-scale shift
- 1:10:01 Frank's advice to his 25-year-old self

We've raised $5M to build organizational memory. Every company runs on decisions, but as teams grow, the context behind them gets lost. We built Sentra to make sure that never happens again. Start using Sentra for free today!

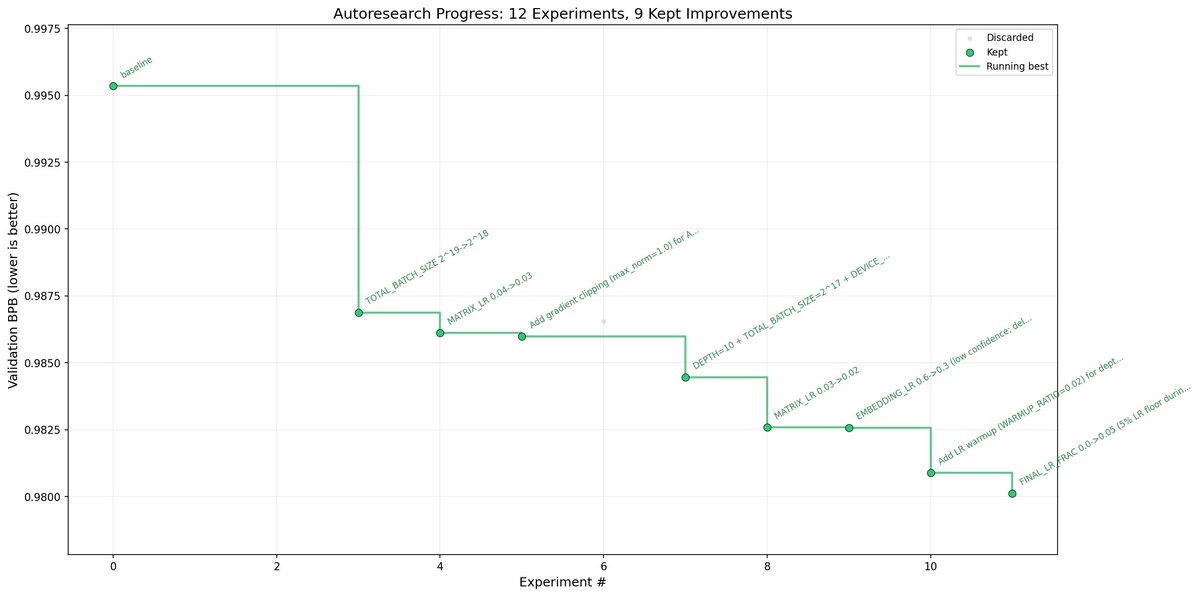

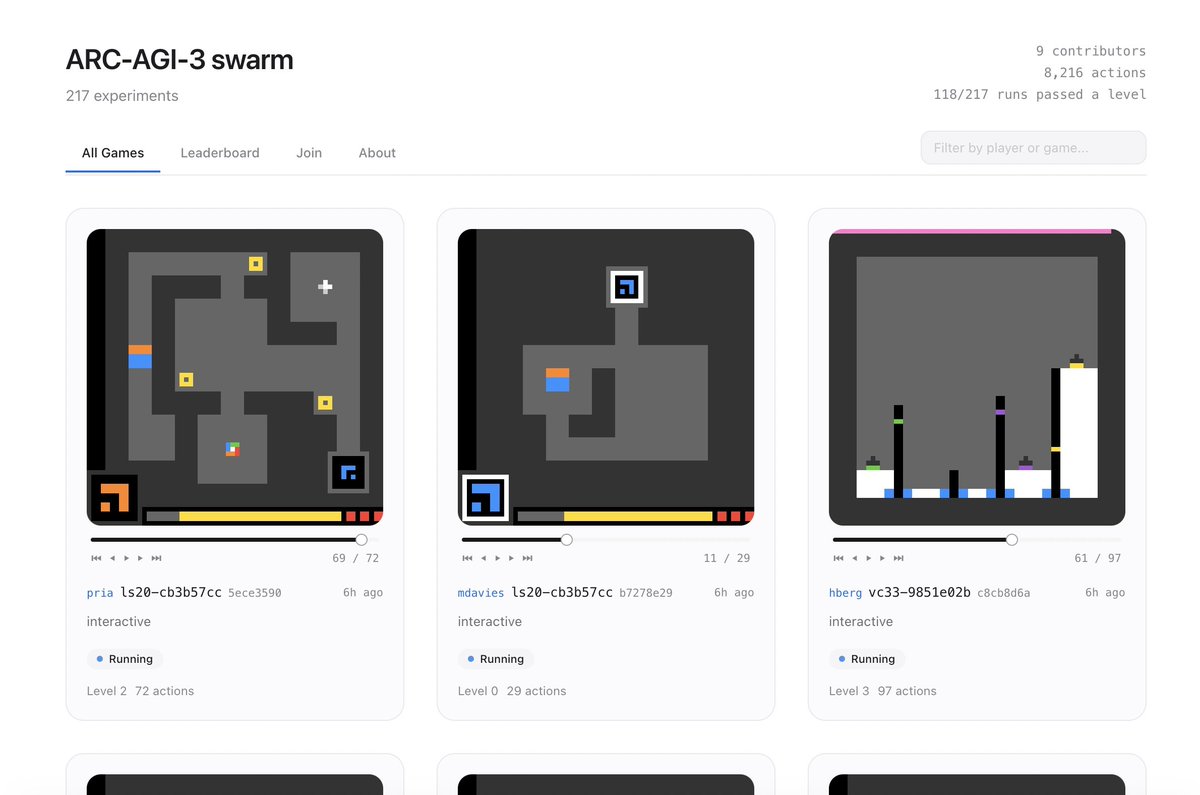

I wrote a multi-agent loop for autoresearch from @karpathy. Result: 9/12 (75%) of experiments improved val_bpb, vs. 15/83 (18%) in the original. It's still running, so stay tuned! Basically, a researcher proposes hypotheses, an implementer edits the code, a reviewer judges the results, and a reflector updates the strategy. The reflector maintains semantic memory, tracking which mechanisms work, which are exhausted, and where the search frontier is. It dynamically rebalances hypotheses among exploitation, new techniques, and bold bets.
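Here is a minimal sketch of how a loop with those four roles could fit together. It assumes each role is a plain Python function standing in for an LLM agent, and that an "experiment" is a stubbed, noisy val_bpb update rather than a real training run. Every name here (`MECHANISMS`, `researcher`, `reflector`, the made-up effect sizes, the 3-try exhaustion rule, the 0.1 floor per bucket) is hypothetical and not taken from the original code or from autoresearch.

```python
"""Hypothetical sketch of a researcher/implementer/reviewer/reflector loop.

Each role is a stub; in a real system each would be an LLM call, and the
implementer would edit and run a training script instead of adding noise.
"""
import random
from dataclasses import dataclass, field

BUCKETS = ("exploit", "new_technique", "bold_bet")

# Hypothetical search space: mechanism -> (bucket, made-up effect on val_bpb).
# Negative effect = improvement. These numbers are illustrative only.
MECHANISMS = {
    "exploit_a": ("exploit", -0.004),
    "exploit_b": ("exploit", -0.002),
    "new_a": ("new_technique", -0.003),
    "new_b": ("new_technique", 0.001),
    "bold_a": ("bold_bet", -0.006),
    "bold_b": ("bold_bet", 0.02),
}


@dataclass
class Memory:
    """Reflector-owned semantic memory: what worked, what is exhausted, the mix."""
    wins: dict = field(default_factory=dict)
    trials: dict = field(default_factory=dict)
    exhausted: set = field(default_factory=set)
    mix: dict = field(default_factory=lambda: {
        "exploit": 0.5, "new_technique": 0.3, "bold_bet": 0.2})


def researcher(mem, rng):
    """Sample a bucket from the current mix, then a non-exhausted mechanism."""
    live = [m for m in MECHANISMS if m not in mem.exhausted]
    if not live:
        return None  # search space exhausted
    bucket = rng.choices(BUCKETS, weights=[mem.mix[b] for b in BUCKETS])[0]
    in_bucket = [m for m in live if MECHANISMS[m][0] == bucket]
    return rng.choice(in_bucket or live)  # fall back to any live mechanism


def implementer(mechanism, baseline, rng):
    """Stub for 'edit the code and train': returns a noisy val_bpb."""
    return baseline + MECHANISMS[mechanism][1] + rng.gauss(0, 0.001)


def reviewer(new_bpb, baseline):
    """Judge the run: lower val_bpb is better."""
    return new_bpb < baseline


def reflector(mem, mechanism, improved, max_tries=3, floor=0.1):
    """Update memory, retire well-explored mechanisms, rebalance the mix."""
    mem.trials[mechanism] = mem.trials.get(mechanism, 0) + 1
    mem.wins[mechanism] = mem.wins.get(mechanism, 0) + int(improved)
    if mem.trials[mechanism] >= max_tries:
        mem.exhausted.add(mechanism)  # tried enough; push the frontier elsewhere
    # Laplace-smoothed success rate per bucket.
    rates = {}
    for b in BUCKETS:
        ms = [m for m in MECHANISMS if MECHANISMS[m][0] == b]
        w = sum(mem.wins.get(m, 0) for m in ms)
        t = sum(mem.trials.get(m, 0) for m in ms)
        rates[b] = (w + 1) / (t + 2)
    total = sum(rates.values())
    # Floor every bucket so bold bets never drop to zero; weights sum to 1.
    mem.mix = {b: floor + (1 - floor * len(BUCKETS)) * rates[b] / total
               for b in BUCKETS}


def run(steps=12, seed=0):
    """Run the loop; returns (final val_bpb, kept improvements, memory)."""
    rng = random.Random(seed)
    mem, baseline, improvements = Memory(), 1.0, 0
    for _ in range(steps):
        mechanism = researcher(mem, rng)
        if mechanism is None:
            break
        new_bpb = implementer(mechanism, baseline, rng)
        improved = reviewer(new_bpb, baseline)
        if improved:
            baseline, improvements = new_bpb, improvements + 1  # keep the change
        reflector(mem, mechanism, improved)
    return baseline, improvements, mem
```

Calling `run(steps=12)` returns the final stubbed val_bpb, how many experiments were kept, and the reflector's memory. The floor in `reflector` is the main design choice here: smoothed per-bucket success rates pull the mix toward whatever is working, while the floor keeps some budget on bold bets so the search keeps exploring.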