Shrini Kulkarni

7.2K posts

@shrinik

What if ... Really? .... So what?

Pune, India · Joined December 2008

179 Following · 1.1K Followers

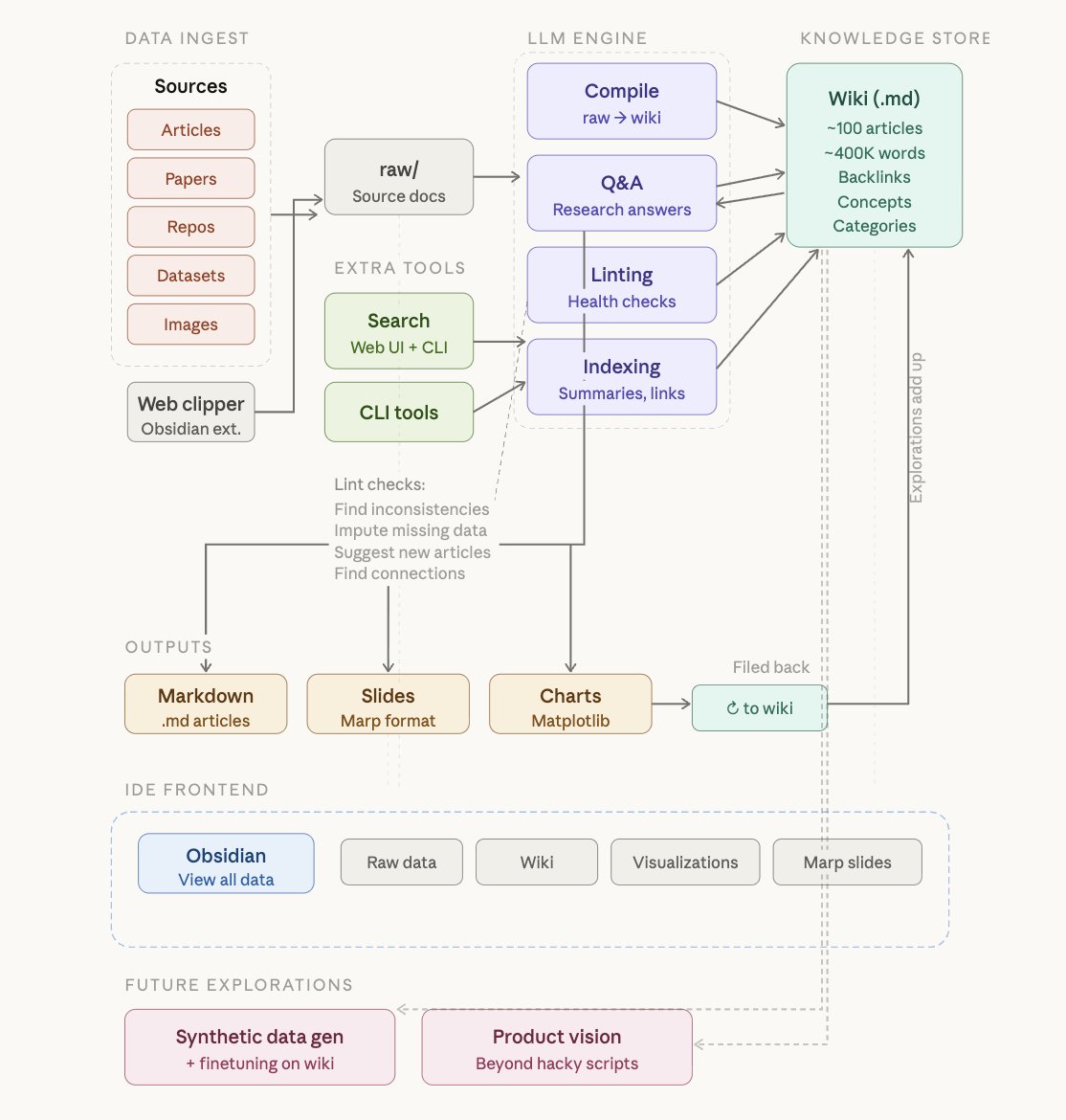

@himanshustwts How is this different from a knowledge graph/ontology? Looks like extended RAG to me. Is that how it is?

IndusInd Bank credit card: your customer service is so bad. First, it is difficult to get through; at times I get a busy tone. Then, after crossing all the hurdles, you make me wait for more than 20 minutes with music that goes on and on.

@MyIndusIndBank Fail.

At the #testtribe conference in Bangalore. Looking forward to meeting friends in the #testing world.

@jess_ingrass @awesome_testers @maaretp Correct. Who should work hard to improve their testing skill set, and who actually does? We are back to the argument about who does it.

@maaretp @awesome_testers @jess_ingrass That is a great way to acknowledge others' condition and express empathy.

Wow, so many testers in #agile? I hope they will be talking about testing ... not writing some code!!!

Lisandra Armas @lisyarmas

Thankful to be considered on this list of amazing testers. ☺️💗 My goal is always to think about how to contribute to the testing community and how to do my bit. 💪 #testing #accessibility #mobile #UX

@QualityFrog @FionaCCharles In the same way that counting the steps you walk is a measure of your physical activity.

Because counting activity in Microsoft apps is a good measure of employee productivity?

What could possibly go wrong?

forbes.com/sites/rachelsa…

@maaretp @johannarothman Everybody's job = whole team's job. Programmers do not (typically) go around saying "let me improve my testing skills." Do they? But testers often boast of programming skills.

@maaretp @johannarothman I have a problem with the thinking that only writing code is testing and that testing is everybody's job. The thought process seems to be that testing does not require any special skills.

@maaretp @johannarothman Thinking of testing as a service keeps us from agile teams. Fair enough. Let us consider testing as a core part of how we develop software. What if agile teams think testing = writing code?

@michaelbolton @xflibble @RachelNotley @jonathan_kohl You mean pants hinder breathing? Pants and masks perform a similar function?

@michaelbolton @xflibble @RachelNotley @jonathan_kohl Personal experience, and arriving at a "conclusion" by reasoning. Covering the nose with some cloth is a hindrance.

@shrinik @xflibble @RachelNotley @jonathan_kohl What have you seen or heard that makes you believe that a mask makes you breathe sub-optimally?

@xflibble @RachelNotley @jonathan_kohl Also consider some long-term effects of masks. Generally, most of us breathe shallowly. With the pollution out there, the amount of fresh air and oxygen is small. Now put on a mask. Is it OK if you breathe sub-optimally for, say, 6 months, about 5 hours a day?

@shrinik @RachelNotley @jonathan_kohl Masks seem to help. A minor inconvenience for the healthy to protect those less healthy. People are voicing their annoyance at selfish assholes.

@maaretp What is the best way to sell a bug (meaning doing good testing to find an elusive, deeply hidden bug and then ...)? Say, "this bug is worth $1000 if left unfixed"?

Online eating ... like online swimming lessons ... in #covid times

The New Yorker @NewYorker

A cartoon by Adam Douglas Thompson.
