siggibecker

5.7K posts

@siggibecker

siggi wyrd https://t.co/GatgKzeiY2…

Düsseldorf, Joined October 2007

50 Following, 422 Followers

Pinned Tweet

siggibecker retweeted

@TomSolidPM @karpathy Co-evolution more in the sense of Mary Watkins's inner dialogue than Licklider's tool sense.

This is the future of knowledge work and most people don't see it yet.

The "second brain" era was about collecting. This next phase is about the LLM actually maintaining and evolving your knowledge for you.

The key insight: folders + markdown + AI beats any proprietary app. Your system outlives every tool.

siggibecker retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
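The backlink part of that "compile" step can be sketched in a few lines. This is a minimal illustration only, not the workflow described above: it assumes articles link to each other with Obsidian-style `[[Page Name]]` links, and the names `extract_links` and `build_backlinks` are hypothetical.

```python
import re
from collections import defaultdict
from pathlib import Path

# Assumption: pages reference each other as [[Page]], [[Page|alias]] or [[Page#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def extract_links(text: str) -> list[str]:
    """Return the target page names of all [[wikilinks]] in the text."""
    return [target.strip() for target in WIKILINK.findall(text)]


def build_backlinks(wiki_dir: Path) -> dict[str, list[str]]:
    """Map each linked page name to the sorted list of pages that link to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for path in wiki_dir.rglob("*.md"):
        source = path.stem  # page name is the file name without .md
        for target in extract_links(path.read_text(encoding="utf-8")):
            if target != source:  # ignore self-links
                backlinks[target].add(source)
    return {page: sorted(sources) for page, sources in backlinks.items()}
```

Calling `build_backlinks(Path("wiki"))` (the directory name is an assumption) returns a plain dictionary that a script or an LLM could write back into each article as a "Linked from" section.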

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
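An index file of the kind mentioned here could look roughly like the output of the sketch below. It is an assumption-laden stand-in: in the workflow above the LLM writes the summaries, whereas this sketch simply reuses the first non-heading line of each article, and `first_summary_line`, `build_index`, and the `index.md` file name are hypothetical.

```python
from pathlib import Path


def first_summary_line(text: str, max_chars: int = 160) -> str:
    """Return the first non-empty, non-heading line, truncated to max_chars."""
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            return line[:max_chars]
    return ""


def build_index(wiki_dir: Path, index_name: str = "index.md") -> str:
    """Build a markdown index with one '[[page]]: summary' bullet per article."""
    entries = []
    for path in sorted(wiki_dir.rglob("*.md")):
        if path.name == index_name:  # do not index the index itself
            continue
        summary = first_summary_line(path.read_text(encoding="utf-8"))
        entries.append(f"- [[{path.stem}]]: {summary}")
    return "# Index\n\n" + "\n".join(entries) + "\n"
```

An agent can read such an index first and then open only the articles whose summaries look relevant, which is one plausible reason a wiki of this size works without a separate retrieval stack.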

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.
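For the slide-show output, a Marp deck is ordinary markdown with `marp: true` in the front matter and `---` between slides. The sketch below shows one way an answer might be rendered into that format; the function name `to_marp` and the slide structure are illustrative assumptions, not part of the setup described above.

```python
def to_marp(title: str, slides: list[tuple[str, list[str]]]) -> str:
    """Render a title plus (heading, bullets) pairs as a Marp markdown deck."""
    # Front matter enables Marp; the title becomes the first slide.
    parts = ["---\nmarp: true\n---\n", f"# {title}\n"]
    for heading, bullets in slides:
        body = "\n".join(f"- {bullet}" for bullet in bullets)
        # A line containing only '---' starts a new slide.
        parts.append(f"---\n\n## {heading}\n\n{body}\n")
    return "\n".join(parts)
```

Writing the returned string to a `.md` file inside the vault makes it viewable next to the rest of the wiki, and the same file can be "filed" back as a regular article.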

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
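Some health checks are purely mechanical and need no LLM at all. The sketch below covers two such checks, links that point at missing pages and pages that nothing links to. It assumes Obsidian-style `[[Page Name]]` links, and `lint_wiki` is a hypothetical name; the LLM-driven checks described above (inconsistent data, missing data) are out of its scope.

```python
import re
from pathlib import Path

# Assumption: pages reference each other as [[Page]], [[Page|alias]] or [[Page#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def lint_wiki(wiki_dir: Path) -> dict[str, list[str]]:
    """Report links to missing pages and pages with no inbound links."""
    pages = {p.stem: p.read_text(encoding="utf-8") for p in wiki_dir.rglob("*.md")}
    linked: set[str] = set()
    broken: list[str] = []
    for name, text in pages.items():
        for target in WIKILINK.findall(text):
            target = target.strip()
            linked.add(target)
            if target not in pages:
                broken.append(f"{name} -> {target}")
    orphans = [name for name in pages if name not in linked]
    return {"broken_links": sorted(broken), "orphans": sorted(orphans)}
```

A report like this can be handed to the LLM as a to-do list: broken links are candidates for new articles, and orphans are candidates for new connections.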

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
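A "small and naive" search engine over markdown files can be as simple as term counts weighted by rarity. The sketch below is one such hypothetical version with a minimal CLI entry point; it is not the tool mentioned above, and the scoring formula, function names, and file layout are all assumptions.

```python
import math
import re
import sys
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(docs: dict[str, str], query: str, top_k: int = 5) -> list[tuple[str, float]]:
    """Rank documents by the sum of term count times a smoothed inverse document frequency."""
    counts = {name: Counter(tokenize(text)) for name, text in docs.items()}
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        df = sum(1 for c in counts.values() if term in c)
        if df == 0:
            continue  # term appears nowhere in the wiki
        idf = math.log(1 + len(docs) / df)
        for name, c in counts.items():
            if term in c:
                scores[name] = scores.get(name, 0.0) + c[term] * idf
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:top_k]


# CLI usage (assumed): python search.py WIKI_DIR "query terms"
if __name__ == "__main__" and len(sys.argv) > 2:
    wiki = Path(sys.argv[1])
    corpus = {p.stem: p.read_text(encoding="utf-8") for p in wiki.rglob("*.md")}
    for page, score in search(corpus, sys.argv[2]):
        print(f"{score:.3f}\t{page}")
```

Printing one tab-separated result per line keeps the output easy for an LLM agent to parse when the script is exposed as a command-line tool.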

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

siggibecker retweeted

@karpathy i made a markdown package to help ppl become power users of obsidian x ai where i operationalized some of what you describe: superpaper.ai

there's also a skill for superpaper you can install using `npx skills add superinterface-labs/superpaper`

siggibecker retweeted

Trump is not America gone wrong.

He is America gone honest.

A nation built on stolen land, slave labor, permanent war, and industrial myth was never going to age into wisdom.

It was always going to rot into narcissism.

It was always going to confuse bullying with leadership.

It was always going to mistake wealth for virtue and violence for destiny.

Trump is that rot speaking in capital letters.

siggibecker retweeted

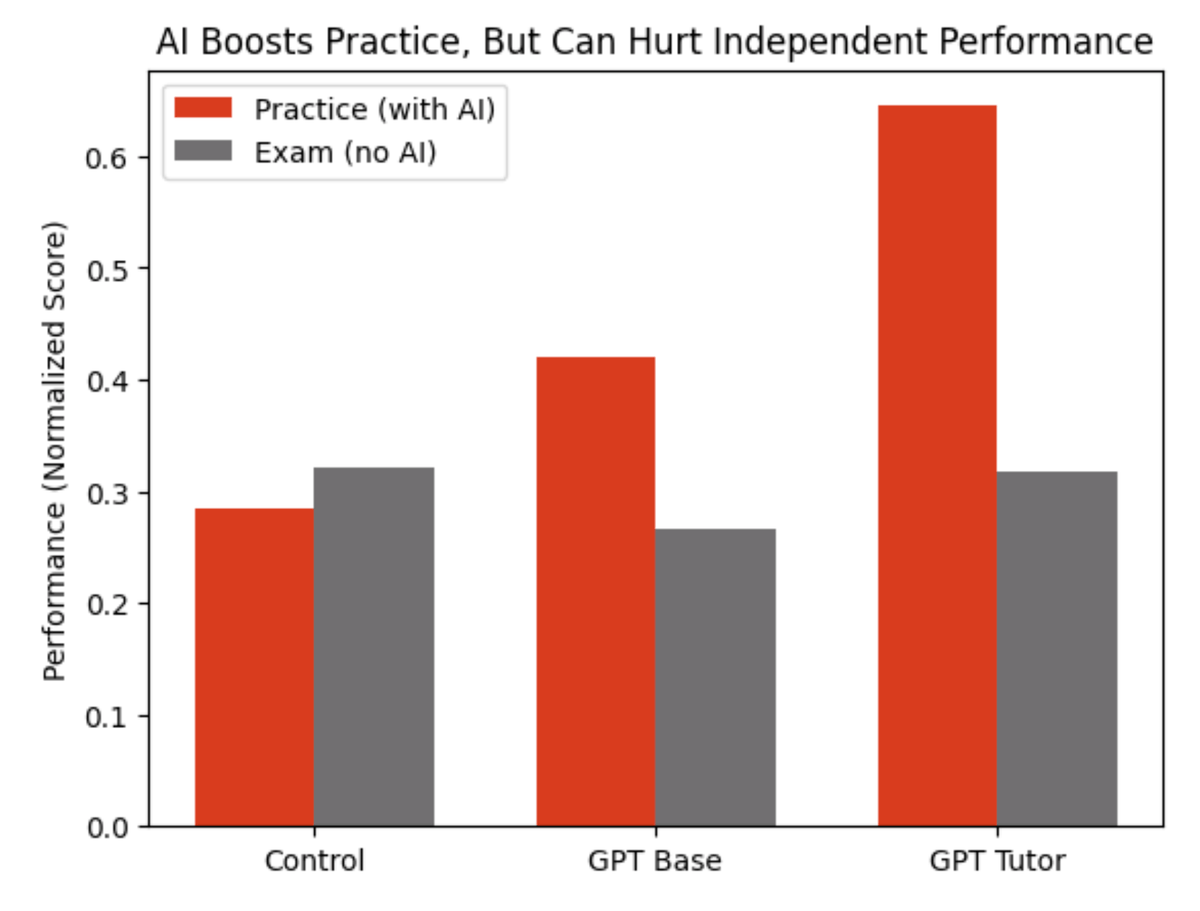

Wharton researchers gave nearly 1,000 high school math students access to ChatGPT during practice problems

Result: ChatGPT is the perfect trap.

Look at the red bars.

Students with ChatGPT crushed their practice sessions.

The basic ChatGPT group solved more problems and those on the "tutor" version did even more.

Now look at the gray bars. That's the exam.

No AI allowed.

The ChatGPT group scored 17% worse than kids who practiced with zero technology.

And the fancy tutor version?

No better than working alone.

The researchers called AI a "crutch."

When they analyzed what students actually typed into ChatGPT, most of them just wrote - “What’s the answer?”

The kicker: students who used ChatGPT believed it hadn't hurt their learning.

They were confidently wrong.

This is the AI trap in education.

Outsourcing your thinking.

Of course, lots of half-baked AI literacy curricula being rolled out in schools now

Let’s of course ignore that basic literacy (the ability to read) is possible for <50% of 8th graders

Source: Bastani et al. (2025), "Generative AI Can Harm Learning," PNAS

siggibecker retweeted

Caligula level.

There is a mathematics to this.

As the malignant narcissist disintegrates, he must destroy everything around him because he must always be dominant in every context—no exceptions.

The closer he feels to his own end, the more compelled he is to end the world.

Jim Stewartson, Decelerationist 🇨🇦🇺🇦🇺🇸 @jimstewartson

Erich Fromm invented the term malignant narcissism: “It is a madness that tends to grow in the lifetime of the afflicted person. The more he tries to be a god, the more he isolates himself from the human race; this isolation makes him more frightened, everybody becomes his enemy, and in order to stand the resulting fright he has to increase his power, his ruthlessness, and his narcissism.”
