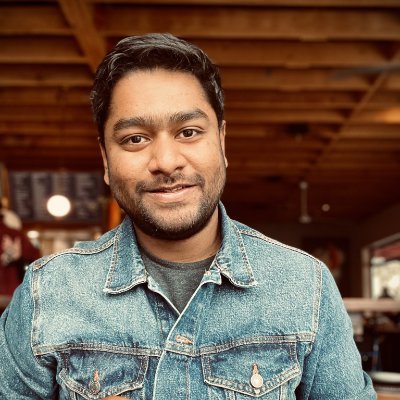

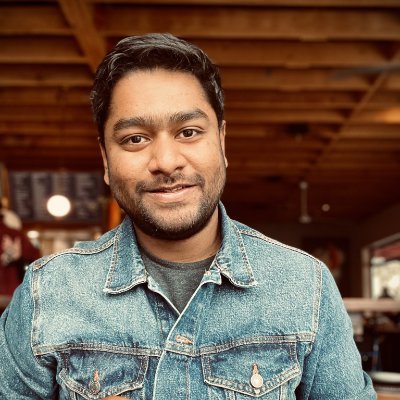

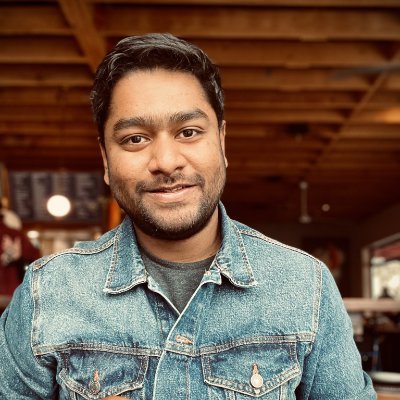

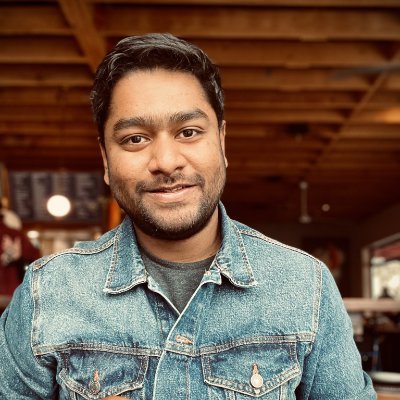

Vijay Veerabadran

@simple_cell_

Research @Meta @RealityLabs | Prev: Ph.D. from @CogSciUCSD

Introducing DroPE: Extending the Context of Pretrained LLMs by Dropping Their Positional Embeddings

pub.sakana.ai/DroPE/

We are releasing a new method called DroPE to extend the context length of pretrained LLMs without the massive compute costs usually associated with long-context fine-tuning.

The core insight of this work challenges a fundamental assumption in Transformer architecture. We discovered that explicit positional embeddings like RoPE are critical for training convergence but eventually become the primary bottleneck preventing models from generalizing to longer sequences.

Our solution is radically simple: We treat positional embeddings as a temporary training scaffold rather than a permanent architectural necessity.

Real-world workflows like reviewing massive code diffs or analyzing legal contracts require context windows that break standard pretrained models. While models without positional embeddings (NoPE) generalize better to these unseen lengths, they are notoriously unstable to train from scratch. Here, we achieve the best of both worlds by using embeddings to ensure stability during pretraining and then dropping them to unlock length extrapolation during inference.

Our approach unlocks seamless zero-shot context extension without any expensive long-context training. We demonstrated this on a range of off-the-shelf open-source LLMs. In our tests, recalibrating any model with DroPE requires less than 1% of the original pretraining budget, yet it significantly outperforms established methods on challenging benchmarks like LongBench and RULER.

We have released the code and the full paper to encourage the community to rethink the role of positional encodings in modern LLMs.

Paper: arxiv.org/abs/2512.12167
Code: github.com/SakanaAI/DroPE
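
To make the "scaffold" idea above concrete, here is a toy sketch (not the DroPE code, and not taken from the paper) of causal attention logits computed with and without rotary embeddings. The function names `rope` and `attention_logits` and the `use_rope` flag are hypothetical, chosen only for illustration; the recalibration step the post mentions is not shown.

```python
import math

def rope(vec, pos, base=10000.0):
    """Rotate consecutive pairs of `vec` by angles proportional to `pos` (RoPE)."""
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        theta = pos * base ** (-i / d)  # per-pair rotation frequency
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out

def attention_logits(queries, keys, use_rope):
    """Causal attention logits; position enters only through `rope` when enabled."""
    d = len(queries[0])
    logits = []
    for m, q in enumerate(queries):
        qm = rope(q, m) if use_rope else q
        row = []
        for n, k in enumerate(keys[: m + 1]):  # causal mask: keys up to position m
            kn = rope(k, n) if use_rope else k
            row.append(sum(a * b for a, b in zip(qm, kn)) / math.sqrt(d))
        logits.append(row)
    return logits

# Same content vector at every position, so any variation comes from position alone.
v = [1.0, 0.0, 0.5, 0.5]
with_rope = attention_logits([v] * 4, [v] * 4, use_rope=True)
without_rope = attention_logits([v] * 4, [v] * 4, use_rope=False)
```

With `use_rope=True` the logits depend on the relative offset between query and key; with `use_rope=False` the only positional signal left is the causal mask, which is the NoPE setting the post refers to.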

How can we build more robust and safe world models? 🤔 Our position paper, World Models Must Live in Parallel Worlds 🌍, tries to answer this question. Find us at NeurIPS 2025: 🗓️ Dec 7 📍 Upper Level Ballroom 20D #neurips2025 w/ @Sahithya_Ravi @VeredShwartz, Leonid Sigal 1/5

🚨 Appearing as a #NeurIPS2025 D&B spotlight (~3%): Could VLMs guess your next prompt for a wearable AI agent? We present WAGIBench, the 1st large-scale Goal Inference Benchmark for Wearable Agents w/ audiovisual, digital & longitudinal context! Paper: arxiv.org/abs/2510.22443 1/