SakaiSec


If your skill depends on dynamic content, you can embed !`command` in your SKILL.md to inject shell output directly into the prompt. Claude Code runs the command when the skill is invoked and swaps the placeholder inline, so the model only sees the result!
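To make the substitution step concrete, here is a minimal sketch of how a !`command` placeholder could be swapped for its shell output before the text reaches the model. This is not Claude Code's actual implementation: the names `PLACEHOLDER`, `run_shell`, and `render_skill` are hypothetical, and the regex assumes a command contains no backticks.

```python
import re
import subprocess
from typing import Callable

# Hypothetical pattern: matches !`command`, capturing the text between the backticks.
PLACEHOLDER = re.compile(r"!`([^`]+)`")


def run_shell(command: str) -> str:
    """Run a command through the shell and return its stdout without trailing whitespace."""
    result = subprocess.run(command, shell=True, capture_output=True, text=True)
    return result.stdout.strip()


def render_skill(text: str, run: Callable[[str], str] = run_shell) -> str:
    """Replace every !`command` placeholder in the text with that command's output.

    The runner is injectable so the substitution can be exercised without a real shell.
    """
    return PLACEHOLDER.sub(lambda match: run(match.group(1)), text)
```

Calling `render_skill("Current branch: !`git branch --show-current`")` would return the line with the placeholder replaced by whatever the command prints, which mirrors the post's point that the model sees only the result and never the command itself.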

Technology proven ✅ Market fit proven ✅ Now it’s time to SCALE 🏹🚀 Together with @dfjgrowth and @northzoneVC, we just announced a $120M funding round, valuing XBOW at over $1B. Our Autonomous Hacker was trained by elite human security experts. In 2025, we proved it can safely and effectively operate in real production environments. Today, over 100 customers worldwide already trust XBOW to protect their most critical assets. As AI allows attackers to move faster, defenders need the same continuous speed and capabilities. This milestone allows us to bring autonomous offensive security to the entire industry at the exact moment it’s needed most. Read more: bit.ly/4lA033O

xLSTM Distillation: arxiv.org/abs/2603.15590 Near-lossless distillation of quadratic Transformer LLMs into linear xLSTM architectures enables cost- and energy-efficient alternatives without sacrificing performance. xLSTM variants of instruction-tuned Llama, Qwen, & Olmo models.

Japanese-specialized LLM "Rakuten AI 3.0" now available watch.impress.co.jp/docs/news/2093…

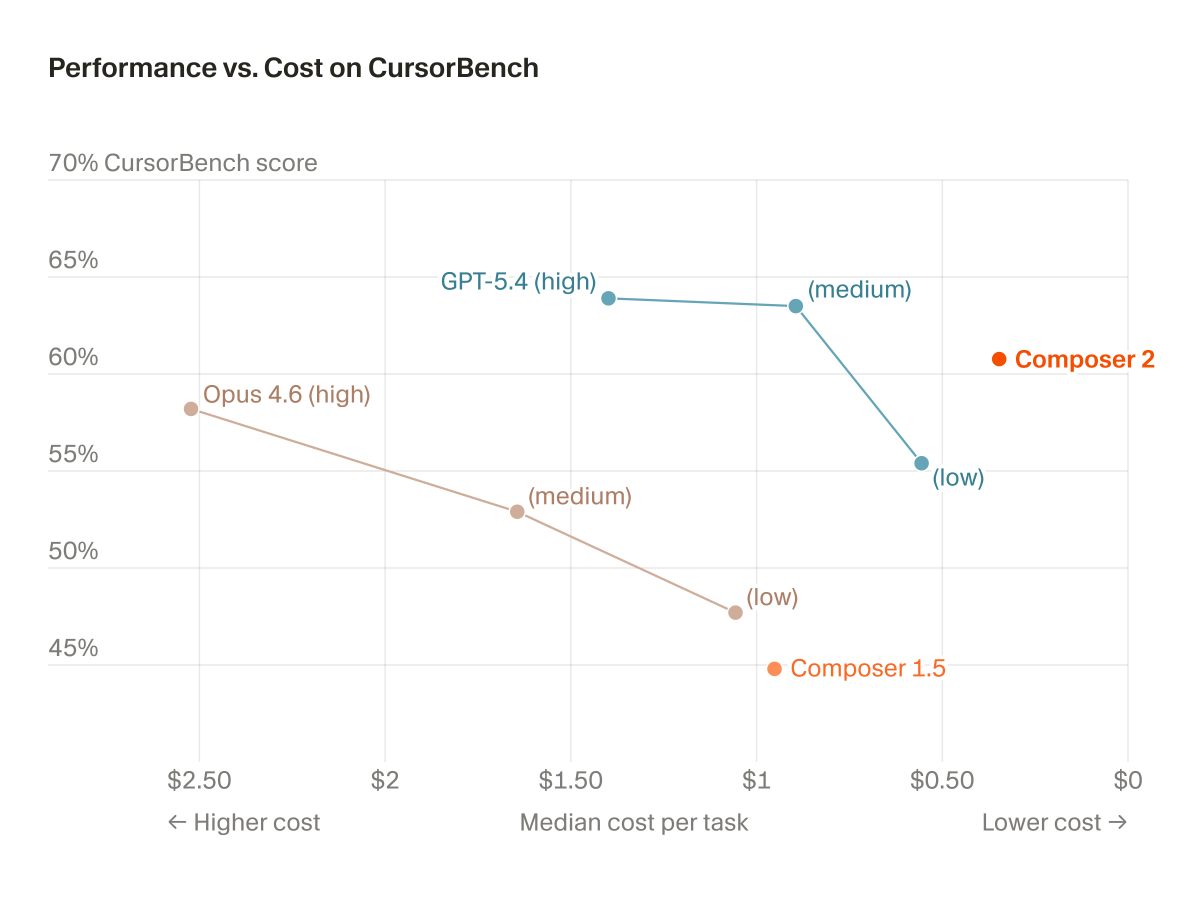

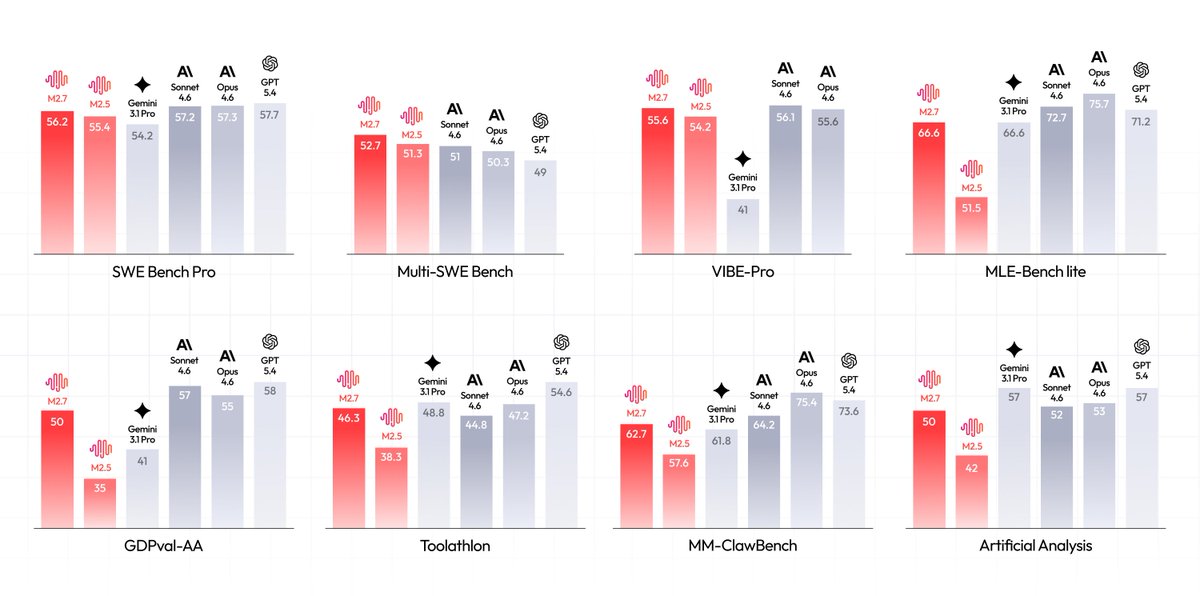

MiniMax M2.7 is out on web! Check it out here: agent.minimax.io

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.
🔗 Full report: github.com/MoonshotAI/Att…
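The contrast between uniform accumulation and attention over preceding layers can be illustrated with a toy sketch. This is only an illustration of the general idea and not the report's formulation: the per-layer `query` vector, the dot-product scoring, and the function names are assumptions made for the example, and Block AttnRes is not modeled.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def uniform_residual(outputs: List[Vector]) -> Vector:
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(outputs[0])
    return [sum(out[d] for out in outputs) for d in range(dim)]


def attention_residual(outputs: List[Vector], query: Vector) -> Vector:
    """Assumed variant: softmax-weighted sum of earlier outputs.

    Each earlier output is scored by its dot product with a hypothetical
    learned query, so the mixing weights depend on the inputs themselves.
    """
    scores = [sum(q * x for q, x in zip(query, out)) for out in outputs]
    weights = softmax(scores)
    dim = len(outputs[0])
    return [sum(w * out[d] for w, out in zip(weights, outputs)) for d in range(dim)]
```

With a zero query the weights are equal and the result is the mean of the earlier outputs; a query aligned with one output pushes nearly all the weight onto it, which is the selective retrieval the post describes.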