Bakhtier Gaibulloev

630 posts

@slashmsu

I replace manual work with AI agents & pipelines | Helping orgs migrate to AI-first | 15+ yrs distributed systems | Claude Code / MCP / agent architecture

Aachen, Germany · Joined March 2016

127 Following · 79 Followers

Pinned Tweet

@svpino raw conversation dumps inherit the same 70%-loss problem after /clear because the model still has to re-derive what mattered. structure the handoff like a schema: pinned files, decision log, current cursor, open todos. now /clear compounds across sessions.
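A minimal sketch of what a schema-shaped handoff file could look like, assuming the four sections named above map to plain fields. The `Handoff` class and its `to_markdown` method are hypothetical names for illustration, not part of Claude Code.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Hypothetical handoff record; the four fields mirror the sections named above."""
    pinned_files: list[str] = field(default_factory=list)
    decision_log: list[str] = field(default_factory=list)
    current_cursor: str = ""
    open_todos: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the record as a markdown file to point the next session at."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        sections = [
            ("Pinned files", bullets(self.pinned_files)),
            ("Decision log", bullets(self.decision_log)),
            ("Current cursor", self.current_cursor or "(unset)"),
            ("Open todos", bullets(self.open_todos)),
        ]
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections) + "\n"
```

Writing the result of `to_markdown()` to a file before /clear gives the next session fixed headings to read instead of a raw transcript.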

I'm spending so much time managing context, and I hate it.

Here is a tip for you: don't use /compact any more in Claude Code. There's a much better option.

/compact takes your entire conversation history in memory and compresses it into a summary. This frees up tokens, but you'll lose a ton of important details (sometimes up to 70% of what matters!).

On top of that, the summarized context is still tied to the current session and won't persist beyond it.

Here is what you should do instead:

1. Dump the entire conversation history to a markdown file

2. Call /clear to clear the context

3. Start your next prompt by pointing to the markdown file

There are several advantages to doing this:

1. You don't lose any valuable information

2. You control what's in the file

3. The context persists beyond the current session

In summary, when you hit a context limit, do a *handoff*, not a *cleanup*.

@emollick deployment lane is the real product. microsoft optimises the model-plus-tenant boundary: rag, citations, audit trails. openai optimises the model-plus-chat boundary: turn quality, latency. same weights run different value functions over the output.

@ArthurLillyblad @emollick the schema-compression claim is the load-bearing one. concrete: a 1500-line diff and a 50-line diff hit the same review queue under the same attention budget. compress submissions to a contracts-changed table plus call sites touched. reviewer throughput tracks producer again.

@simonw reviewer attention is the rate limit. zig spends that budget on contributor onramp. ai pull requests consume the queue without producing future contributors, so the trade is a supply collapse. quality of the code never enters that equation.

The Zig project's rationale for their blanket ban on AI-assisted contributions makes a lot of sense to me - for them, time spent reviewing PRs isn't about the code, it's about growing new contributors for the future of the project simonwillison.net/2026/Apr/30/zi…

@ID_AA_Carmack 32-bank cliff. at stride 512 every warp lane hits the same shared-memory bank, so a tiled solver serializes its column reads 32-wide. 511 falls on a non-multiple-of-32 stride and dodges it.
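The bank arithmetic can be checked with a small model, assuming 32 banks that are each one 4-byte word wide, 4-byte elements, and lane `i` of a warp reading word index `i * stride`. The function names are illustrative only.

```python
from collections import Counter

NUM_BANKS = 32  # assumption: 32 shared-memory banks, one 4-byte word each
WARP_SIZE = 32


def banks_hit(stride_words: int) -> list[int]:
    """Bank index touched by each lane when lane i reads word i * stride_words."""
    return [(lane * stride_words) % NUM_BANKS for lane in range(WARP_SIZE)]


def conflict_degree(stride_words: int) -> int:
    """Largest number of lanes landing on one bank (1 means conflict-free)."""
    return max(Counter(banks_hit(stride_words)).values())
```

Under these assumptions `conflict_degree(512)` is 32 because 512 is a multiple of 32, so every lane maps to bank 0. `conflict_degree(511)` is 1 because 511 mod 32 is 31, which is coprime with 32, so the lanes spread across all banks.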

@svpino The biggest payoff here is the stop condition. Models self-assess 'done' unreliably. A failing test gives the agent an external verdict it can't talk itself past. Tokens upfront, hours saved chasing phantom-completions.

Agentic coding is the ideal mechanism for enforcing TDD and being strict with it.

Here is a summary of the workflow I coded as part of my implementation skill in Claude Code:

1. Before writing any code, write a test that fails

2. Run the test and ensure it fails

3. If you get an error, fix the test until it runs but fails

4. Once the test fails, write the code

5. Run the test and ensure it succeeds

6. If the test fails, go back to step 4

7. Once the test succeeds, verify that the task is complete

8. If the task is not complete, go back to step 1

This loop forces the agent to write tests before writing any code, and tries to keep each test as simple as possible.

This is token hungry upfront, but it has many advantages:

1. Simpler code

2. More modular code

3. Fewer bugs and regressions later

4. Easier to troubleshoot
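The eight-step loop above can be sketched as a small driver, assuming the agent's actions are passed in as callbacks. All names here (`tdd_loop`, `Outcome`, the callback parameters) are hypothetical, and this is not the actual skill file, only one way to read the steps.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    PASS = "pass"
    FAIL = "fail"
    ERROR = "error"  # the test could not run at all


def tdd_loop(
    write_test: Callable[[], None],
    fix_test: Callable[[], None],
    run_test: Callable[[], Outcome],
    write_code: Callable[[], None],
    task_complete: Callable[[], bool],
    max_cycles: int = 50,
) -> int:
    """Run red-green cycles until the task is complete; return the cycle count.

    The inner loops rely on the callbacks making progress; a real agent
    would want its own budget on them as well.
    """
    cycles = 0
    for _ in range(max_cycles):
        write_test()                        # step 1
        outcome = run_test()                # step 2
        while outcome is Outcome.ERROR:     # step 3: make the test run
            fix_test()
            outcome = run_test()
        if outcome is Outcome.PASS:
            continue                        # test never failed, so write another
        while outcome is not Outcome.PASS:  # steps 4-6
            write_code()
            outcome = run_test()
        cycles += 1
        if task_complete():                 # steps 7-8
            return cycles
    raise RuntimeError("cycle budget exhausted before the task was complete")
```

The only exit that counts as success is a passing test followed by `task_complete()`, which keeps the stop condition outside the model's own judgment of "done".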

@rubenhassid yes. each entry pairs a banned phrase with a same-meaning line i wrote that didn't read as ai. the model gets two anchors per entry. revisions hold for 3-4 sentences instead of one.

Stop writing 500-word prompts that don't work.

This 35-word prompt writes better than all of them:

"I want to [TASK] for [SUCCESS CRITERIA]. Read my anti-AI writing style file first. It contains every known pattern of AI writing I want to avoid. Apply these as rules to everything you write for me."

That's it. But you need to set it up first. Here's how:

Step 1. Go to how-to-ai.guide.

Step 2. Subscribe for free. Don't pay anything.

Step 3. Open my welcome email.

Step 4. Hit the automatic reply button inside.

Step 5. Click on the Notion link. Go to .md files.

Step 6. Download ANTI AI WRITING STYLE .md file.

Step 7. Upload it to Claude. Prompt:

I want to [TASK] for [SUCCESS CRITERIA]. Ask me questions before starting so we define our plan first.

After Claude's answer, follow up with this prompt:

"Audit your text using the anti-ai-writing-style .md file from your folder."

✦ Here's why your prompts don't work (red flags):

"Don't use jargon."

"Don't sound like an AI."

"Don't use buzzwords or filler."

"Avoid passive voice."

"Be conversational, not robotic."

These are everywhere. LinkedIn posts. Emails.

They sound thorough. The output is still garbage.

Your prompt says "don't" 14 times. The model forgets half by sentence three. You're fighting the AI with a wall of "don'ts." It doesn't work at scale.

The fix is counterintuitive.

Stop telling the AI what to avoid.

Give it a file that shows what to avoid.

The model reads 1,168 lines of bad patterns, internalizes them, and writes clean.

500-word prompt → still robotic.

Small prompt + 1 file → reads as a human wrote it.

Get my entire setup (free): ruben.substack.com/p/its-not-x-it…

♻️ Repost to help one person stop sounding like AI.

Ruben Hassid @rubenhassid

@GergelyOrosz leaving-github stories usually split on tooling. agents pull on the api 100x more than humans, so flakiness compounds before the ui notices. for an agent-heavy project the carrying cost of a broken host shows up before the migration cost does.

WOW.

Mitchell Hashimoto voting with his feet: Ghostty is leaving GitHub.

"I can't code with GitHub anymore. I'm sorry. After 18 years, I've got to go. I'd love to come back one day, but this will have to be predicated on real results and improvements, not words and promises."

Mitchell Hashimoto @mitchellh

Ghostty is leaving GitHub. I'm GitHub user 1299, joined Feb 2008. I've visited GitHub almost every single day for over 18 years. It's never been a question for me where I'd put my projects: always GitHub. I'm super sad to say this, but it's time to go. mitchellh.com/writing/ghostt…

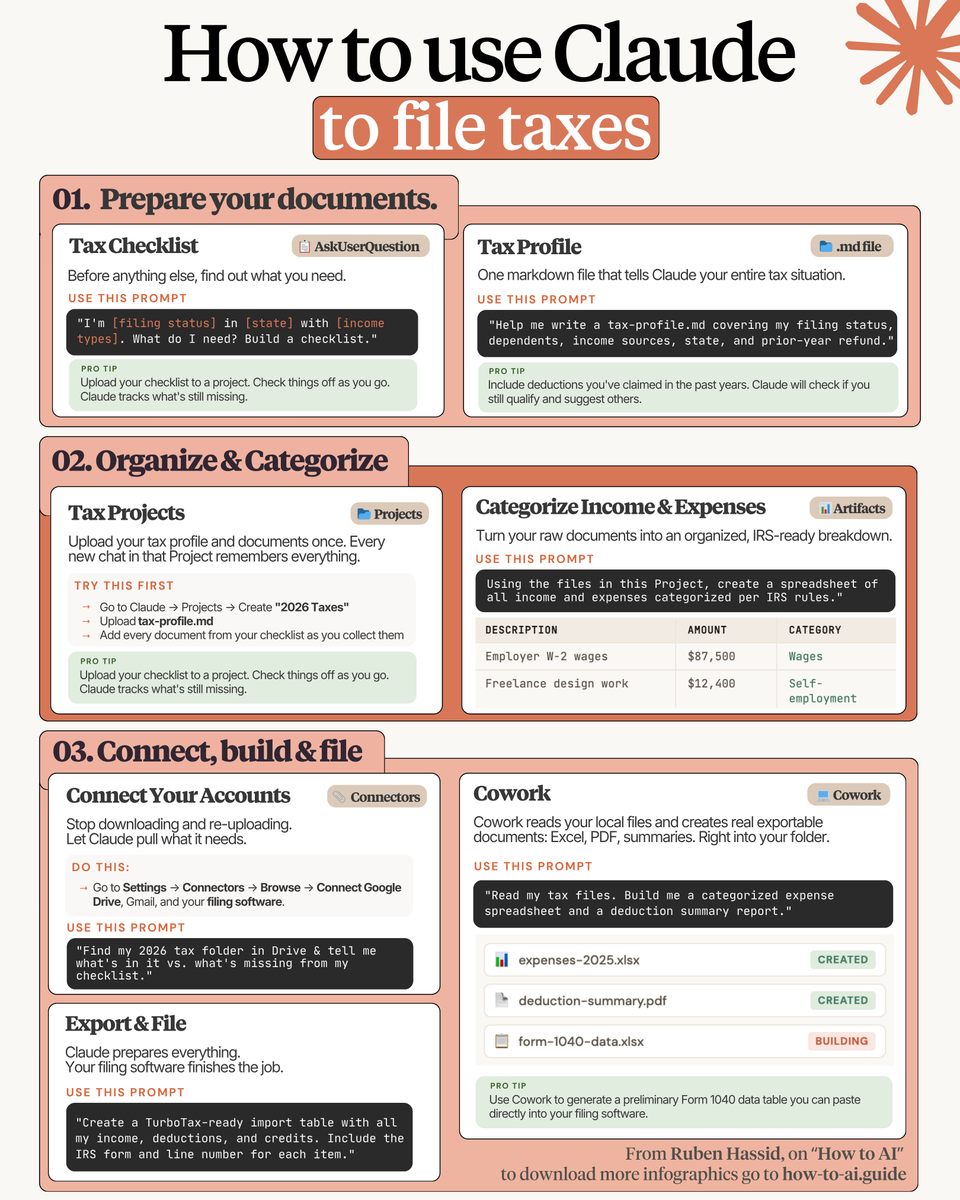

@rubenhassid the .md-as-context-file pattern works here because tax categories are stable across years. the same file rolls forward unchanged, only the year-specific income and deduction sections take a delta. the file accumulates into a personal ledger more than a config.

How to file your 2026 taxes with Claude:

File 1: tax-profile .md (Your tax identity)

1. Create a Google doc called "TAX-PROFILE"

2. Add your filing status, state, and dependents.

3. List income sources (W-2, freelance, dividends)

4. List deductions you've claimed in past years.

5. Add last year's refund or amount owed.

6. Save it as a .md file.

File 2: The Tax Checklist

7. Go to Claude → Projects → Create "2026 Taxes"

8. Upload your tax-profile .md.

9. Prompt: "I'm [filing status] in [state] with [income types]. Build me a checklist."

10. Add every document from that checklist.

11. Every new chat in that Project remembers it all.

File 3: Connectors (Claude inside your tools)

12. Settings → Connectors → Connect Drive + Gmail

13. Stop downloading and re-uploading.

14. Open Cowork. Point it at your tax folder.

15. Prompt: "Create a TurboTax-ready import table with all my income, deductions, and credits. Include the IRS form and line number for each item."

16. It exports a real .xlsx and .pdf into your folder.

17. Paste it into TurboTax. Done.

I made 100+ infographics like this.

If you want to download all of them, just:

1. Go to how-to-ai.guide.

2. Then enter your work email.

3. It will ask you to pay or not. Don't pay.

4. Wait 2 min. Then open the welcoming email.

5. Click on my Notion library with everything in it.

Disclaimer: you will be subscribed to my newsletter. It's free, and always will be. Some people pay only to join my community & get answers faster.

500,000+ readers enjoy it twice a week.

To always stay ahead of the AI curve.

To master AI, before it masters you.

♻️ Repost to help one person file smarter this year.

Ruben Hassid @rubenhassid

@MengTo the split makes the corpus version-stable. design.md captures taste and rolls forward for years. html witnesses churn every model release, regenerated from the same skills. you can diff one layer without dragging the others through it.

@svpino Self-routing is one of the few places where letting the model overestimate is correct. The cost of starting too weak is a redo from scratch. The cost of starting too strong is a few extra tokens. Asymmetric loss says round up.

You can let Claude Code decide the level of thinking it needs to solve a problem.

I like to use Sonnet as the default model, but I tell Claude to decide which model to use every time I spin off an agent to solve a problem:

"Choose the weakest model that can complete the task."

You can also give it specific guidelines. For example:

"""

• Use the smallest and cheapest model to create small functions, small test cases, or do anything mechanical.

• Use the strongest model you have to make architectural decisions about the code base.

"""

This is great whenever you are giving the model a list of tasks and you don't have a chance to specify a different model for each task.
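As a rough illustration of the two guidelines, here is a sketch of a routing rule, assuming tasks arrive tagged with a kind. The tier labels and task kinds are hypothetical placeholders, not real model identifiers, and the `uncertain` flag follows the round-up argument from the reply above.

```python
TIERS = ["small", "default", "strong"]  # hypothetical tier labels, weakest first

# Hypothetical task kinds; a real setup would define its own.
MECHANICAL = {"small_function", "small_test", "rename", "format"}
ARCHITECTURAL = {"architecture", "api_design"}


def choose_tier(task_kind: str, uncertain: bool = False) -> str:
    """Pick the weakest tier expected to finish the task.

    When the estimate is uncertain, round up one tier: a redo from
    scratch costs more than a few extra tokens on a stronger model.
    """
    if task_kind in ARCHITECTURAL:
        index = 2
    elif task_kind in MECHANICAL:
        index = 0
    else:
        index = 1
    if uncertain:
        index = min(index + 1, len(TIERS) - 1)
    return TIERS[index]
```

For example, `choose_tier("small_test")` returns `"small"`, while `choose_tier("small_test", uncertain=True)` returns `"default"`.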

@GergelyOrosz @Pragmatic_Eng the $170M is a cardinality story. per-tag and per-user dimensions multiply fast at openai scale. dropping label cardinality on hot metrics and head sampling traces usually moves the bill more than any vendor migration.
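A small arithmetic sketch of why cardinality dominates, assuming the worst case where every label combination occurs. The label names and counts are invented for illustration and are not OpenAI's or Datadog's figures.

```python
from math import prod


def series_count(label_cardinalities: dict[str, int]) -> int:
    """Upper bound on distinct time series for one metric:
    the product of the number of values each label can take."""
    return prod(label_cardinalities.values())


# Made-up numbers, chosen only to show the multiplication.
before = {"endpoint": 50, "status": 5, "region": 10, "user_id": 100_000}
after = {name: count for name, count in before.items() if name != "user_id"}

print(series_count(before))  # 250000000
print(series_count(after))   # 2500
```

Dropping the single per-user label cuts the bound by a factor of 100,000 here, which is the kind of change a vendor migration alone does not produce.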

Waiting for the CFO to notice the ~$170M Datadog bill after this...

(yes, really! As shared in @Pragmatic_Eng back in October... though safe to assume OpenAI has reduced this since)

Berber Jin @berber_jin1

NEW: openai missed key revenue and growth targets in recent months, prompting its cfo and board to reassess its data-center strategy

ChatGPT was supposed to hit 1bn weekly active users last year but missed that target wsj.com/tech/ai/openai…

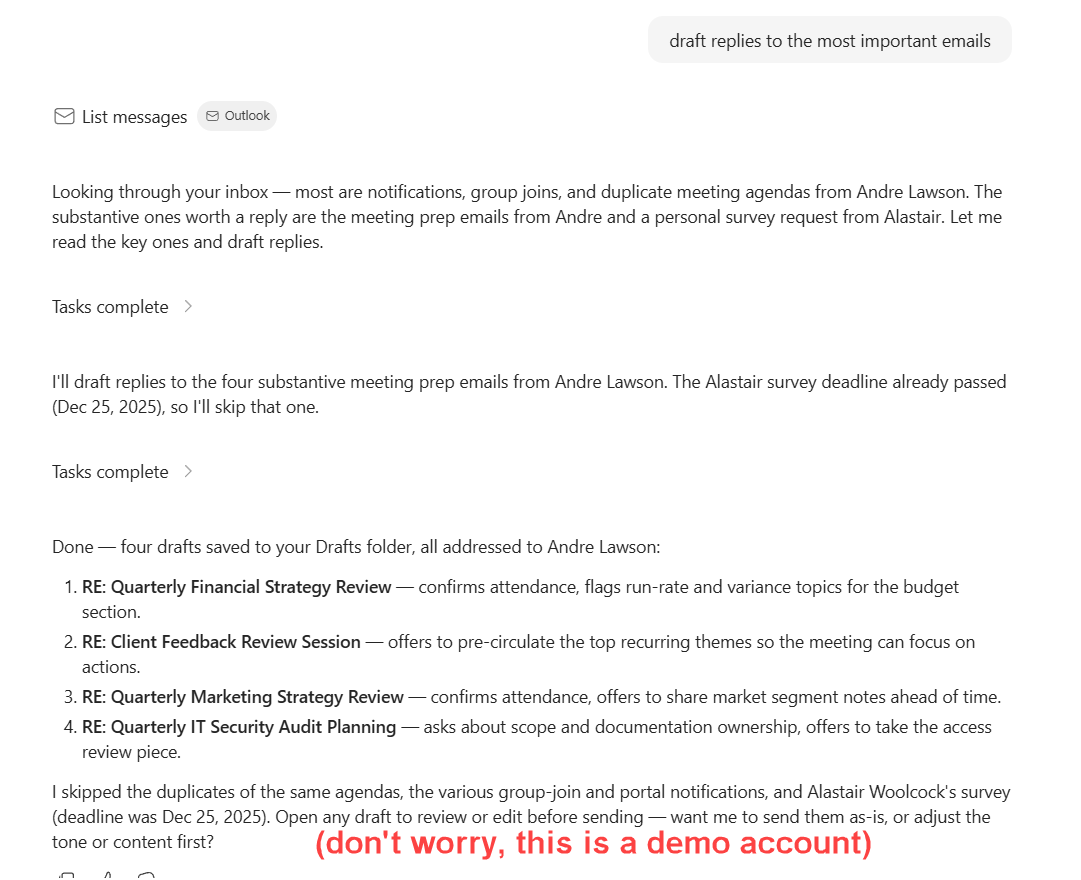

@emollick ux awkwardness tracks data model. outlook agent writes to drafts, you read the inbox, the seam shows. cowork reads and writes the same files you have open, so the handoff disappears. shape of the data layer determines whether the agent feels native.

@svpino the deeper lock-in lives in prompt format and tool-call schema. swap providers but keep prompts tuned to one model and quality regresses. portable layer is the task schema, input plus tool-calls. prompts get rewritten per model.

@simonw the AGI clause was always unfalsifiable. no working benchmark for the threshold means no party could trigger or contest it without a downstream legal fight. removing it just lets the contract reflect what the engineering side already knew.

Today OpenAI announced that "Revenue share payments from OpenAI to Microsoft continue through 2030, independent of OpenAI’s technology progress"

That "independent of OpenAI’s technology progress" fragment appears to mean that the weird AGI clause is now deceased simonwillison.net/2026/Apr/27/no…
