Scott Cory

490 posts

@slessans

robot intelligence @openai

Greg Brockman Testifies Stake In OpenAI Worth Nearly $30 Billion—Despite Investing Nothing go.forbes.com/RN1F1T

We’re talking about Goblins. openai.com/index/where-th…

𝐁𝐞𝐬𝐬𝐞𝐦𝐞𝐫 𝐏𝐫𝐞𝐝𝐢𝐜𝐭𝐬: 𝐑𝐨𝐛𝐨𝐭𝐢𝐜𝐬 𝐚𝐧𝐝 𝐩𝐡𝐲𝐬𝐢𝐜𝐚𝐥 𝐀𝐈 🤖
1. We're in the GPT-2.5 moment for robotics. Capabilities are real, but the gap between lab performance and field deployment remains wide.
2. Scaling laws are emerging. Data is expensive, capital is the moat. World models may be the shortcut.
3. Talent concentration will crown winners quickly. This is not a market where 50 companies win.
4. Near-term value will accrue to full-stack, vertically integrated players, not pure-play foundation model companies.
5. Defense robotics will produce the first $50B+ IPOs in the category.
6. There will be no robotics bubble. In fact, not enough capital is flowing into the industry.
Dive in 🦾 bvp.com/atlas/bessemer…

what are the biggest moats in robotics these days?

Codex can’t make cheesecake yet and that makes me a tiny bit sad. Maybe one day

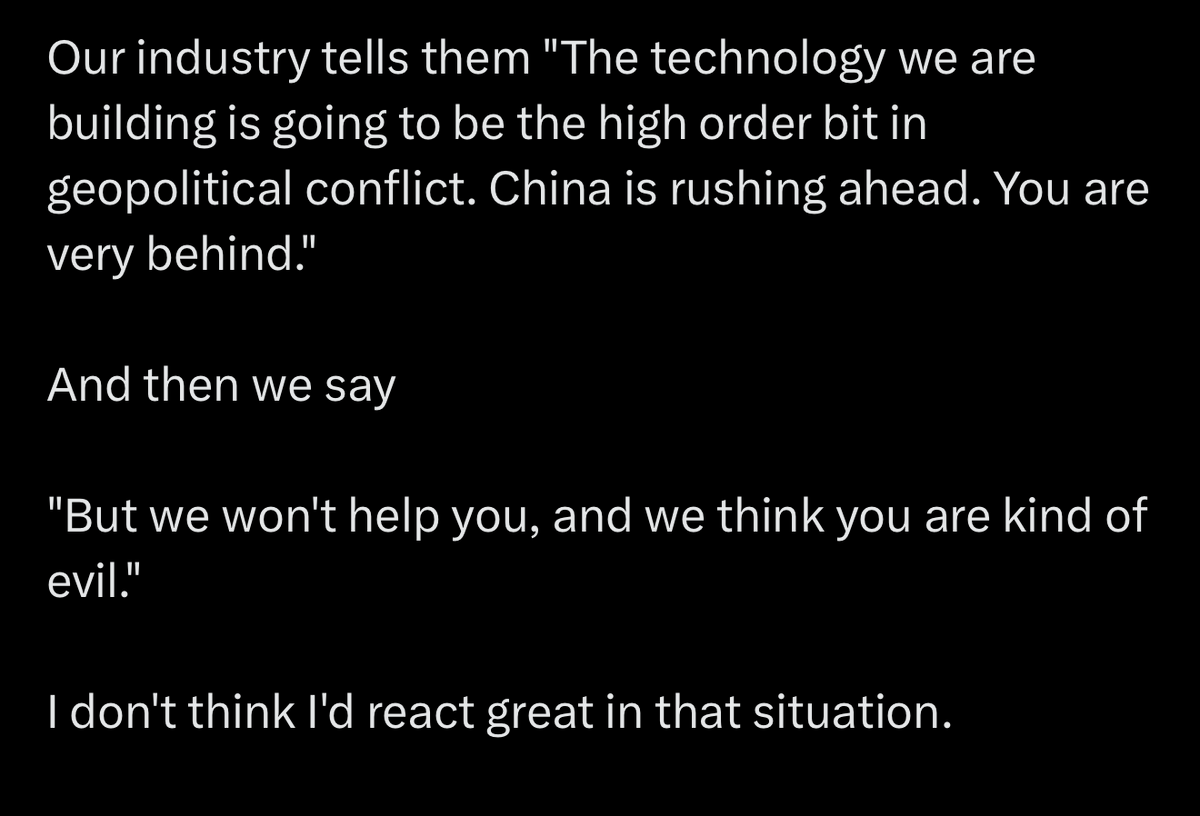

Some thoughts (long tweet, sorry). I would prefer that we focus first on using AI in science, healthcare, education, and even just making money, rather than in the military or law enforcement. I am no pacifist, but too many times national security has been used as an excuse to take away people's freedoms (see the Patriot Act). I am very worried about governments using AI to spy on their own people and consolidate power. I also think our current AI systems are nowhere near reliable enough to be used in autonomous lethal weapons. I would have preferred to take it slower with classified deployment, but if we are going to do it, it is crucial that we maintain the red lines of no domestic surveillance and no autonomous lethal weapons. These are widely held positions, and they are codified in laws and regulations. They should be stipulated in any agreement, and (more importantly) verified via technical means. I think the terms of this agreement, as I understand them, are in line with these principles, which are also held by other AI companies. I hope the DoW will offer them the same conditions. Regardless, a healthy AI industry is crucial for U.S. leadership. Whether or not relations have soured, there is zero justification to treat Anthropic - a leading American AI company whose founders are deeply patriotic and care very much about U.S. success - worse than the companies of our adversaries. It appears to me that much of this week's drama has been more about style and emotions than about substance. I hope that people can put this behind them and come together for the benefit of our country.

BREAKING: Sam Altman told OpenAI employees at an all-hands meeting on Friday afternoon that a potential agreement is emerging with the Department of War to use the startup’s AI models and tools, according to a source present at the meeting and a summary of the meeting seen by Fortune. The contract has not yet been signed. The meeting came at the end of a week where a conflict between Secretary of War Pete Hegseth and OpenAI rival Anthropic burst into public acrimony, ending with the apparent end of Anthropic’s contracts with the Pentagon and with the federal government in general. Altman said the government is willing to let OpenAI build their own “safety stack”—that is, the layered system of technical, policy, and human controls that sit between a powerful AI model and real-world use—and that if the model refuses to do a task, then the government would not force OpenAI to make it do that task. fortune.com/2026/02/27/ope…

I’m joining OpenAI to work on World Simulation and Robotics, after 3.75 years at FAIR working on SAM and Llama. I’m thrilled to explore how visual perception, world models, and robotics can come together to build physical intelligence.