Sneha

221 posts

@SnehaRevanur

founder @EncodeAction 🇺🇸

BREAKING: Suspect throws Molotov cocktail at OpenAI CEO Sam Altman's home, according to company spokesperson

Scoop: OpenAI is backing an Illinois state AI bill that would shield AI labs from liability for critical harms caused by their AI models—such as mass deaths or financial disasters—as long as they weren't intentional and the labs have published safety reports on their website.

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

The heads of the big AI labs continue to insist that their products are going to take all your jobs, and also pose various catastrophic risks

🚨🚨@sama tells me he feels such URGENCY about the power of coming AI models that @OpenAI is unveiling a New Deal for superintelligence - ideas to wake up DC. He says AI will soon be so mindbending that we need a new social contract 👇 Altman's top 6 ideas axios.com/2026/04/06/beh…

Some of the most underinvested areas in frontier biology that could accelerate civilizational progress:
- Cheap, large-scale DNA synthesis (writing entire chromosomes or full organisms)
- Real-time, non-destructive RNA sequencing in living cells
- Highly accurate AI-powered polygenic scores for complex traits (disease risk, cognition, longevity) → enabling full genome design
- Ultra-precise, multiplex genome editing (far beyond CRISPR) with minimal off-target effects, scalable across millions of cells
- Safe, efficient, tissue-specific in vivo delivery systems
- Safe and effective human germline engineering
- Accelerated clinical trials via testing on decedents (with consent)
- Next-gen human enhancement: muscle, cognition, mood — beyond GLP-1s
- Ectogenesis / artificial wombs
Who’s actually building in these areas? Drop names, companies, or researchers below 👇

The British Government is a complicated beast. Dozens of departments, hundreds of public bodies, more corporations than one can count... Such is its complexity that there isn't an org chart for it. Well, there wasn't... Introducing ⚙️Machinery of Government⚙️

Very interesting. Economists and AI experts have very similar forecasts on what AI will be able to do in 20 years. But the AI experts think it will have a much bigger effect on the economy than the economists do. Why? Because economists study this stuff 🤷♂️

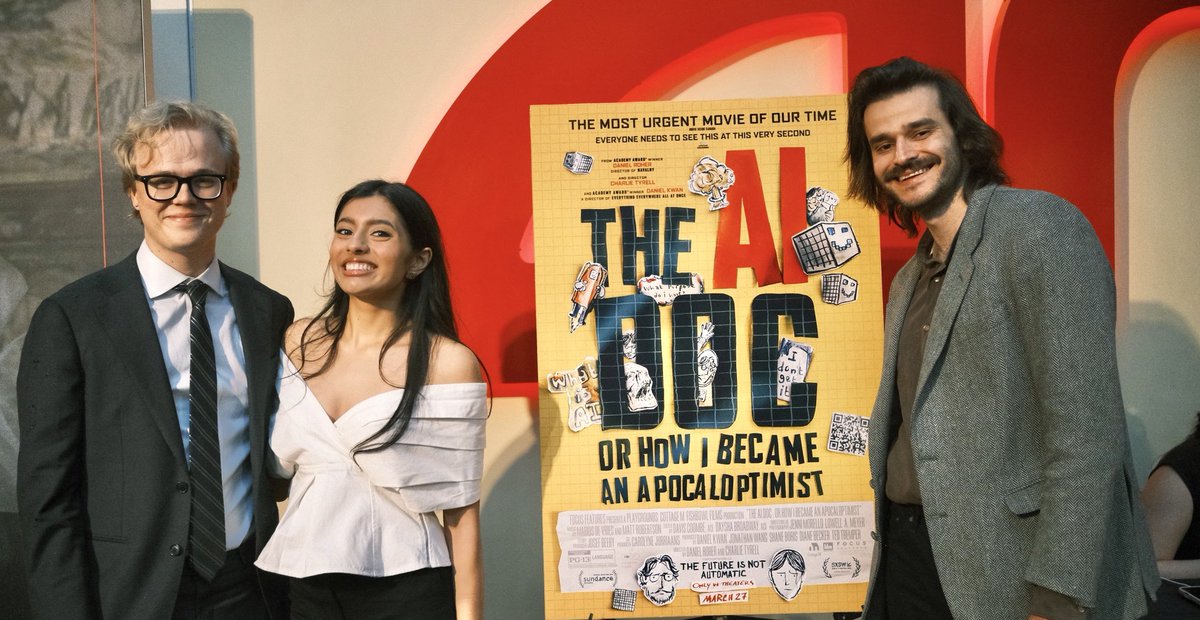

I’ve been to AMC Georgetown twice. Once in 2022 to watch Everything Everywhere All At Once, and again this weekend to watch the Everything Everywhere All At Once director’s new movie about AI. This time, I got to see myself on the screen. When I was interviewed for @theaidocfilm in fall 2024, I was cautiously optimistic. It is really hard to make an evergreen movie about the fastest changing technology ever, and to feature a bunch of people who disagree with each other (intensely) and yet make them all proud. But the filmmakers killed it. The AI Doc is informative and moving and also just a genuinely fun watch. My message - that there’s a bridge humanity must cross to reach an amazing future, and we can act urgently to safely get to the other side - was represented well. I didn’t feel pigeonholed or caricatured at all. There is no better feeling than seeing my friends and family all fired up from a movie that masterfully distills what I’ve been talking their heads off about for years. The AI Doc is truly a must watch. Go run to a theater near you - I’m excited to hear what you think :)

8) Is permissionless innovation a partisan right-wing thing? No, permissionless innovation is not a partisan term or movement. At least it shouldn’t be. In my book and other writings, I have pointed out how the Clinton administration’s 1997 Framework for Global Electronic Commerce is probably the most concise articulation of permissionless innovation that any government has ever promulgated. “The Internet should develop as a market driven arena not a regulated industry,” it noted, while “governments should avoid undue restrictions on electronic commerce.” It also said “parties should be able to enter into legitimate agreements to buy and sell products and services across the Internet with minimal government involvement or intervention.” Finally, “where governmental involvement is needed,” the Framework continued, “its aim should be to support and enforce a predictable, minimalist, consistent and simple legal environment for commerce.” That's the permissionless innovation vision in a nutshell. People on opposite sides of the political spectrum are often united in the belief that permissionless innovation is crucial to prosperity and human flourishing. For example, while they both once worked at PayPal, Reid Hoffman and David Sacks went down very different paths politically after that. Hoffman became the co-founder of LinkedIn and a leading supporter of Democrats and President Biden. By contrast, Sacks became a successful venture capitalist and a strong supporter of Donald Trump, going on to serve as AI and crypto czar in the Trump administration. They disagree bitterly about many issues. Despite their differences, however, both Hoffman and Sacks agree that permissionless innovation powered the digital revolution and can propel the AI revolution next. “Permissionless innovation in AI is working more effectively than ever,” Reid Hoffman argued in a 2025 tweet. “It's what will keep the US at the forefront of AI development.
It is arguably the most important time to move quickly. Anti-tech critics who insist on hitting the brakes, full stop, are misguided,” he argued. David Sacks concurs, noting in a late 2025 podcast that, “the thing that has really made Silicon Valley special over the past several decades is permissionless innovation.” By contrast, what is being contemplated for AI “is an approval system for both software and hardware” where “you have to go to Washington to get permission before you release a new model.” This would “drastically slow down innovation and make America less competitive,” Sacks argued. At the same time, however, the concept of permissionless innovation has also come under fire from technocrats on both left and right. Left-leaning scholars at Brookings celebrate what they see as “the end of permissionless innovation,” and progressive academics have attacked the concept relentlessly on many different grounds. Meanwhile, some conservatives such as Trump-appointed FTC commissioner Mark Meador decry permissionless innovation as “a progressive impulse, not a conservative one.” He argues it “is antithetical to the conservative’s considered preservation of custom and tradition and our commitment to the rule of law.” Sometimes the concept of permissionless innovation has even come under fire from groups that nominally support expanding innovation opportunities, but find something distasteful about the term, at least as they (mis)conceptualize it. For example, two top officials at the Foundation for American Innovation say permissionless innovation amounts to little more than a “legitimizing facade for anarcho-capitalists, tech bros, and cynical corporate flacks” and represents a “shallow ideological slogan.” And this comes from a group with "innovation" in its title! There are two things that generally unify the varied critics of permissionless innovation.
The first is a fundamental misunderstanding of what the term represents, or an intentional effort to equate it with anarchism or a self-serving corporate agenda, even though it is nothing of the sort. Properly understood, permissionless innovation is about creating a policy environment conducive to new entry, entrepreneurialism, creative destruction, and openness to ongoing disruption of the status quo. It is all too often the case that established players and “corporate flacks” are the ones standing in the way of progress. I’ve often joked with people that, when I retire, I will be writing one final book entitled: “Why Businesses Make the Worst Capitalists.” I’ve spent decades fighting corporations, trade associations, and other special interests who only care about defending their own narrow interests, and not a broader environment of innovation freedom. They are every bit as bad as the extremist academic reactionaries who rail against permissionless innovation and, in many cases, those private interests do more damage because of how politically connected they are. True defenders of permissionless innovation understand that our greatest fight is often against those who would use the power of government to protect themselves from technological change while throwing their competitors under the bus. Yet, for whatever reason, many critics of permissionless innovation like to rail against the term when they see cronyist corporations or special interests gain advantage using political leverage. That is a plainly incorrect understanding of the term. The second factor that often unifies opponents of permissionless innovation is a technocratic impulse to control the future according to some sort of grand blueprint or elitist design. 
In The Future and Its Enemies, Postrel explained how the opponents of dynamism (another word for what permissionless innovation embodies) are unified by a strong distaste of “a future that is dynamic and inherently unstable” and that is full of “complex messiness.” The critics simply cannot tolerate that inherent messiness and, therefore, they look to soothe us “with the reassurance that some authority will make everything turn out right,” she argued. “They promise to make the world safe and predictable, if only we will trust them to design the future, if only they can impose their uniform plans,” Postrel noted. This is precisely why we see “horseshoe theory” at work in such a major way in modern tech policy debates. At some point, the reactionaries and technocrats on the edges of the political spectrum bend around and meet at a destination called CONTROL. Of course, they all have a different central blueprint, and disagree about the motivations and methods for how to get to that final destination. But, nominally, they all hate the idea of permissionless innovation because it embraces the benefits of “complex messiness,” bottom-up evolutionary processes, spontaneous order, and freedom of choice. Paternalistic elitists just cannot tolerate any of that because it runs counter to their desired control plans for society.
