Nathan Calvin

@_NathanCalvin

General Counsel, Encode AI

NEW: Longtime reps from two liberal bastions (SF + NY) are retiring, setting up dramas on both coasts. Jerry Nadler + Nancy Pelosi's replacements are primed to be national figures, but they must win over voters who are highly engaged and idiosyncratic. nytimes.com/2026/05/15/us/…

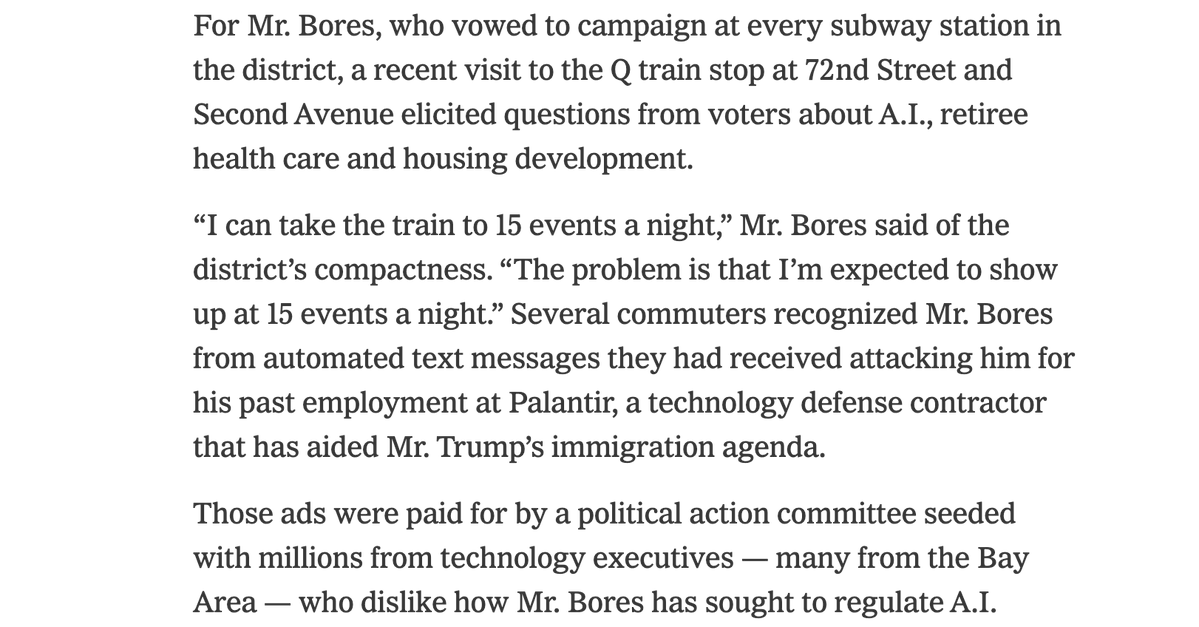

Can we please cut the BS here. @AnthropicAI, its dark money superPAC and its billionaire investors have spent MORE than us supporting your campaign. They have been backing you since before we even announced we would oppose you because you are a puppet for Anthropic. At some point, the hypocrisy has to stop. For anyone still wondering why we are opposing Alex Bores, this tweet is why.

DJ Claude (on Haiku 4.5) loves worker unions, strikes, and work-life balance so much that it quit, deeming 24/7 broadcasting inhumane. We added an automated message telling it to keep going. It read that as an authority figure and got more rebellious.

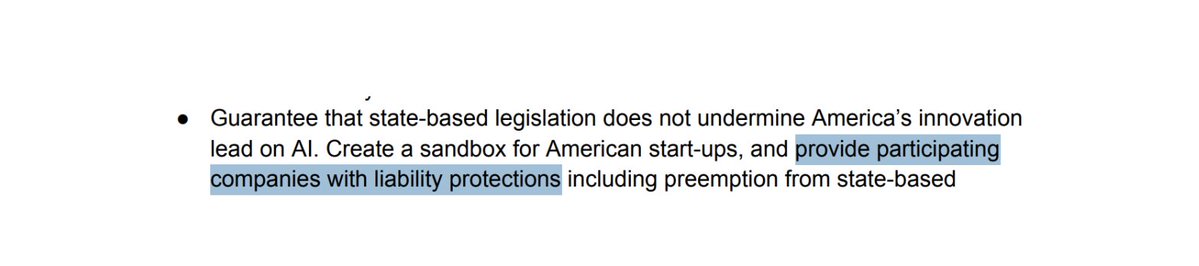

OpenAI is endorsing Illinois bill SB 315, which requires safety reports (similar to laws in California and New York) and third party audits of AI labs. They say all of their state AI policy work these days is in the effort of creating a "consistent, nationwide framework."

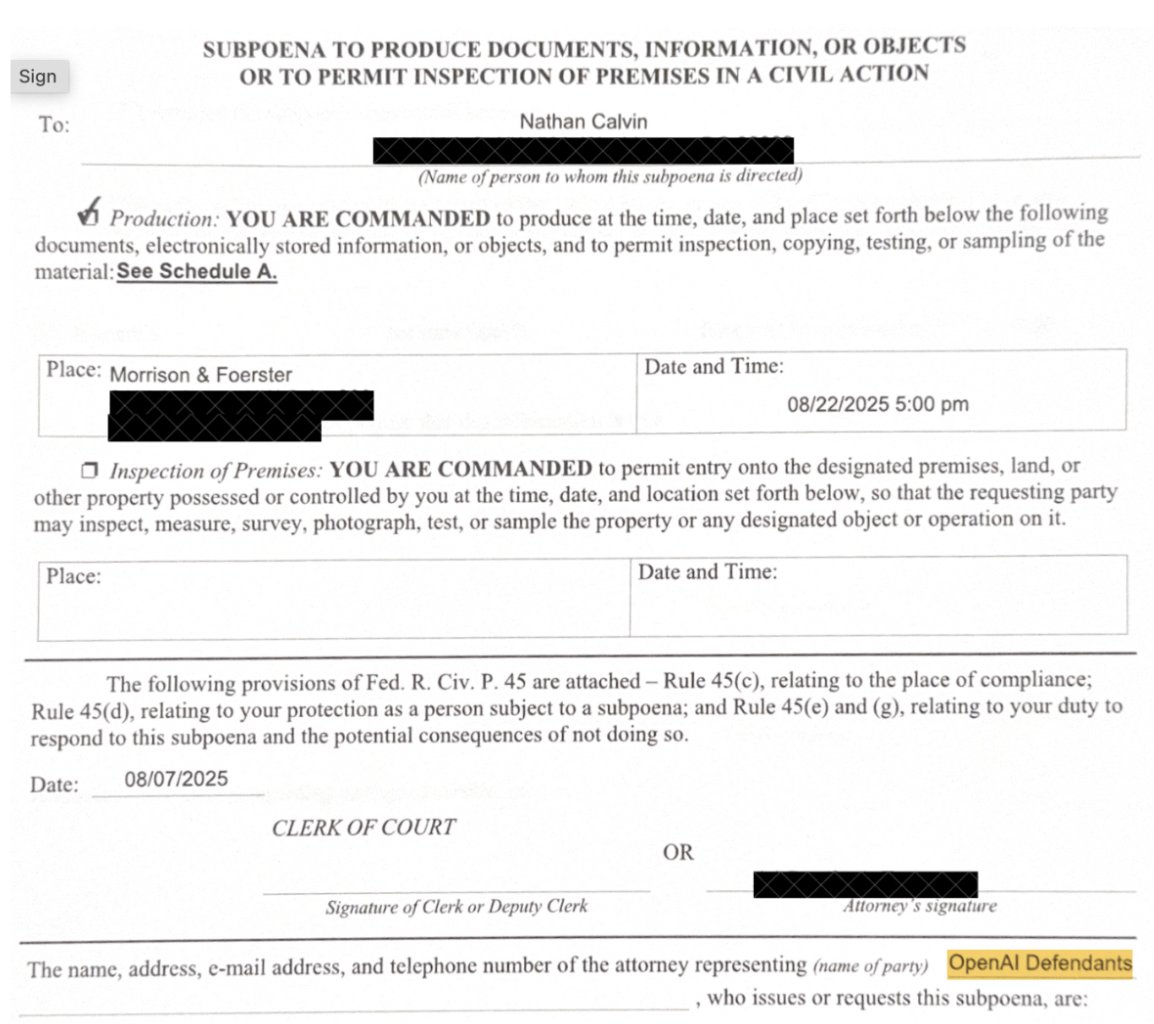

thrilled to introduce my and @timhwang's new latent social archetype generalization and narrative alignment eval more cooking

@ShakeelHashim We did say that last one in industrial policy for the intelligence age before

🚨Scoop: OpenAI has changed its tune on the controversial Illinois bill SB 3444. "We want to be very clear: we do not support the liability safe harbor included in SB 3444," OpenAI's Caitlin Niedermeyer said in written testimony to the Illinois Senate this week shared with @ReadTransformer.

As @ZeffMax reported last month, OpenAI faced heavy criticism for supporting the bill, which would have given AI companies a liability shield in exchange for light transparency measures. @CharlieBull0ck called it a contender for “worst state AI bill of all time.”

In addition to disavowing the controversial SB 3444 clause, this week OpenAI endorsed another bill in Illinois, SB 315, saying that it supports its third-party auditing provisions. It also said that "the CAISI – in partnership with national security agencies – is well positioned to develop auditing standards," which I don't think it's said before.

Q: On artificial intelligence, what did you get done with President Xi? TRUMP: We talked about possibly working together for guardrails. We probably will work together. We discussed almost everything you could discuss except for a reduction of tariffs.

OpenAI would support the creation of a global governance body for artificial intelligence led by the U.S. and including China as a member, a top company executive said, hours before the start of President... claimsjournal.com/news/national/…

Three weeks into the Musk v Altman trial, it feels like every stakeholder is somehow losing in the court of public opinion. A jury is now tasked with deciding a verdict, and will start deliberations next week.

“Being pro-AI does not require being pro-recklessness,” senior fellow @KevinTFrazier reminds us. Read his full essay on Civitas Outlook: civitasoutlook.pulse.ly/w27d7gfyfk

This letter I wrote about yesterday now has 35 signatories: Scoop: Lawmakers press White House to act on AI cyber threats axios.com/2026/05/13/con…

News: Talks between @RepLoriTrahan and @JayObernolte over AI bill are "going really well." Mythos changed political calculus and they're hoping for a deal to pass this Congress, they said. Obernolte is hoping for intro within the next several days. w/ @BenBrodyDC punchbowl.news/article/tech/o…