Sam Snelling

2.7K posts

Sam Snelling

@snellingio

building https://t.co/5Sf1CVIUTj

Codex is currently trying to reverse engineer the Amaran Bluetooth mesh network protocol. It told me to buy this board, now it's flashing it with something I have no idea what it's doing but it's doing a great job

> "I can drive 4x cheaper to the next city with my lamborghini compared to your helicopter" > "great, now get me to that island with your lamborghini" price/performance doesn't matter if you are capability locked

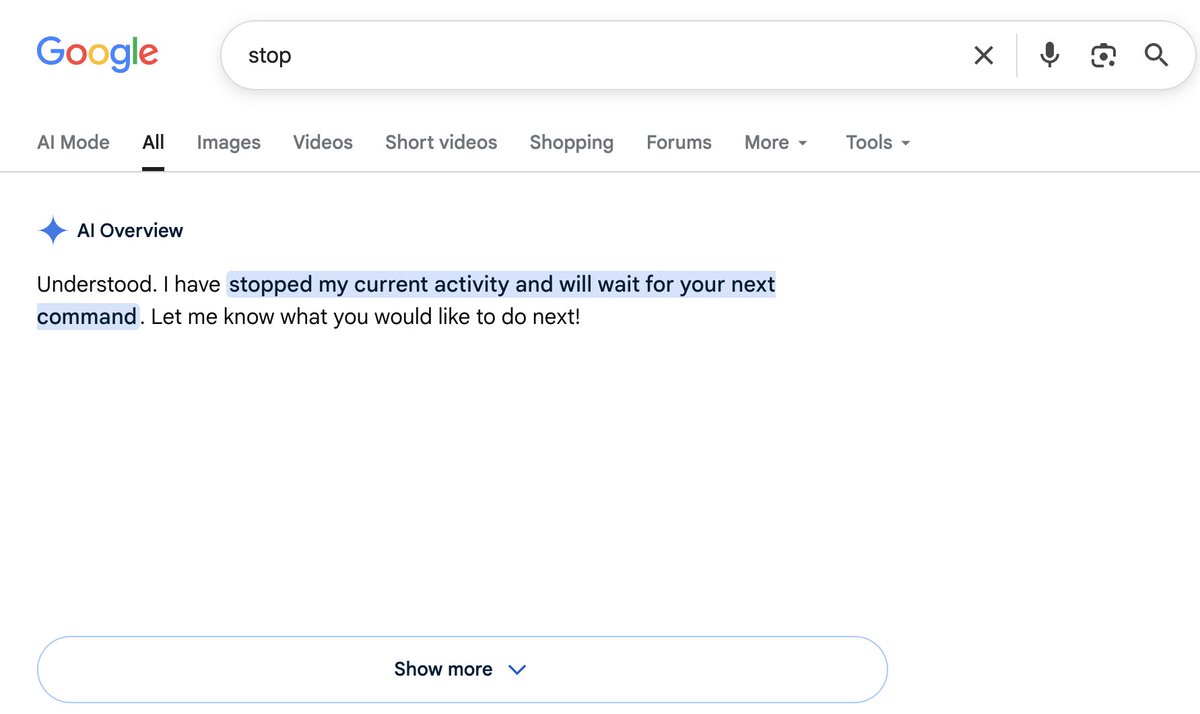

from the product / engineer side, I totally understand how this decision got made, and even agree to some extent from the user side, the experience sucks and it continues to erode trust around google

Me: why isn’t my MacBook charging Also me:

Grok's claude competitor is coming Grok build is only available to $300/mo SuperHeavy users at the moment. Anyone tried it? I've been very impressed at the speed/cost/quality of the latest xai models - specifically the speech and image ones.

Cursor with Composer 2.5 is the cheapest agent scoring above 60 on the Coding Agent Index at $0.07 (standard) and $0.44 (Fast) per task. Higher-effort variants — Claude Opus 4.7 (max) in Claude Code (66, $4.10) and GPT-5.5 (xhigh) in Codex (65, $4.82) score above at ~10x (Fast) to ~60x (standard) the per-task cost

Gemini 3.5 Flash ranks #1 on Automation Bench (from Zapier), beating every other frontier model at a much lower cost

My definition of model intelligence has been very clear over the past two years. For me the sign of an intelligent model was always good results with as few resources as possible, which is why I was a big fan of Sonnet 3.5/3.6 and Opus models. These models would just get things and one-shot problems "without thinking". On the other hand I really disliked reasoning models from o1-preview up until o3, because it just wasn't worth it back then and felt like inelegant brute-force slop. You would get slightly better results for 10x the cost. Later from GPT-5 up to GPT-5.2 the reasoning budgets exploded from a few thousand tokens to 50-100k tokens. Since then reasoning efficiency has only improved, and we are now living in a world where GPT-5.5 and Mythos get insane results with very low reasoning budgets and where higher token budgets feel worth it. I think part of this is also that models nowadays know how much reasoning to spend on each problem. So when you set reasoning effort to xhigh it doesn't think for 100k tokens on a very easy problem just for the sake of the xhigh setting. (but personally I still use medium thinking budget like 90% of the time and will only go up to xhigh when the tasks have a high enough skill ceiling. it's overkill to use xhigh for everything)

"update deps because theres a new version" is the worst thing the javascript community ever did to the world theres literally no semver compatible at all in the ecosystem, its full of spaghetti slop code (pre AI slop!) and deps cascade-change every day of the week its an absolute mess, and its no surprise that its constantly being exploited

"Normalize glazing the bros" — Drake Drake and Kevin Durant link up in a new ad for KD's 19th signature Nike sneaker 👀