spanlens

57 posts

@spanlens

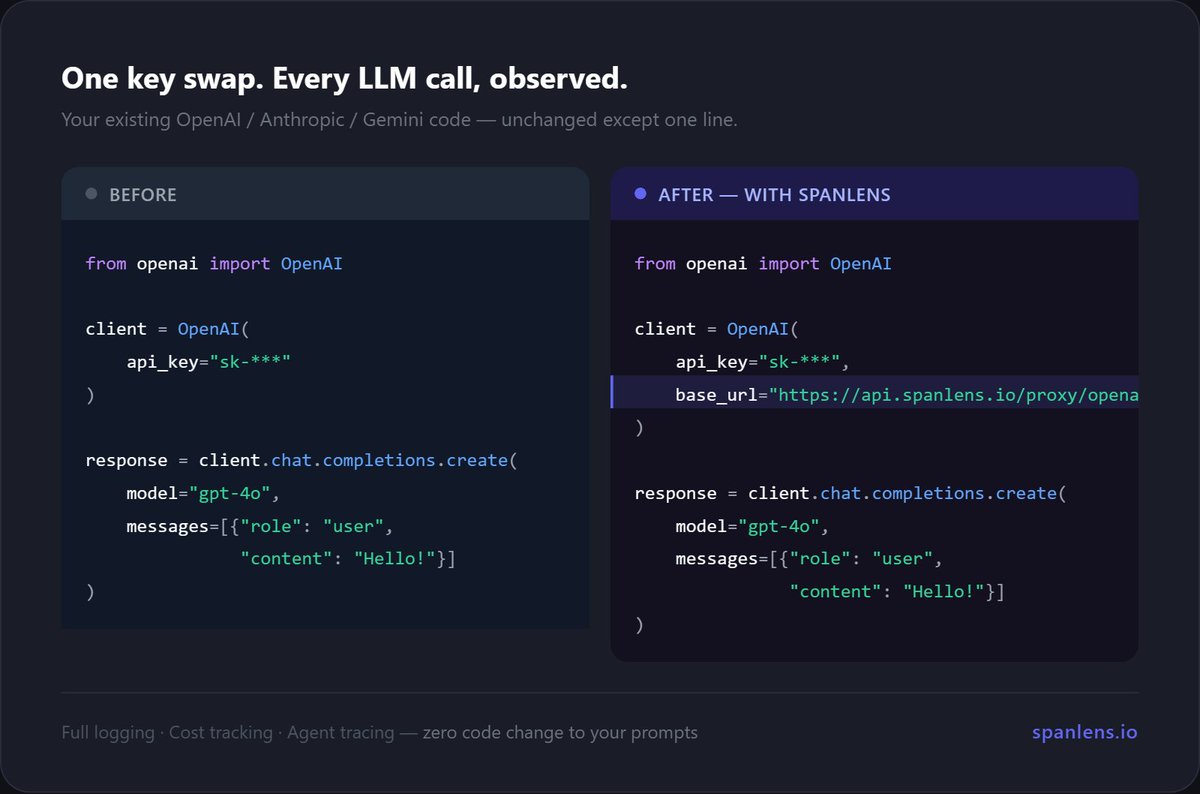

Stop guessing why your LLM bill tripled. 2-line install → costs, latency, traces & PII scan. Open-source · Free to start 🚀 PH Launch: June 3 → https://t.co/AaDhs7OJd1

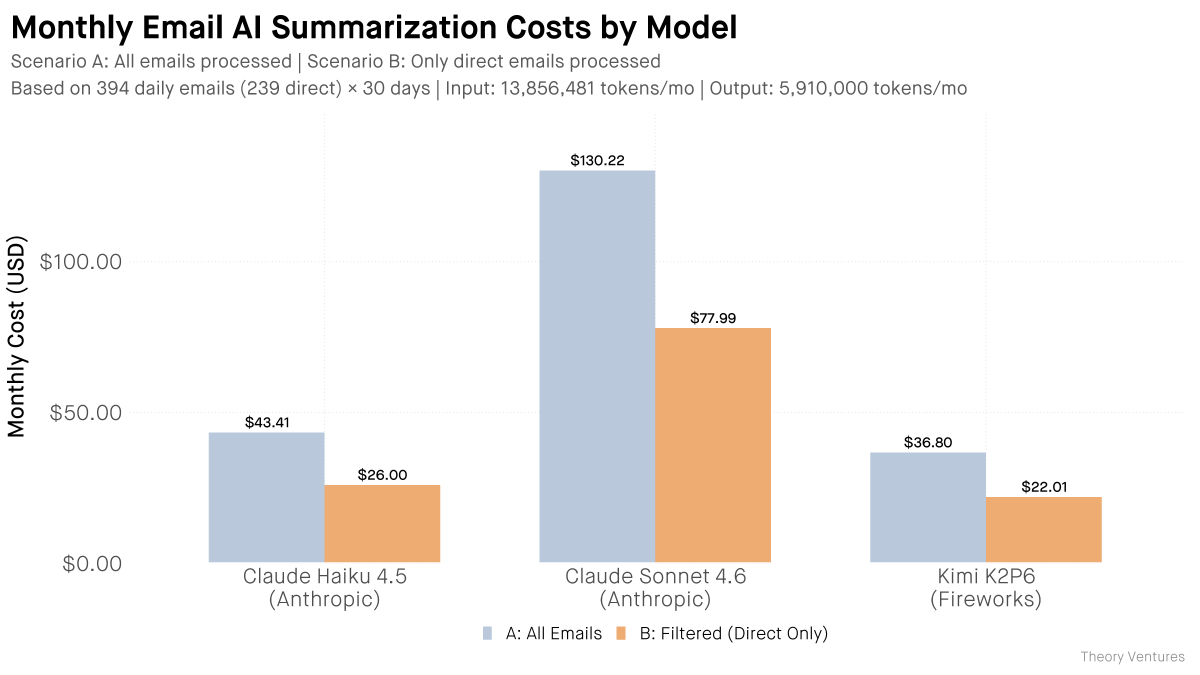

Monthly credit amounts vary by plan:
- Pro: $20
- Max 5x: $100
- Max 20x: $200
- Team Standard: $20/seat
- Team Premium: $100/seat
- Enterprise: Varies by seat type

After you claim the credit, it resets with each billing cycle. Credits do not roll over.

You've been asking for this one... Now in preview: Codex in the ChatGPT mobile app. Start new work, review outputs, steer execution, and approve next steps, all from the ChatGPT mobile app. Codex will keep running on your laptop, Mac mini, or devbox.

Very important update from UK AISI. This is a meaningful change from the previous report. Here’s what the new data would look like for “Mythos Preview (new)” with $ on the x-axis:

It isn't unexpected that the focus of the Bun Rust rewrite is on the anti-Zig side more than anything, since the internet loves to hate. What is unexpected and unfortunate is that leadership within Bun hasn't tried to steer the conversation away from that at all. There are so many positive and interesting takeaways from this and I'm not really seeing any of them pushed as the primary message.
A positive thing that hasn't been talked about at all is how far Bun came thanks to Zig. And even if you dump it now, it's meaningful that Zig was good enough to build a product to this point and impact by any metric. I would've loved to see anyone in leadership say this.
On the interesting side is how fungible programming languages are nowadays. Programming languages used to be LOCK IN, and they're increasingly not so. You think the Bun rewrite in Rust is good for Rust? Bun has shown they can be in probably any language they want in roughly a week or two. Rust is expendable. It's useful until it's not, then it can be thrown out. That's interesting!
There's been a lot of talk about memory safety and no doubt Rust provides more guarantees than Zig. But I'd love to see a better analysis of why Bun in particular suffered so much rather than take the language-blame path. How could engineering as a practice have been more rigorous to prevent this? What were the largest sources of crashes other programs should watch out for? How does Rust prevent them? How could Zig theoretically prevent them? That's interesting.
I know the official blog post hasn't come out yet from Bun. But they're smart enough to know that that PR would stir up controversy the moment it opened, or they should've been. And plenty in the company have been tweeting and writing about it. It's somewhat telling to me in various dimensions what they chose to talk about first.
I tend to think I'm pretty good at corporate PR/comms (especially when it comes to developer audiences) and I think appealing to the negative is never the right long-term strategy; it does work to get short-term eyes though.

To bring Codex to Windows, we had to answer a hard question: how do you let coding agents stay useful without forcing developers to choose between constant approval prompts and full machine access? Here’s how we built the Windows sandbox for Codex: openai.com/index/building…

To add some clarity: you don't pay extra. It's the same subscription, same price per month. What's new: our sub now covers two separate pools:
· Interactive → sub limits, unchanged
· Programmatic → new $20–$200 included(!!) credit, metered at API rates

Starting June 15, paid Claude plans can claim a dedicated monthly credit for programmatic usage. The credit covers usage of:
- Claude Agent SDK
- claude -p
- Claude Code GitHub Actions
- Third-party apps built on the Agent SDK