Sergey Pozdnyakov retweeted

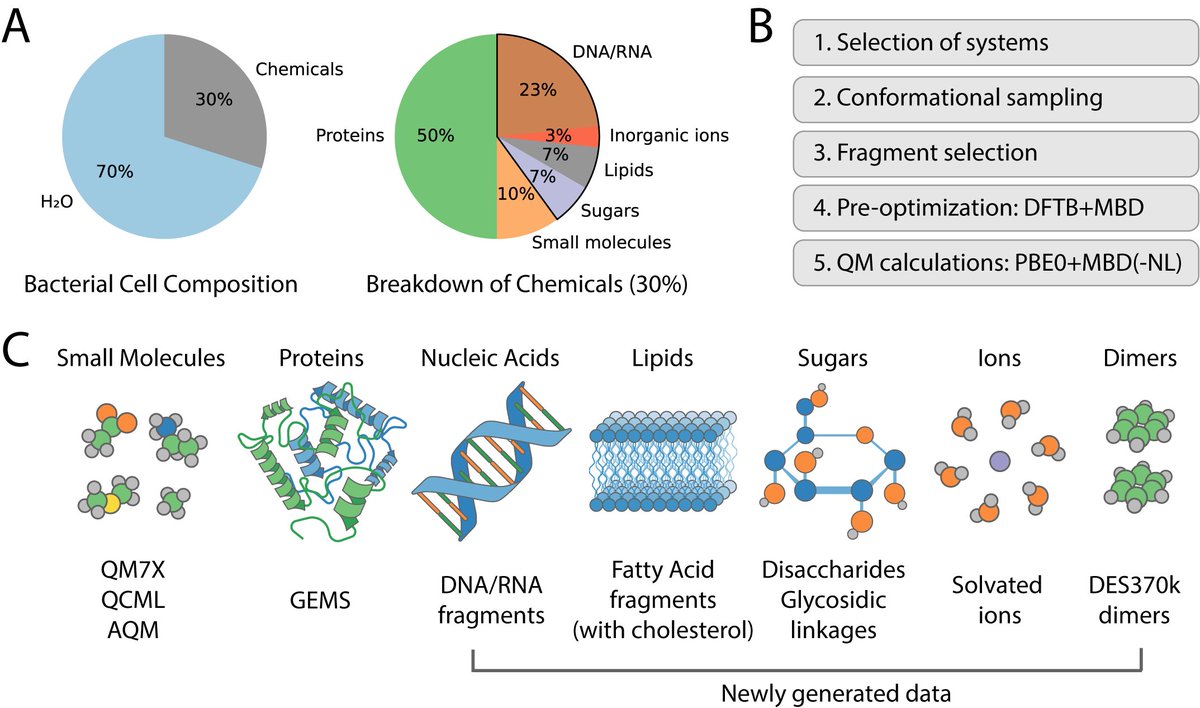

💫 As promised, we just released on GitHub the weights of the #FeNNixBio1 foundation machine learning model for drug design! 💫

Weights: github.com/FeNNol-tools/F…

FeNNol GPU code: github.com/FeNNol-tools/F…

The models are distributed under the open source ASL license (i.e., restricted to non-commercial academic research).

You can also check the updated version of the preprint, which includes a unified transformer architecture as well as the full computation of the FreeSolv hydration free energies dataset, etc.

doi.org/10.26434/chemr…

Happy holidays and merry Christmas everyone! 🎅 🎄

Sorbonne Université / CNRS

@qubit_pharma

#machinelearning #moleculardynamics #drugdesign #compchem #GPU #biophysics
