Quinn Slack

12.1K posts

@sqs

CEO & Member of Technical Staff @ampcode, founded @sourcegraph

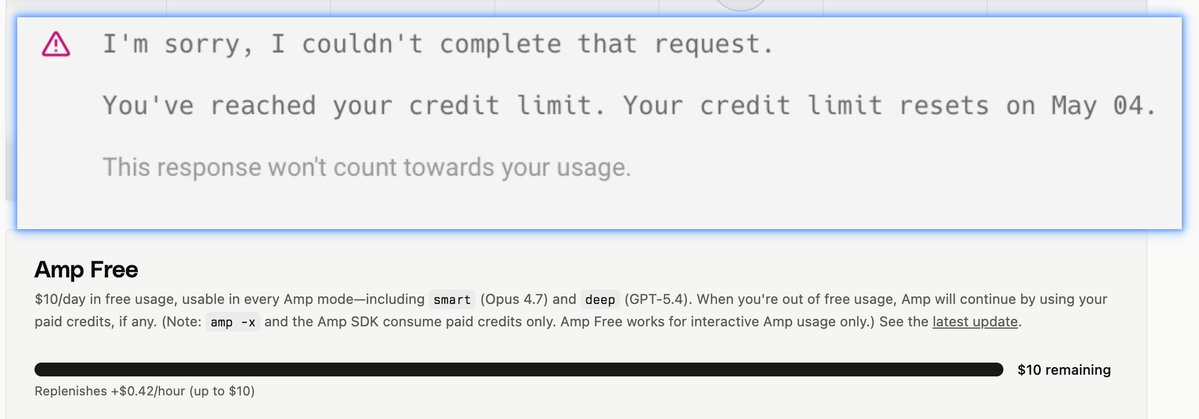

You can use OpenAI GPT-5.5 in Amp's deep mode with this in ~/.config/amp/settings.json:

{ "amp.internal.model": { "deep": "openai:gpt-5.5" } }

Will be the default soon. Why not yet? Because...

For now, usage of GPT-5.5 uses OpenAI's Safety Retention Policy, which means non-zero data retention by OpenAI in the (~0.05%) case of inputs that OpenAI's classifier identifies as severe cybersecurity abuse risk. We're working with OpenAI to lift this requirement, which as far as we know applies to use of GPT-5.5 in other agents as well.

(Has anyone seen this disclosure from other agents?)
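If settings.json already holds other keys, pasting the snippet over the file would drop them. Here is a minimal Python sketch of merging the override in instead. The function name `enable_gpt55_deep_mode` is hypothetical, and the sketch assumes the file is plain JSON (no comments or trailing commas) and that any existing "amp.internal.model" value is an object; neither assumption is confirmed by the post.

```python
import json
from pathlib import Path


def enable_gpt55_deep_mode(settings_path: Path) -> dict:
    """Merge the deep-mode model override into a settings file (hypothetical helper)."""
    settings = {}
    if settings_path.exists():
        text = settings_path.read_text(encoding="utf-8")
        if text.strip():
            # Assumption: the file is strict JSON; json.loads raises otherwise.
            settings = json.loads(text)

    # setdefault keeps any other entries already stored under this key.
    model = settings.setdefault("amp.internal.model", {})
    model["deep"] = "openai:gpt-5.5"

    # Create ~/.config/amp (or whatever parent was given) if it is missing.
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2) + "\n", encoding="utf-8")
    return settings


# Example call against the path named in the post (left commented out on purpose):
# enable_gpt55_deep_mode(Path.home() / ".config" / "amp" / "settings.json")
```

Taking the path as a parameter makes it easy to try the merge on a copy of the file before touching the real one.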

More people would be pro datacenter if every one had a beautiful open-to-the-public heated pool.

Trying out AmpCode and Factory today. My requirement: needs to be at least 5-10x better than Codex for me to actually make the switch