stableAPY.hl

@stableAPY

Building HyperFolio - HyperEVM Portfolio Tracker

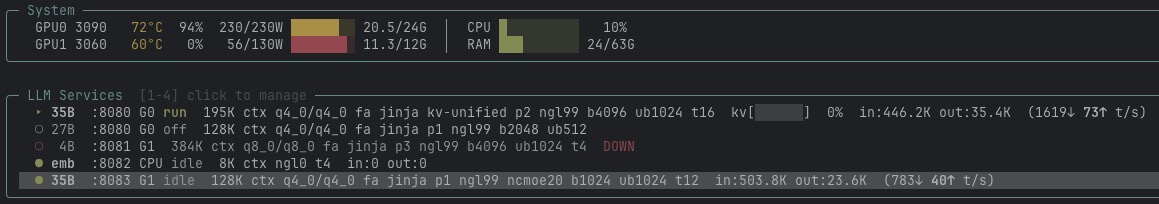

After testing out Qwen 3.6 35B on my 3060, it's Gemma 4 26B A4B UD-IQ4_XS's turn. Sadly, it seems to require more CPU offloading than Qwen, leading to lower prefill and decode speeds. While it felt counterintuitive, it's actually pretty logical: even though Gemma is 26B vs 35B for Qwen, its 4B active parameters (vs 3B for Qwen) lead to higher CPU offload. I'll still try it out to see how good the model is. I've been hyperfocused on Qwen, and it's time to see what other labs have to offer.
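A rough back-of-the-envelope sketch of why active parameters can matter more than total size once weights spill to the CPU. It assumes decode is bound by reading the CPU-resident share of the active weights from system RAM once per token. The bits per weight, CPU share, and RAM bandwidth below are illustrative guesses, not measurements from this setup, and the function name is hypothetical.

```python
def decode_upper_bound_tok_s(active_params_b, bits_per_weight, cpu_fraction, ram_bandwidth_gb_s):
    """Rough ceiling on decode speed (tokens/s) in a bandwidth-bound model.

    Simplification: every generated token reads the CPU-resident part of the
    active weights from system RAM exactly once; GPU time and caches are ignored.
    """
    bytes_per_token = active_params_b * 1e9 * (bits_per_weight / 8) * cpu_fraction
    return ram_bandwidth_gb_s * 1e9 / bytes_per_token


# All three values are assumptions for illustration only.
BITS_PER_WEIGHT = 4.25      # assumed average for an IQ4_XS-style quant
CPU_FRACTION = 0.8          # assumed share of active weights living in system RAM
RAM_BANDWIDTH_GB_S = 40.0   # assumed usable dual-channel RAM bandwidth

# Active parameter counts taken from the model names (A3B, A4B).
qwen = decode_upper_bound_tok_s(3.0, BITS_PER_WEIGHT, CPU_FRACTION, RAM_BANDWIDTH_GB_S)
gemma = decode_upper_bound_tok_s(4.0, BITS_PER_WEIGHT, CPU_FRACTION, RAM_BANDWIDTH_GB_S)

print(f"A3B ceiling: {qwen:.1f} tok/s")
print(f"A4B ceiling: {gemma:.1f} tok/s")
print(f"A3B / A4B ratio: {qwen / gemma:.2f}")
```

Under these assumptions the ceiling scales inversely with active parameters, so the A3B model comes out ahead regardless of total parameter count. Real numbers depend on which tensors actually end up on the CPU.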

Small GPU owners, fellow 3060 users, 8 or 12GB VRAM chads: if you're using llama.cpp, you should check out ik_llama.cpp. It gives about 10% better performance. At 128k total context, running Qwen3.6-35B-A3B IQ4_XS on a 3060 12GB, 31.7 tok/s at 50% of context is not that bad for a subagent in a local Hermes setup. github.com/ikawrakow/ik_l…

Coinbase has announced its plan to activate AQAv2 on USDC as the treasury deployer, with Circle serving as the technical deployer responsible for CCTP and native cross-chain infrastructure. Both Coinbase and Circle have committed to stake HYPE to activate AQAv2. As part of this transition, Native Markets has agreed to terms granting Coinbase the right to purchase the USDH brand assets.

With Coinbase, in its role as treasury deployer, sharing the vast majority of reserve yield revenue with the protocol, USDC will become the most aligned stablecoin on Hyperliquid. As a result, canonical outcome (HIP-4) markets will use USDC as the quote asset in a future network upgrade. User and builder feedback has been consistent that fragmentation leads to degraded experience; now, the community no longer needs to choose between liquidity and protocol alignment.

The pioneering work of Native Markets in launching USDH as the first production-scale stablecoin sharing yield directly with a protocol in a purely onchain implementation made AQAv2 possible. The learnings and mechanics pioneered by USDH will live on in AQAv2.

The Hyper Foundation will give grants to eligible HIP-3 deployers, HIP-1 deployers, and builders who integrated USDH, supporting teams through migration over the next months. These grants reflect an ongoing commitment to teams who choose to build on Hyperliquid and align with the protocol.

USDH markets are fully functional but will sunset over time. USDH remains fully backed, with feeless conversions to USDC and fiat available to users during this transition.