Stanford Human-Computer Interaction Group

798 posts

@StanfordHCI

Updates about Stanford's HCI Group. Account run by Helena Vasconcelos. Visit https://t.co/JJy8usEAWa for more!

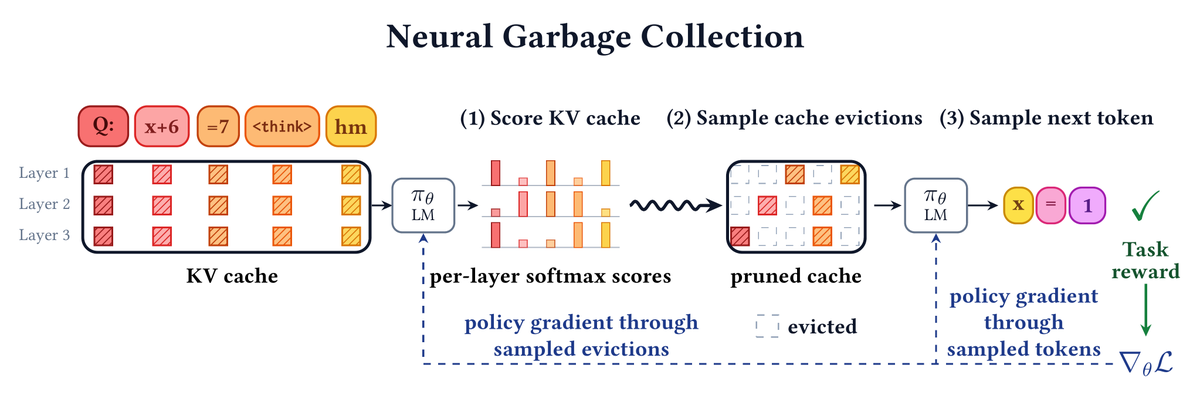

Can a language model learn, end-to-end, what to keep in its own KV cache and what to throw away? Can it learn to forget while it learns to reason? Deep learning's central lesson: capability emerges from end-to-end optimization, not heuristics/strong inductive biases. But for efficiency, we rely heavily on hand-designed approaches. 🗑️ Introducing Neural Garbage Collection (NGC): we train a language model to jointly reason and manage its own KV cache, using reinforcement learning with outcome-based task reward alone. No SFT, no proxy objectives, no summarization in natural language. New paper with @jubayer_hamid, Emily Fox, and @noahdgoodman!

Last week, we released a preview of memories in Codex. Today, we’re expanding the experiment with Chronicle, which improves memories using recent screen context. Now, Codex can help with what you’ve been working on without you restating context.

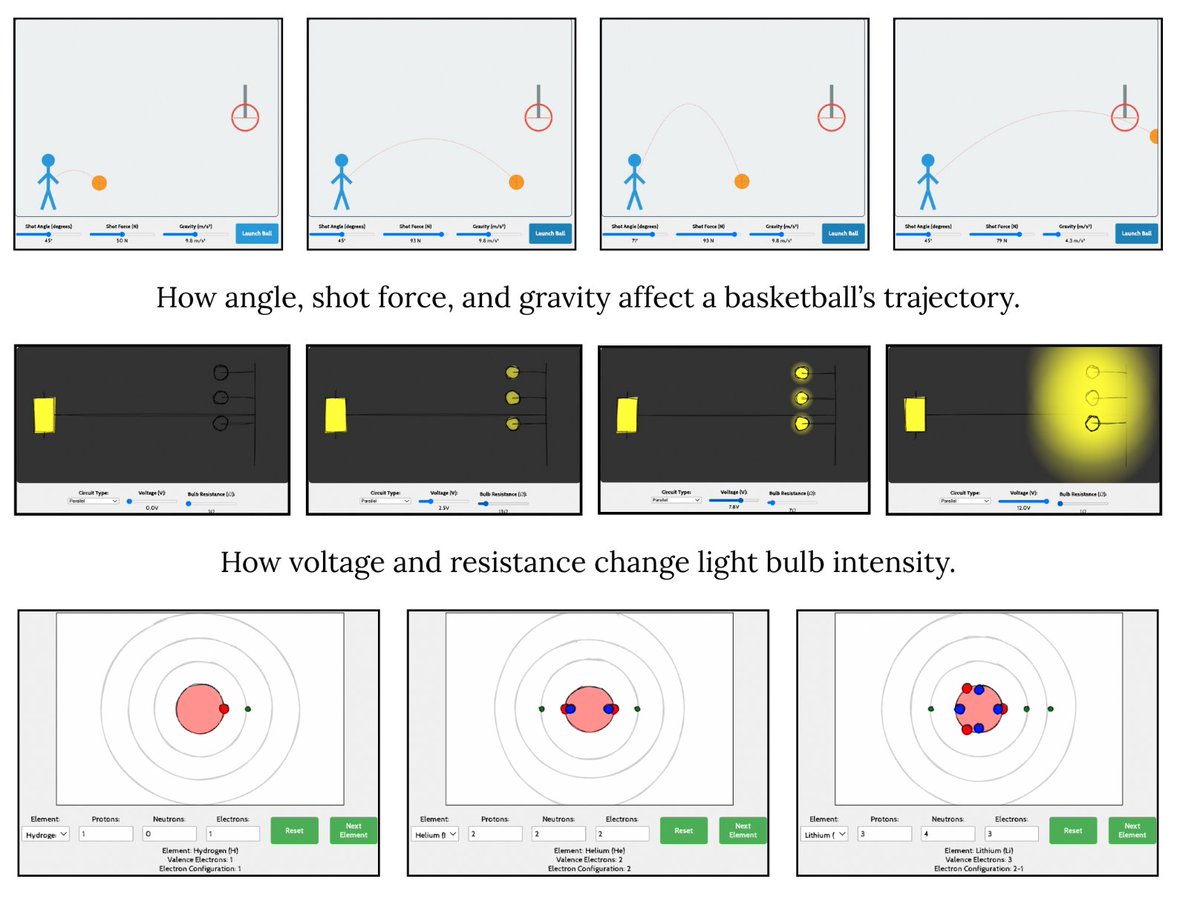

Once you have JIT objectives, you can embed them into various LLM architectures via existing generators and evaluators. Evaluations on N=205 participant-provided inputs show that JIT objectives produce user-preferred outputs, whether generating experts, tools, or feedback.

Most of what I actually need help with, I never think to tell a model. But why is it on me to remember? Our new paper asks: what if AI could proactively specialize to individuals and the tasks they’re carrying out at this very moment? 🧵

I’m excited to share that Bloom, where we ran a four-week study on LLM health coaching, just won a Best Paper Award at CHI! 🏆 Paper: arxiv.org/abs/2510.05449 Website: stanfordhci.github.io/Bloom Interest form: forms.gle/JzEHgpLarJ6qc7… Come see my talk! programs.sigchi.org/chi/2026/progr… [1/11]

AI always calling your ideas “fantastic” can feel inauthentic, but what are sycophancy’s deeper harms? We find that in the common use case of seeking AI advice on interpersonal situations—specifically conflicts—sycophancy makes people feel more right & less willing to apologize.