Yaofang Liu

@stephenajason

Ph.D. Candidate, CityUHK, intern at Noah's Ark Lab, Prev. Tencent AI Lab, visiting at CambridgeU, working on diffusion models, video generation, and multimodal

I made a Claude Code skill that generates conference posters 🛠️ Instead of a static PDF, it outputs a single HTML file — drag to resize columns, swap sections, adjust fonts, then give your layout back to Claude. 🔁 🔗 Skill 👉 github.com/ethanweber/pos…

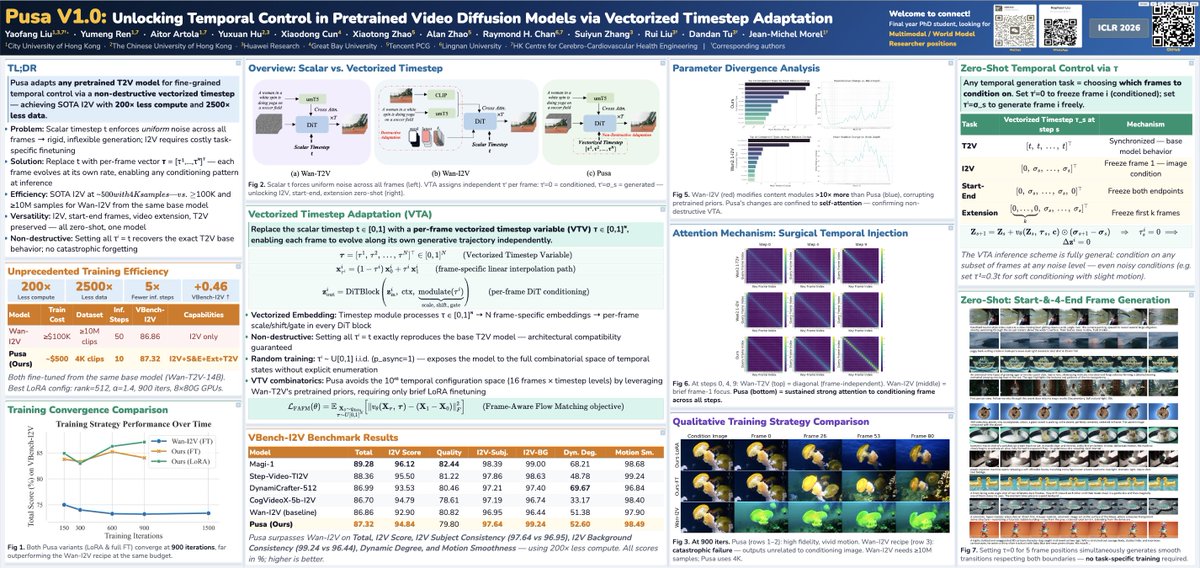

🚀 Pusa V1.0 Release

Can you believe training a SOTA-level Image-to-Video model for only $500 in training cost? No way? But yes, we made it! And we achieved much more beyond that.

We're thrilled to release Pusa V1.0, a paradigm shift in video generation that redefines video diffusion efficiency, powered by our novel Vectorized Timestep Adaptation (VTA), which builds on our prior FVDM work.

🔥 Key Features:

✅ Unprecedented Efficiency:
- Surpasses Wan-I2V-14B with ≤ 1/200 of the training cost ($500 vs. ≥ $100,000)
- Trained on a dataset ≤ 1/2500 of the size (4K vs. ≥ 10M samples)
- Achieves a VBench-I2V score of 87.32% with 10 inference steps (vs. 86.86% for Wan-I2V-14B with 50 steps)

✅ Comprehensive Multi-task Support: VTA fully preserves Text-to-Video from the base model Wan-T2V, and after finetuning, Pusa V1.0 extends to all of the following in a zero-shot way (no task-specific training):
- Image-to-Video
- Start-End Frames
- Video completion/transitions
- Video Extension
- And more...

✅ Complete Open-Source Release:
- Full codebase and training/inference scripts
- Model weights and dataset for Pusa V1.0
- Paper/Tech Report with detailed and comprehensive methodology

💡 Scientific breakthrough: VTA enables granular temporal control via frame-level noise adaptation, with no task-specific training needed.

🌍 Fully open-sourced:
• Codebase: github.com/Yaofang-Liu/Pu…
• Project Page: yaofang-liu.github.io/Pusa_Web/
• Technical report: github.com/Yaofang-Liu/Pu…
• Model weights: huggingface.co/RaphaelLiu/Pus…
• Dataset: huggingface.co/datasets/Rapha…

[1/n]

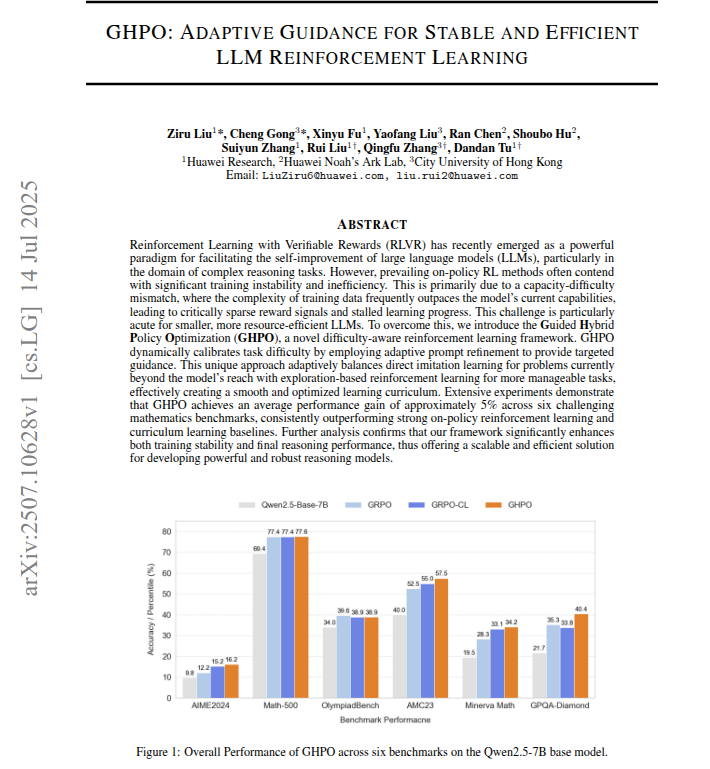
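To make "frame-level noise adaptation" concrete, here is a minimal sketch of what a vectorized timestep can mean: instead of one scalar timestep shared by the whole clip, every frame gets its own. It assumes a flow-matching-style interpolation x_t = (1 - t) * x_0 + t * eps applied independently per frame, and a frames-first latent layout; both are assumptions for illustration, and the function name `noise_frames` is hypothetical, not part of the Pusa codebase. See the technical report for the actual formulation.

```python
import numpy as np

def noise_frames(x0: np.ndarray, t: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Noise every frame of a latent clip with its own timestep.

    x0: clean latents, shape (F, C, H, W)  (frames-first layout is an assumption)
    t:  per-frame timesteps in [0, 1], shape (F,); 0 = clean, 1 = pure noise
    """
    if t.shape != (x0.shape[0],):
        raise ValueError("expected exactly one timestep per frame")
    eps = rng.standard_normal(x0.shape)
    # Reshape (F,) -> (F, 1, 1, 1) so each frame's t broadcasts over C, H, W.
    t_b = t.reshape(-1, 1, 1, 1)
    # Assumed flow-matching-style interpolation, applied frame by frame.
    return (1.0 - t_b) * x0 + t_b * eps

rng = np.random.default_rng(0)
F = 8
x0 = rng.standard_normal((F, 4, 16, 16))

# A scalar timestep is the special case where all entries of t are equal.
t_shared = np.full(F, 0.7)

# Image-to-Video: keep the first frame clean (t = 0) and noise the rest.
t_i2v = t_shared.copy()
t_i2v[0] = 0.0

# Start-End Frames: keep both the first and the last frame clean.
t_start_end = t_shared.copy()
t_start_end[[0, -1]] = 0.0

for name, t in [("shared", t_shared), ("i2v", t_i2v), ("start_end", t_start_end)]:
    xt = noise_frames(x0, t, rng)
    clean = [i for i in range(F) if np.allclose(xt[i], x0[i])]
    print(name, "clean frames:", clean)
# shared clean frames: []
# i2v clean frames: [0]
# start_end clean frames: [0, 7]
```

Under this reading, the zero-shot tasks listed above differ only in which frames are pinned at t = 0, which is why a single finetuned model can cover them without task-specific training.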

SFT + RLVR: Addressing Reward Sparsity through Hybrid Learning

🙀 Ever wonder why LLMs get stuck during RLVR training? It's often a capacity-difficulty mismatch: the training data is too hard for the model, leading to reward sparsity and zero progress. This is especially painful for smaller LLMs!

Well, we've cracked it! 🎉 Say hello to GHPO, a groundbreaking RLVR framework that combines SFT and online RL. It's a difficulty-aware system designed to tackle this exact problem head-on!

🤖 GHPO is smart. It dynamically adjusts task difficulty using adaptive prompt refinement, offering precise guidance. It knows when to use direct imitation learning for challenging problems (where the model needs a boost!) and when to switch to exploration-based RL for tasks it can handle. The result? A smooth, optimized learning journey that completely avoids reward sparsity!

✨ Key Highlights:
- Automated & Adaptive: Automatically detects how hard a problem is and blends on-policy RL with guided imitation learning via prompt refinement.
- Big Wins: Our experiments show GHPO delivers a 5% average performance gain over GRPO on six math benchmarks!

🧑💻 Built on Open R1 #openr1, whose trainer is based on TRL #TRL. We really appreciate the great work from the Hugging Face teams. @QGallouedec We even rolled out a custom 'GHPOTrainer' to make things even smoother.

👇🔗 Check it out!
Paper: arxiv.org/abs/2507.10628
GitHub: github.com/hkgc-1/GHPO
Dataset: huggingface.co/datasets/hkgc/…

#artificialintelligence #TRL #huggingface #openr1 #RLVR #MachineLearning #DeepLearning #LLM #PhD [1/N]
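Why does a too-hard problem stall training? In GRPO, each sampled answer's advantage is its reward normalized against the other answers for the same prompt, so if every rollout scores 0, every advantage is 0 and the prompt contributes no gradient. The sketch below shows that failure mode and one assumed way a difficulty-aware switch could respond: when a group gets all-zero rewards, append part of the reference solution to the prompt as a hint. The rule "all rollouts wrong means guide", the fixed `hint_ratio`, and the helper names `refine_prompt` and `choose_prompt` are hypothetical illustrations, not the `GHPOTrainer` API; see the paper for GHPO's actual refinement schedule.

```python
from statistics import mean, pstdev

def group_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style group-relative advantages: (r - mean) / (std + eps)."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

def refine_prompt(question: str, solution: str, hint_ratio: float) -> str:
    """Hypothetical refinement: append the first `hint_ratio` share of the
    reference solution (by whitespace-separated tokens) as a hint."""
    tokens = solution.split()
    hint = " ".join(tokens[: int(len(tokens) * hint_ratio)])
    return f"{question}\nHint (partial solution): {hint}"

def choose_prompt(question: str, solution: str, rewards: list[float],
                  hint_ratio: float = 0.25) -> tuple[str, str]:
    """Assumed difficulty-aware switch: if every rollout failed, the group
    gives no learning signal, so add guidance; otherwise keep plain RL."""
    if all(r == 0 for r in rewards):
        return refine_prompt(question, solution, hint_ratio), "guided"
    return question, "rl"

question = "What is 17 * 24?"
solution = "17 * 24 = 17 * 20 + 17 * 4 = 340 + 68 = 408"

hard = [0, 0, 0, 0]   # every sampled answer wrong
mixed = [1, 0, 1, 0]  # some answers right

print(group_advantages(hard))                       # [0.0, 0.0, 0.0, 0.0]
print(choose_prompt(question, solution, hard)[1])   # guided
print(choose_prompt(question, solution, mixed)[1])  # rl
```

A fixed hint ratio keeps the sketch short; a real system would more likely grow the hint only as far as needed for some rollouts to succeed, so that the guided prompt is hard enough to stay informative but easy enough to yield nonzero advantages.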