stonecobra

8.3K posts

@stonecobra

AI Architect and Engineer

@zachglabman Great article. As a novice looking for a mid-career change, any advice? Would computer-machine learning be an avenue for entering the sweet spot between tech and manufacturing, or is it going to get washed away by AI?

Microservices are what happens when your organization has communication problems and you decide the architecture should have them too.

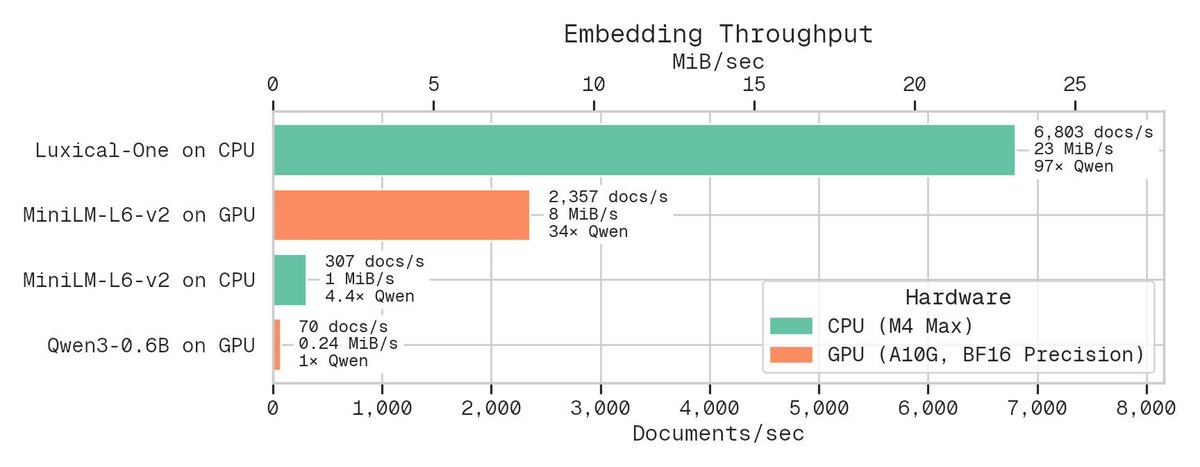

Just dropped a new text embedding methodology. Fast as heck on CPU only and still great for document similarity analysis, clustering, and classification. How? Use a tiny ReLU network to approximate a big transformer from lexical (term frequency / bag of words) features.
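The idea in the post above can be sketched roughly as follows. This is a minimal illustration, not the author's implementation: the post gives no code, so `bag_of_words`, `teacher_embed`, `train_student`, and `embed` are hypothetical names, and `teacher_embed` is a fixed random projection standing in for a real transformer's embeddings. The student is a one-hidden-layer ReLU network trained by plain gradient descent to regress from term-frequency features onto the teacher's vectors.

```python
import numpy as np

def bag_of_words(docs, vocab):
    """Term-frequency matrix: one row per document, one column per vocab term."""
    index = {term: i for i, term in enumerate(vocab)}
    X = np.zeros((len(docs), len(vocab)))
    for row, doc in enumerate(docs):
        for token in doc.lower().split():
            if token in index:
                X[row, index[token]] += 1.0
    return X

def teacher_embed(X, dim=4, seed=42):
    """Hypothetical stand-in for a large transformer: a fixed random projection."""
    rng = np.random.default_rng(seed)
    return np.tanh(X @ rng.normal(size=(X.shape[1], dim)))

def embed(X, params):
    """Student forward pass: linear -> ReLU -> linear."""
    W1, b1, W2, b2 = params
    return np.maximum(X @ W1 + b1, 0.0) @ W2 + b2

def mse(X, T, params):
    """Mean over documents of the squared error summed over embedding dims."""
    return float(np.mean(np.sum((embed(X, params) - T) ** 2, axis=1)))

def train_student(X, T, hidden=16, lr=0.02, epochs=1000, seed=0):
    """Fit the tiny ReLU network to the teacher embeddings with gradient descent."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(0.0, 0.1, (X.shape[1], hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(0.0, 0.1, (hidden, T.shape[1]))
    b2 = np.zeros(T.shape[1])
    n = X.shape[0]
    for _ in range(epochs):
        pre = X @ W1 + b1                 # hidden pre-activations
        H = np.maximum(pre, 0.0)          # ReLU
        G = 2.0 * (H @ W2 + b2 - T) / n   # gradient of the loss w.r.t. the output
        GH = (G @ W2.T) * (pre > 0)       # backpropagate through the ReLU
        W2 -= lr * (H.T @ G)
        b2 -= lr * G.sum(axis=0)
        W1 -= lr * (X.T @ GH)
        b1 -= lr * GH.sum(axis=0)
    return W1, b1, W2, b2

docs = [
    "fast cpu text embedding",
    "document similarity and clustering",
    "tiny relu network on cpu",
    "document classification with text embedding",
]
vocab = sorted({token for doc in docs for token in doc.split()})
X = bag_of_words(docs, vocab)
T = teacher_embed(X)

untrained = train_student(X, T, epochs=0)  # initial weights only
trained = train_student(X, T)
print("loss before:", mse(X, T, untrained))
print("loss after: ", mse(X, T, trained))
```

At inference time only `bag_of_words` and `embed` run, which is why an approach like this can be cheap on CPU: there is no attention and no large weight matrix, just two small matrix multiplications per document.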

We let the Internet down today. Here’s our technical post mortem on what happened. On behalf of the entire @Cloudflare team, I’m sorry. blog.cloudflare.com/18-november-20…

i've been using cloudflare a lot more recently and realised most explanations of their primitives are way too complex so i made a simple primer: an introduction to cloudflare's primitives, starting with durable objects + kv momito.co.uk/cloudflare-pri…

Honestly, the US Navy should just become America's nuclear power provider.
US Navy cost: $2 billion for 2 x 400 MW reactors in a Ford-class aircraft carrier
NuScale: $10 bn for a 500 MW reactor
Westinghouse: ~$8 bn for a 1000 MW reactor
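To make the comparison in the post above concrete, here is a quick cost-per-megawatt calculation. It uses only the figures quoted in the post, which are not independently verified here; the Westinghouse number is given as approximate, and the post does not say whether the MW ratings are thermal or electric, so treat the result as a rough ratio rather than a like-for-like comparison.

```python
# Figures exactly as quoted in the post: (total cost in USD, total capacity in MW).
quoted = {
    "US Navy (2 x 400 MW)": (2e9, 2 * 400),
    "NuScale": (10e9, 500),
    "Westinghouse": (8e9, 1000),  # quoted as "~$8 bn", so approximate
}

# Cost per MW of capacity for each quoted project.
cost_per_mw = {name: cost / mw for name, (cost, mw) in quoted.items()}

for name, value in cost_per_mw.items():
    print(f"{name}: ${value / 1e6:.1f}M per MW")
```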

$5 PlanetScale releasing soon planetscale.com/blog/5-dollar-…