Superlinked

@superlinked

Self-hosted inference for search & document processing.

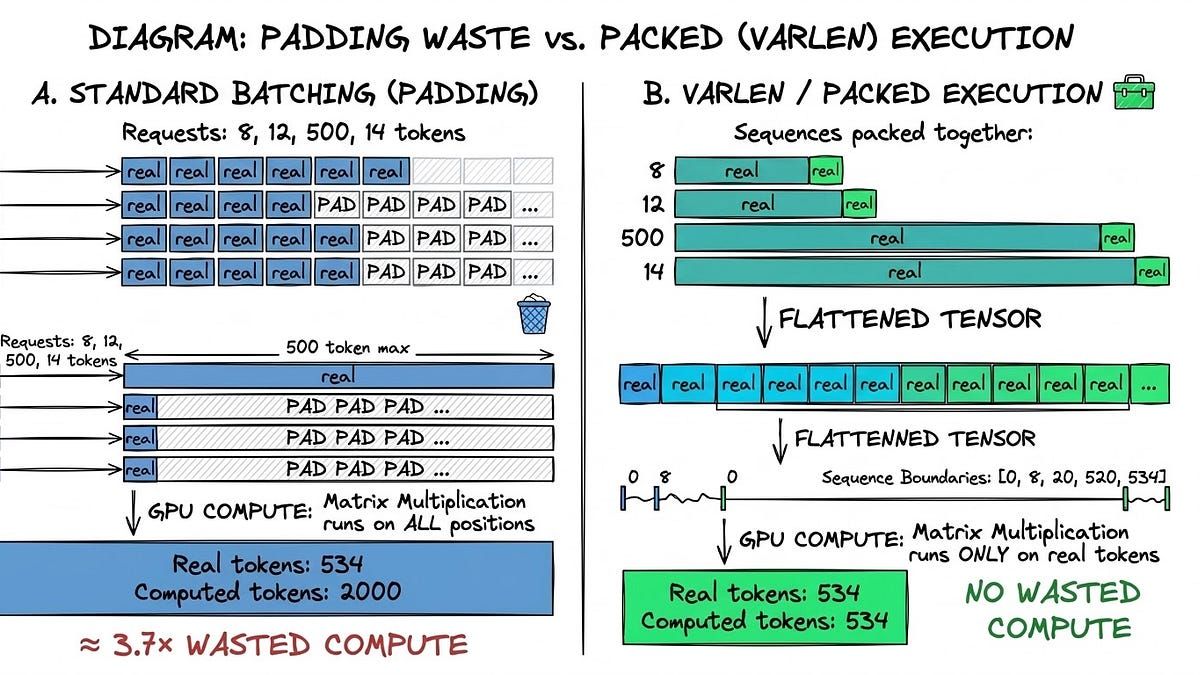

Most embedding infrastructure assumes you know exactly which model you want ahead of time. This talk starts where that assumption breaks.

@f_makraduli walks through the real profiling mistakes, infrastructure gaps, and production constraints that led to building an embedding inference engine designed for dynamic model loading, hot-swapping, and memory-aware eviction instead of brittle one-model-per-container deployments.

If you're working on small-model inference, embeddings, or GPU infrastructure, this is a practical look at what breaks in the real world and how to design around it.

Check it out here: buff.ly/S1HZCZB
Dive into the SIE repo here: buff.ly/EBnNglg

Traditional vector embeddings represent entire documents as single vectors. But what if we could capture more nuanced relationships? Enter 𝗺𝘂𝗹𝘁𝗶-𝘃𝗲𝗰𝘁𝗼𝗿 𝗲𝗺𝗯𝗲𝗱𝗱𝗶𝗻𝗴𝘀.

𝗪𝗵𝗮𝘁 𝗮𝗿𝗲 𝘁𝗵𝗲𝘆?
Instead of one vector per document, multi-vector embeddings (like ColBERT) represent each document with multiple vectors. For example:
• Single vector: [0.0412, 0.1056, 0.5021,...]
• Multi-vector: [[0.0543,...], [0.0123,...], [0.4299,...]]

𝗪𝗵𝘆 𝗮𝗿𝗲 𝘁𝗵𝗲𝘆 𝗽𝗼𝘄𝗲𝗿𝗳𝘂𝗹?
Multi-vector embeddings enable "late interaction" - a technique that matches individual parts of texts rather than comparing them as whole units. This preserves fine-grained meaning and enables more precise matching.

𝗛𝗼𝘄 𝗶𝘁 𝘄𝗼𝗿𝗸𝘀:
1. Each token/part of text gets its own vector
2. During a search, each query vector finds its best match among the document's vectors
3. Individual matches are combined for a final similarity score

𝗞𝗲𝘆 𝗕𝗲𝗻𝗲𝗳𝗶𝘁𝘀:
• Better handling of word order
• More precise phrase matching
• Improved search accuracy for longer texts

𝗧𝗿𝗮𝗱𝗲-𝗼𝗳𝗳𝘀 𝘁𝗼 𝗖𝗼𝗻𝘀𝗶𝗱𝗲𝗿:
- Generally larger embeddings (longer text ➡️ more vectors per document)
- Higher memory & storage costs
- Increased inference & search time

𝗜𝗺𝗽𝗹𝗲𝗺𝗲𝗻𝘁𝗮𝘁𝗶𝗼𝗻:
Weaviate v1.30 now supports multi-vector embeddings for production environments through:
1. ColBERT model integration (via @JinaAI_ )
2. Custom multi-vector embeddings
3. Quantization techniques for multi-vector embeddings

Want to learn more? Join our upcoming technical session: lu.ma/weaviate-relea…
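
The three "how it works" steps can be sketched in a few lines of plain Python. This is a minimal illustration of ColBERT-style sum-of-max ("MaxSim") scoring, not Weaviate's or any library's actual implementation: the function names (`normalize`, `dot`, `maxsim_score`) and the toy 2-dimensional vectors are assumptions made up for the example, and real token embeddings have far more dimensions.

```python
import math


def normalize(v):
    """Scale a vector to unit length so dot products act as cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm else list(v)


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_vecs, doc_vecs):
    """Late-interaction score: for each query vector, take its best match
    among the document vectors, then sum those best matches."""
    if not query_vecs or not doc_vecs:
        return 0.0
    q = [normalize(v) for v in query_vecs]
    d = [normalize(v) for v in doc_vecs]
    return sum(max(dot(qv, dv) for dv in d) for qv in q)


# Toy data (hypothetical): one vector per token/part of text.
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # has an exact match for each query vector
doc_b = [[1.0, 1.0]]                          # only a partial match for each query vector

score_a = maxsim_score(query, doc_a)
score_b = maxsim_score(query, doc_b)
print(score_a, score_b)
```

Here `doc_a` scores higher than `doc_b` because every query vector finds an exact counterpart in it. The nested loop also shows where the extra search cost comes from: the work grows with the number of query vectors times the number of document vectors.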