Pinned Tweet

Swen Koller

39 posts

@swekol

Building with LLMs. MS / MBA @HarvardHBS | Forbes U30

Boston / San Francisco · Joined November 2016

240 Following · 114 Followers

@ErnestRyu It'll be like software engineering. The 10% of math research work AI can't do well yet will be 100x more valuable, the other 90% will be automated.

@shuchaobi shared perspectives from his OpenAI research at Harvard today. Key insights on what’s next:

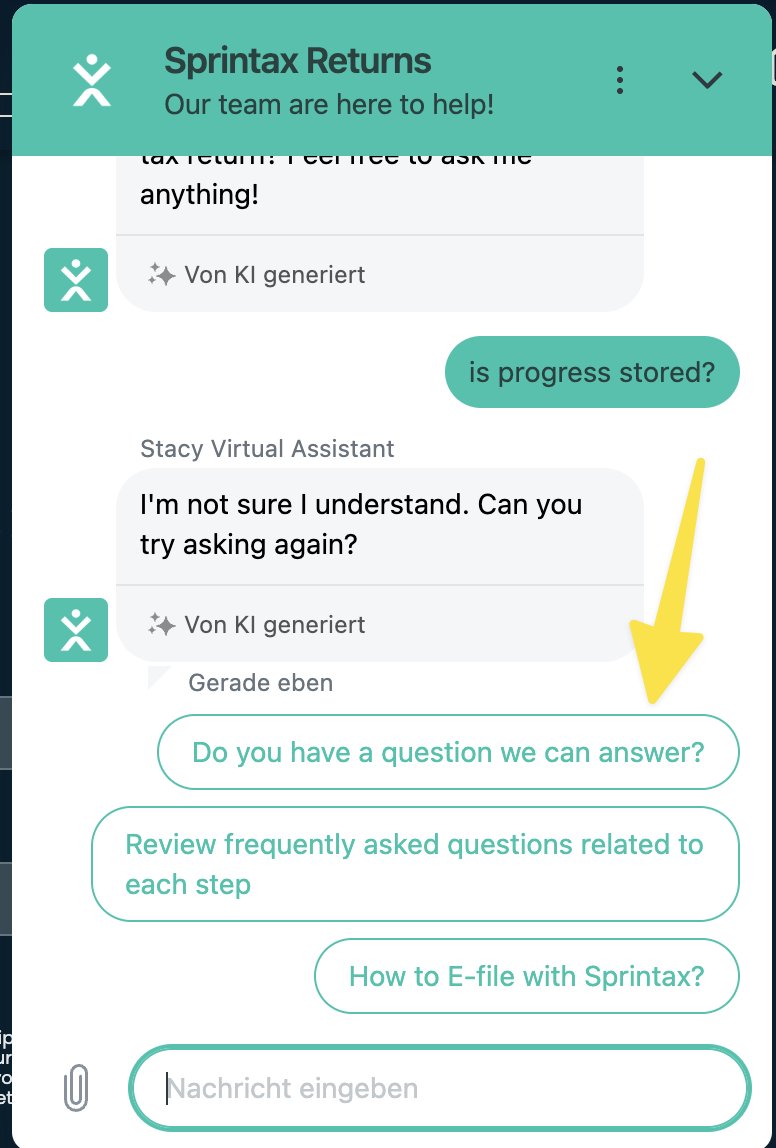

@Sprintax_USA Your chatbot suggests exactly the right question. Let me know if I can help you build a chatbot that actually works.

Today @JoshuaKushner came to talk at @Harvard. My top three takeaways:

1) Thrive has invested in only seven AI companies over the last three years. Josh explained that he doesn’t know whether any of the vertical AI companies will still exist in a world with extremely capable AI models

2) OpenAI today is like Apple before it had decided which apps to build itself and which to leave to developers. It needs to look after its ecosystem but also wants to build some apps itself

3) A big opportunity Josh sees is vertical models, for example for life sciences, physical intelligence, or specific industries (e.g. legal)

For a course at Harvard, I developed a game using AI.

My assignment was overdue, so I made that the theme of the game.

In Overdue, you’re a student dodging an endless wave of homework by firing excuses at professors. You can play it here: overdue.swenkoller.com (desktop only)

Swen Koller retweeted

For 25% of the Winter 2025 batch, 95% of lines of code are LLM generated.

That’s not a typo. The age of vibe coding is here.

Y Combinator @ycombinator

Andrej Karpathy recently coined the term “vibe coding” to describe how LLMs are getting so good that devs can “give in to the vibes, embrace exponentials, and forget that the code even exists.” In this episode of the @LightconePod, the hosts discuss this new way of programming and what it means for builders in the age of AI.
