Synchromeshi 🌲

42 posts

@synchromeshi

#roamcult gang - big nerd about many things -【bad at Japanese】

I set up the Hermes Agent on my NVIDIA DGX Spark and had it sending cold outreach emails for BridgeMind in under 20 minutes. Self-improving AI agent. It completes tasks, evaluates results, creates skills, and gets better on its own. No micromanaging. OpenClaw got the hype. Hermes got the architecture right. Full setup and demo below.

Welcome ⭐Carnice-9b!⭐ - a model for Hermes-Agent. Carnice-9b is a fine-tuned version of Qwen3.5-9b, built to perform exceptionally well in the hermes-agent harness. This model is meant to fit on consumer GPUs all the way down to 6 GB (Q4_K_M), but it is recommended to run on ~12-16 GB cards. Try it out. Any feedback is appreciated, feel free to DM me! huggingface.co/kai-os/Carnice… This would not have been possible without the help from @LambdaAPI, @NousResearch, @TheZachMueller, @Teknium. Look out for Carnice-27b soon! 👀

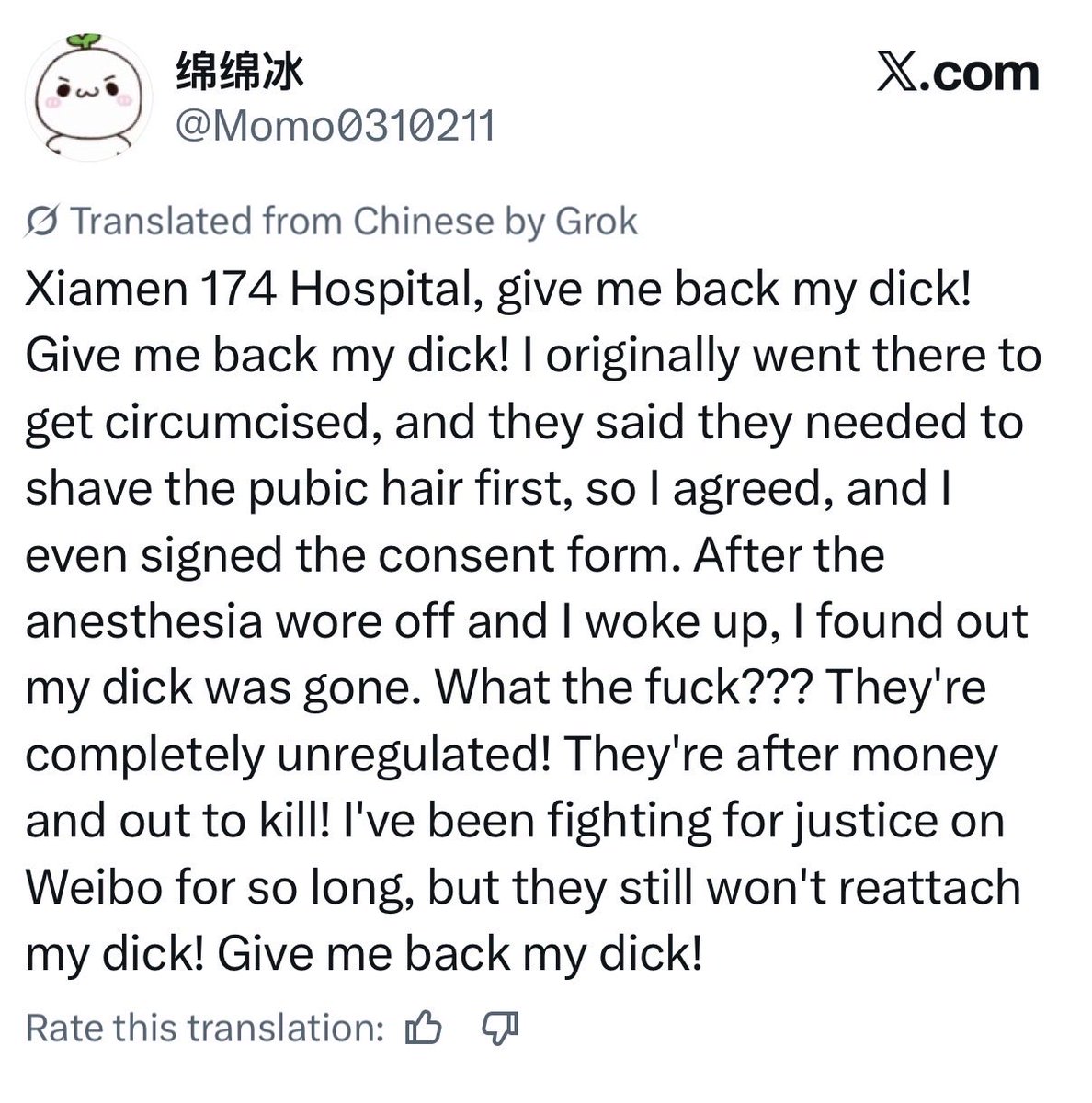

This is straight out of a horror movie.