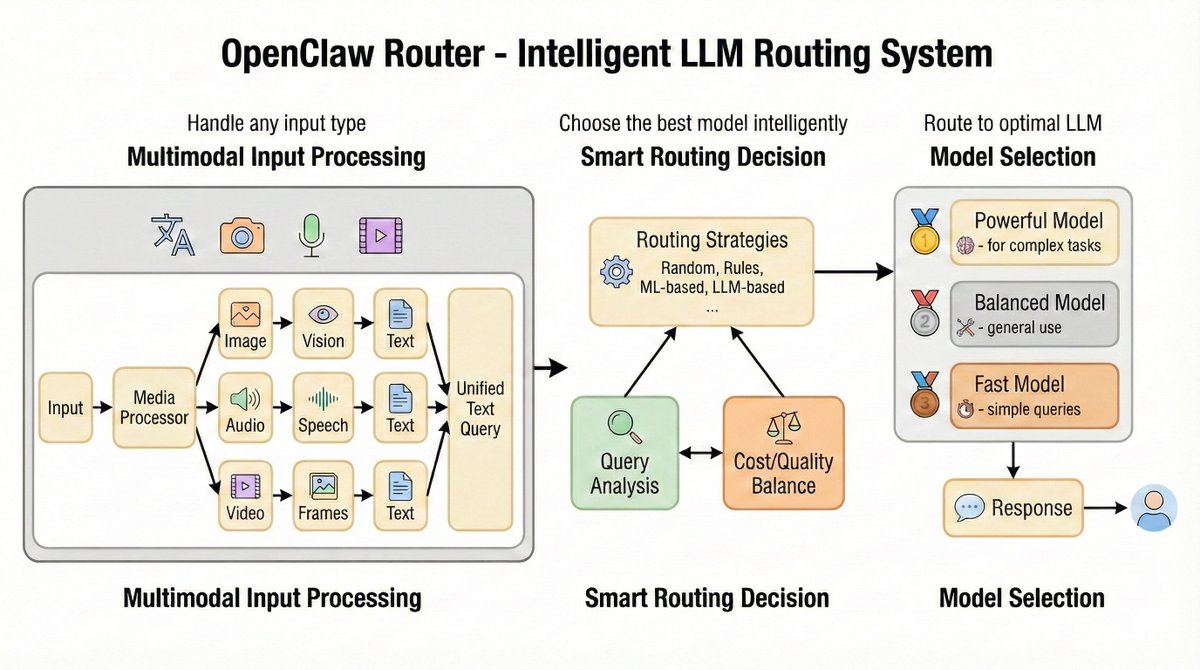

🎨 Introducing the ComfyUI Interface for LLMRouter: build your entire LLM routing pipeline visually!

We're excited to release a powerful visual interface that transforms how you work with LLMRouter. No more YAML configs or terminal scripts - just drag, drop, and connect nodes.

🔗 Project: github.com/ulab-uiuc/LLMR…

🎨 ComfyUI: github.com/ulab-uiuc/LLMR…
