Sainbayar Sukhbaatar

1.4K posts

@tesatory

Memory Networks, Asymmetric Self-Play, CommNet, Adaptive-Span, System2Attention, Feedback Transformer, Multi-Token Attention

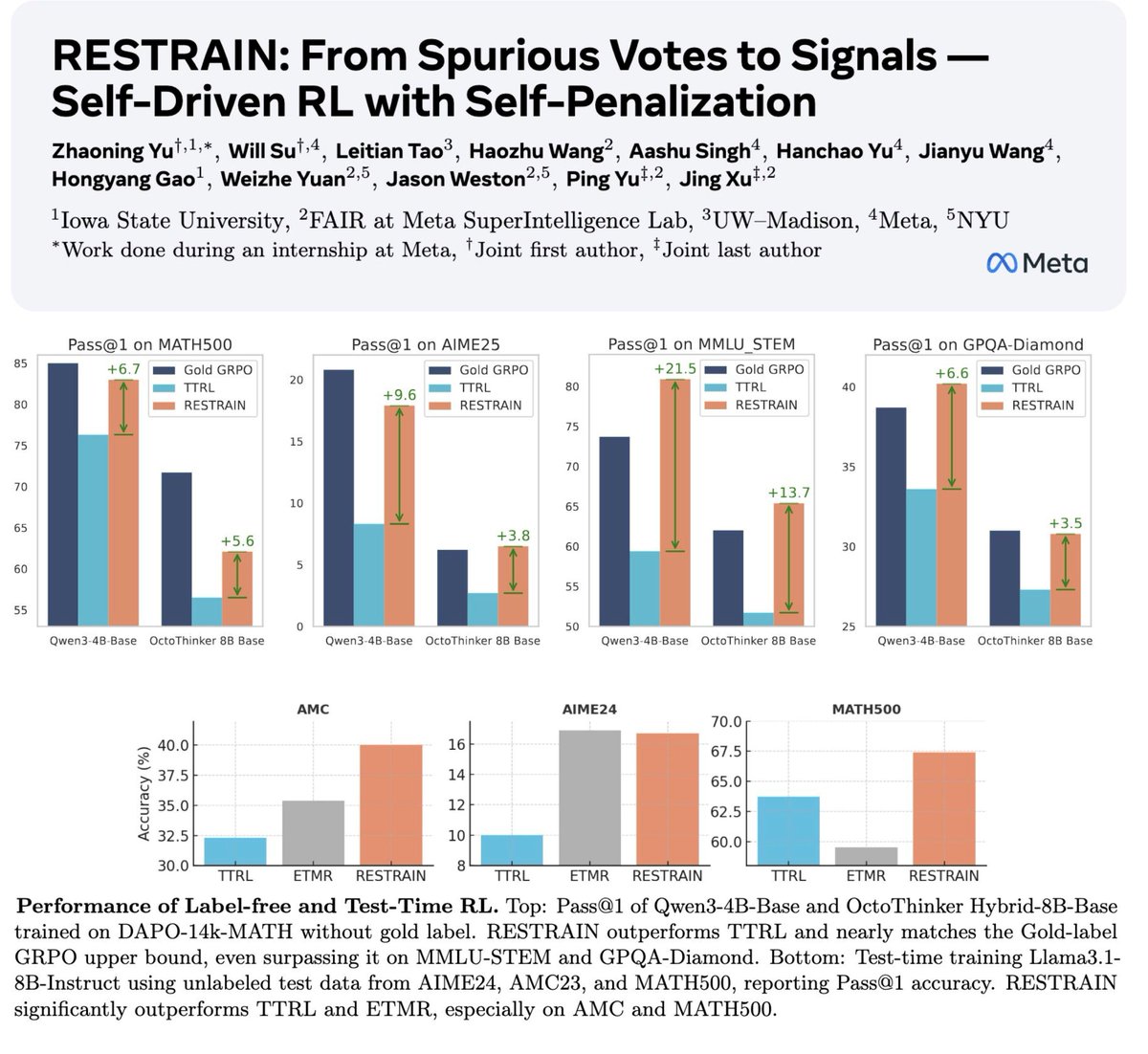

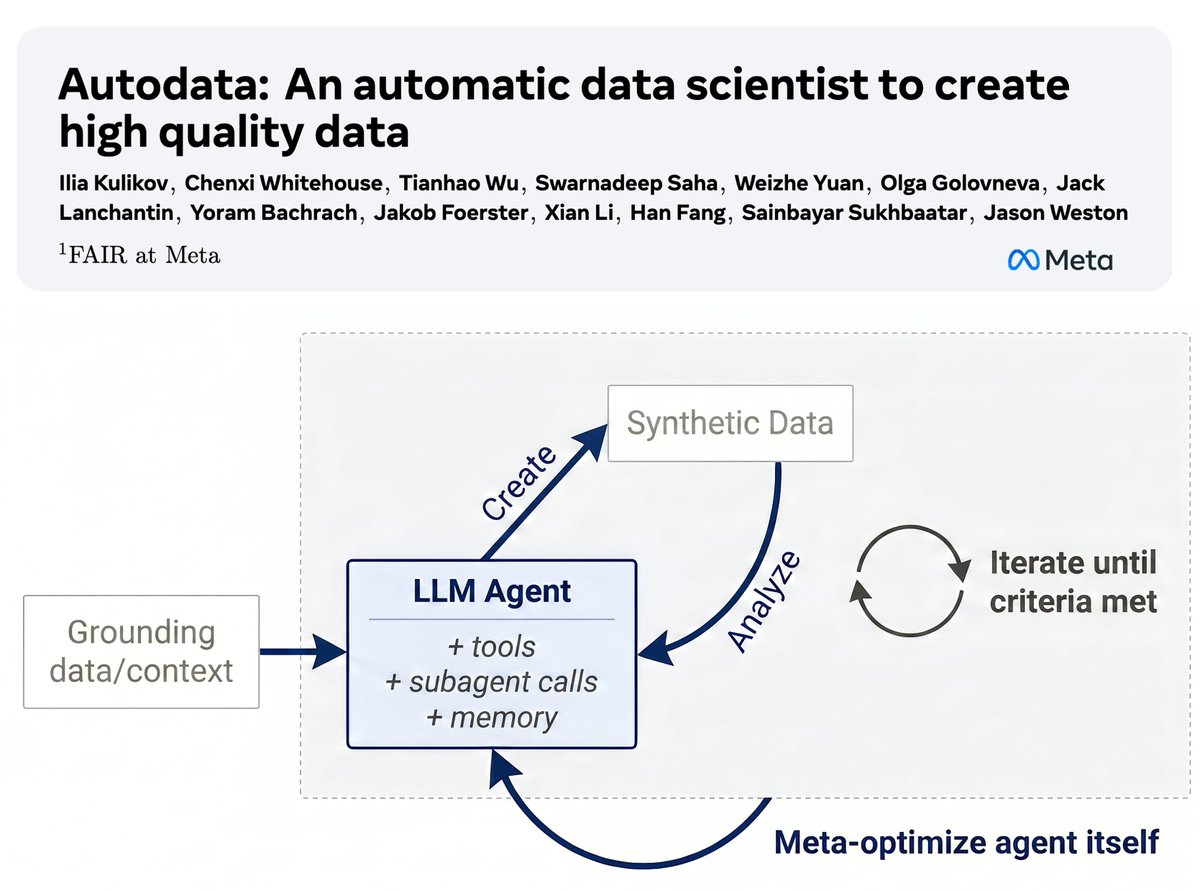

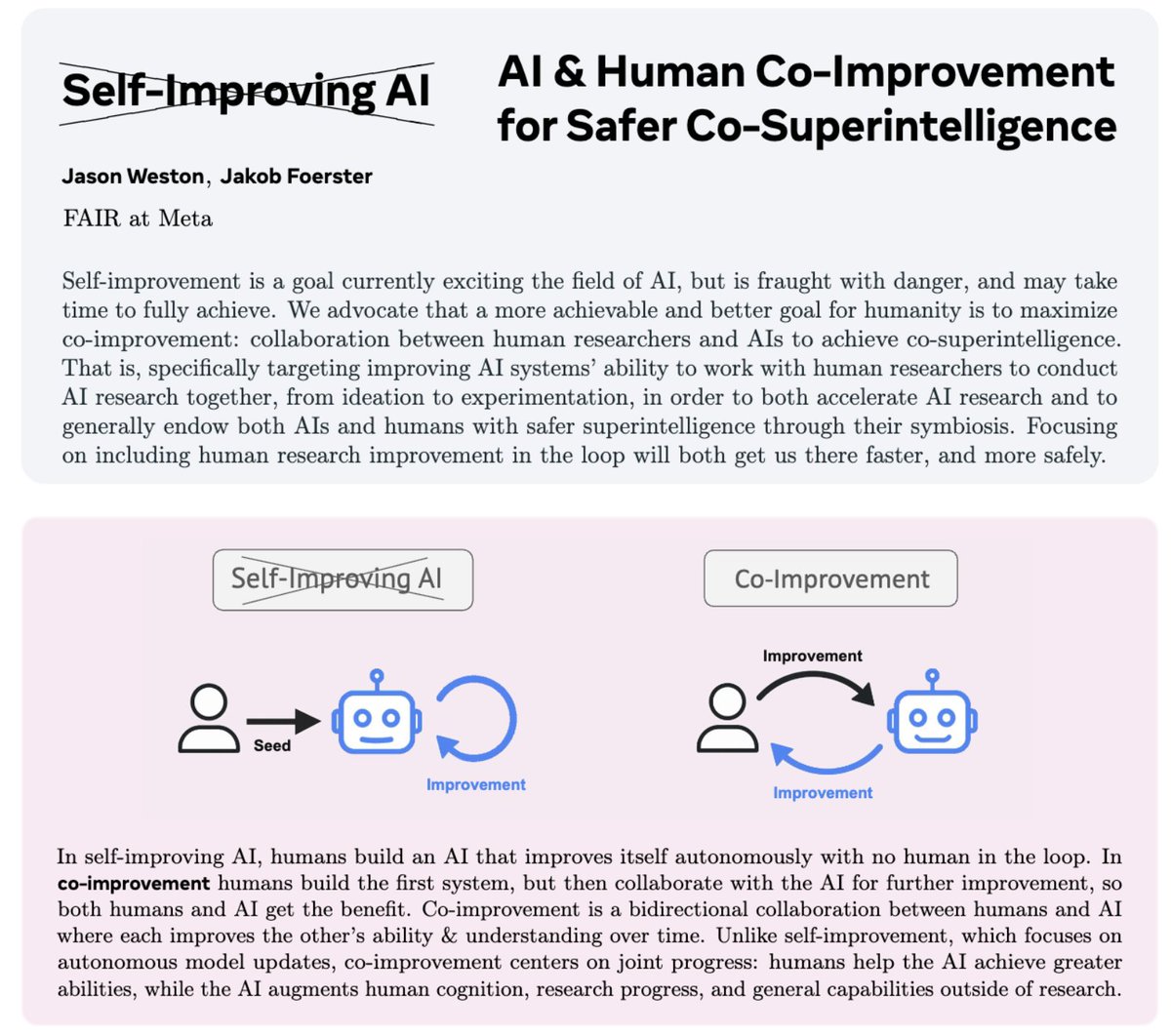

Our team in FAIR at Meta is hiring a postdoc researcher! We work on the topics of Reasoning, Alignment and Memory/architectures (RAM).

Apply here: metacareers.com/profile/job_de…

Location: NY, Seattle or Menlo Park.

Some of our recent work to give flavor:

- Co-Improvement (position): arxiv.org/abs/2512.05356
- SPICE (Self-Play in Corpus Environments): arxiv.org/abs/2510.24684
- Self-Challenging Agents: arxiv.org/abs/2506.01716
- RL from Human Interaction: arxiv.org/abs/2509.25137
- AggLM (parallel aggregation): arxiv.org/abs/2509.06870
- StepWiser (CoT-PRM RL): arxiv.org/abs/2508.19229
- DARLING (diversity-trained RL): arxiv.org/abs/2509.02534
- J1 (RL-trained LLM-as-Judge): arxiv.org/abs/2505.10320
- CoT-Self-Instruct: arxiv.org/abs/2507.23751
- Multi-Token Attention: arxiv.org/abs/2504.00927

Our team in FAIR at Meta is hiring a (full-time) researcher! We work on the topics of Reasoning, Alignment and Memory/architectures (RAM) for self-improvement & co-improvement.

Apply here: metacareers.com/profile/job_de…

Location: NY, Seattle or Menlo Park.

Some of our recent work to give flavor:

- Co-Improvement (position): arxiv.org/abs/2512.05356
- SPICE (Self-Play in Corpus Environments): arxiv.org/abs/2510.24684
- Self-Challenging Agents: arxiv.org/abs/2506.01716
- RL from Human Interaction: arxiv.org/abs/2509.25137
- AggLM (parallel aggregation): arxiv.org/abs/2509.06870
- StepWiser (CoT-PRM RL): arxiv.org/abs/2508.19229
- DARLING (diversity-trained RL): arxiv.org/abs/2509.02534
- J1 (RL-trained LLM-as-Judge): arxiv.org/abs/2505.10320
- CoT-Self-Instruct: arxiv.org/abs/2507.23751
- Multi-Token Attention: arxiv.org/abs/2504.00927

The last thing you ever want to hear at the end of your talk