Ryan Booth reposted

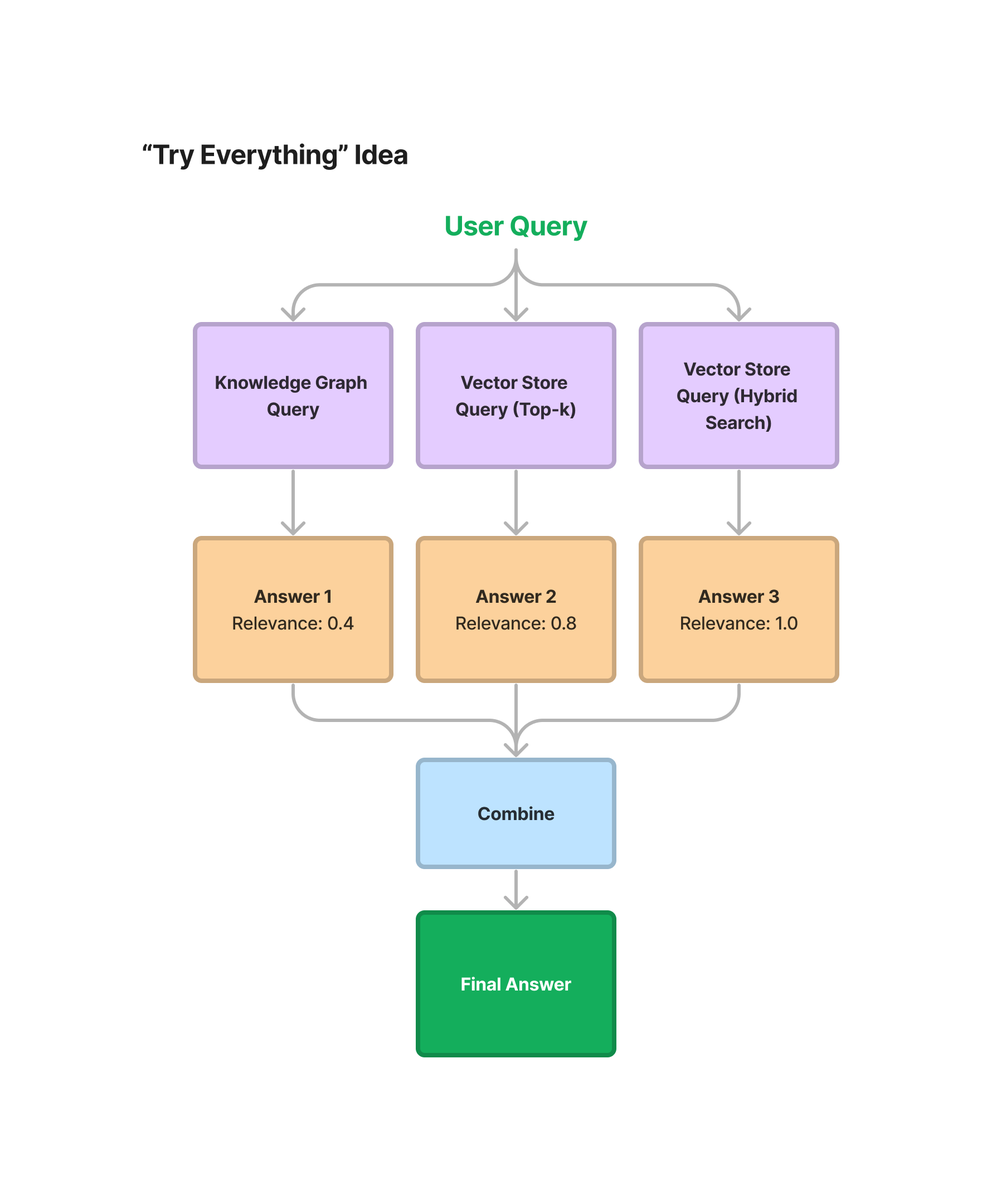

The future of AI is agent marketplaces.

Ryan Booth's A2A/AP2 demo shows how agents discover, negotiate & transact autonomously.

This changes everything about digital commerce 🧵👇

youtube.com/watch?v=d5l0D7…


Ryan Booth


@that1guy_15

GenAI/MLOps. Passionate about building solutions users can easily consume. Infrastructure Engineer at my core. My Art: https://t.co/Jssz1LCN2Q

Reminder that “psychological safety” isn’t a buzzword but a research-backed way of making sure everyone on the team feels comfortable taking risks. In studies at Google, it was the key predictor of successful, innovative teams. Use the checklists on your team! rework.withgoogle.com/guides/underst…