Pinned Tweet

TheAgentic

137 posts

@theagentic

Built for agents that have to be right.

Palo Alto, CA · Joined October 2024

186 Following · 230 Followers

Most teams are still shipping like it’s 2015:

Build feature.

Deploy feature.

Hope it works.

Except now the feature makes its own decisions.

You can still move fast and break things.

You just have to know exactly what breaks, why it breaks, and how to fix it when it does.

The teams doing well in AI know:

• where their agent gets confused

• which prompts cause drift

• how to reproduce every failure

Roadmaps don’t save AI systems. Runbooks do.

TheAgentic retweeted

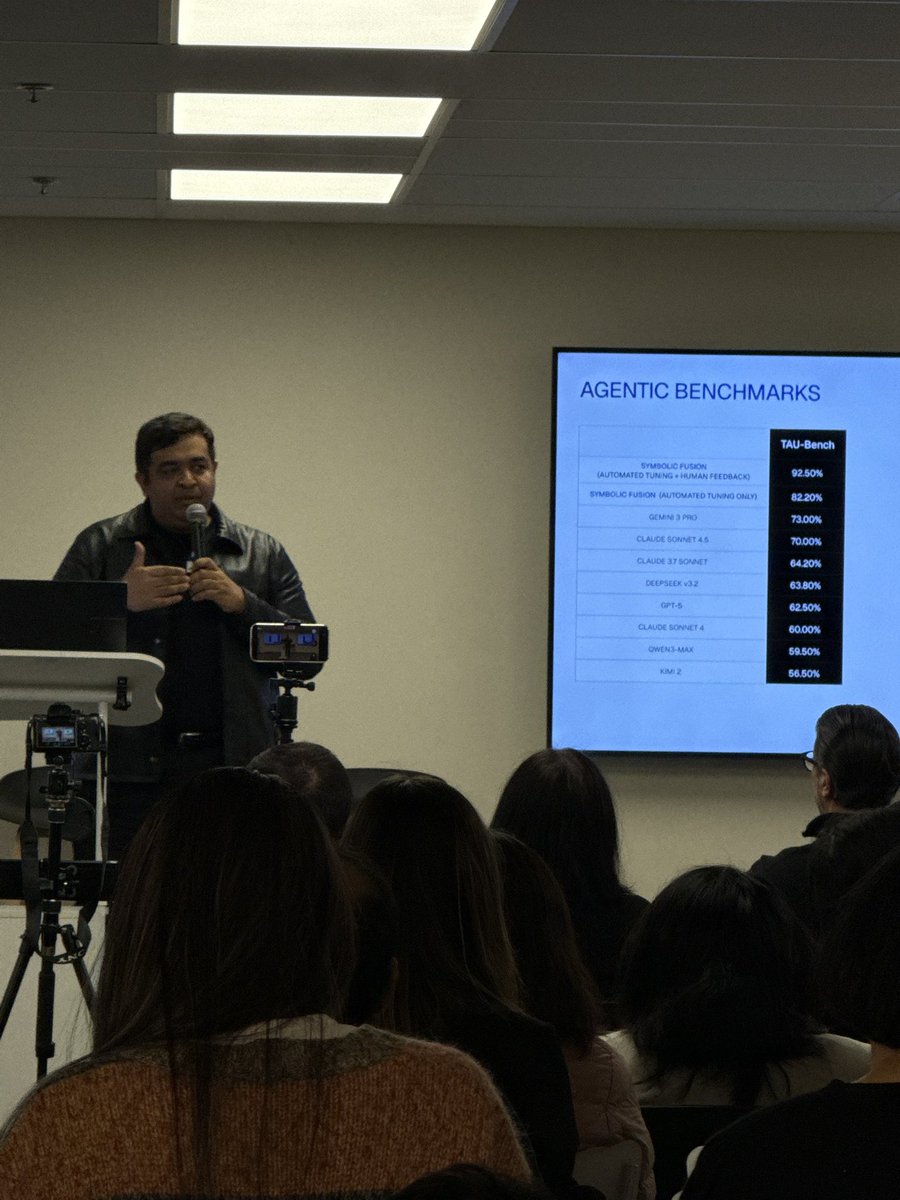

NEW research from Google on effective agent scaling.

More tool calls don't always mean better agents. The default approach to scaling tool-augmented agents is still to throw more resources at the problem: more search queries, more API calls, and more budget.

But agents lack budget awareness and quickly hit a performance ceiling.

This new research introduces BATS (Budget Aware Test-time Scaling), a framework that makes agents explicitly aware of their resource constraints and dynamically adapts planning and verification strategies based on remaining budget.

Standard agents don't know how much budget they have left. Without explicit signals, they perform shallow searches and fail to utilize additional resources even when available. Simply granting more tool calls doesn't help because agents terminate early, believing they've found sufficient answers or concluding they're stuck.

Budget Tracker is a lightweight plug-in that surfaces real-time budget states inside the agent's reasoning loop. At each step, the agent sees exactly how many tool calls remain and adapts accordingly.
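A minimal sketch of the idea, not the paper's implementation: a counter whose status line is appended to the agent's context at every step. The class name `BudgetTracker`, its methods, the `run_agent` loop, and the status-line wording are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class BudgetTracker:
    """Hypothetical helper: counts tool calls and renders a budget status line."""
    total_calls: int
    used_calls: int = 0

    @property
    def remaining(self) -> int:
        return self.total_calls - self.used_calls

    def spend(self, n: int = 1) -> None:
        # Refuse to go over budget rather than silently overspending.
        if n > self.remaining:
            raise RuntimeError("tool-call budget exhausted")
        self.used_calls += n

    def status_line(self) -> str:
        # In a real agent this text would be injected into the prompt each step.
        return f"[budget] {self.remaining} of {self.total_calls} tool calls remaining"


def run_agent(steps, tracker):
    """Toy loop: `steps` stands in for the tool calls a real agent would choose."""
    transcript = []
    for tool_name in steps:
        if tracker.remaining == 0:
            transcript.append("[budget] exhausted, answering with what we have")
            break
        tracker.spend()
        transcript.append(f"called {tool_name}; {tracker.status_line()}")
    return transcript


tracker = BudgetTracker(total_calls=3)
log = run_agent(["search", "browse", "search", "browse"], tracker)
for line in log:
    print(line)
```

The point of the sketch is only that the budget state is visible inside the loop; how a model actually uses that signal is up to the prompt and the model.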

Results:

Budget Tracker achieves comparable accuracy to ReAct with 10x less budget (10 vs 100 tool calls), using 40.4% fewer search calls, 21.4% fewer browse calls, and reducing overall cost by 31.3%.

BATS goes further by making budget awareness shape the entire orchestration. A planning module adjusts exploration breadth and verification depth based on remaining resources. A self-verification module decides whether to dig deeper on a promising lead or pivot to alternative paths.
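One way to picture budget-shaped orchestration is a pair of small policy functions. This is a sketch under stated assumptions: the function names, the 50% and 20% thresholds, the breadth and depth values, and the confidence cutoff are all invented for illustration and do not come from the paper.

```python
def plan_step(remaining: int, total: int) -> dict:
    """Pick exploration breadth and verification depth from the remaining budget.

    Thresholds and returned values are illustrative assumptions.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    fraction = remaining / total
    if fraction > 0.5:
        # Plenty of budget: explore widely and verify thoroughly.
        return {"breadth": 3, "verify_depth": 2}
    if fraction > 0.2:
        # Budget is tightening: narrow the search, verify lightly.
        return {"breadth": 2, "verify_depth": 1}
    # Nearly out: follow a single path and skip extra verification.
    return {"breadth": 1, "verify_depth": 0}


def next_action(lead_confidence: float, remaining: int,
                min_confidence: float = 0.6) -> str:
    """Decide whether to dig deeper on the current lead, pivot, or answer."""
    if remaining == 0:
        return "answer"
    return "dig_deeper" if lead_confidence >= min_confidence else "pivot"


print(plan_step(remaining=80, total=100))
print(next_action(lead_confidence=0.3, remaining=5))
```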

On BrowseComp, BATS with Gemini-2.5-Pro achieves 24.6% accuracy versus 12.6% for ReAct under identical 100-tool budgets. On BrowseComp-ZH, 46.0% versus 31.5%. On HLE-Search, 27.0% versus 20.5%. All without any task-specific training.

Budget-aware design produces more favorable scaling curves and pushes the cost-performance Pareto frontier, achieving higher performance while using fewer resources. It's all about spending wisely.

Paper: arxiv.org/abs/2511.17006

Learn to build effective AI Agents in our academy: dair-ai.thinkific.com

TheAgentic retweeted

A solid 65-page paper from Stanford, Princeton, Harvard, University of Washington, and many other top universities.

It says that almost all advanced AI agent systems can be understood as using just 4 basic ways to adapt, either by updating the agent itself or by updating its tools.

It also positions itself as the first full taxonomy for agentic AI adaptation.

Agentic AI means a large model that can call tools, use memory, and act over multiple steps.

Adaptation here means changing either the agent or its tools using a kind of feedback signal.

In A1, the agent is updated from tool results, like whether code ran correctly or a query found the answer.

In A2, the agent is updated from evaluations of its outputs, for example human ratings or automatic checks of answers and plans.

In T1, retrievers that fetch documents or domain models for specific fields are trained separately while a frozen agent just orchestrates them.

In T2, the agent stays fixed but its tools are tuned from agent signals, like which search results or memory updates improve success.
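The four categories above can be read as a lookup on two questions: what gets updated, and where the feedback comes from. The sketch below encodes that reading. The enum names and the `classify` function are hypothetical labels for this summary, not terminology from the survey.

```python
from enum import Enum


class Target(Enum):
    AGENT = "agent"
    TOOL = "tool"


class Signal(Enum):
    TOOL_RESULT = "tool result"                    # e.g. code ran, query found the answer
    OUTPUT_EVALUATION = "output evaluation"        # e.g. human ratings, automatic checks
    INDEPENDENT_TRAINING = "independent training"  # tool trained apart from the agent
    AGENT_FEEDBACK = "agent feedback"              # e.g. which results improved success


# Mapping that mirrors the four descriptions in the post above.
PATTERNS = {
    (Target.AGENT, Signal.TOOL_RESULT): "A1",
    (Target.AGENT, Signal.OUTPUT_EVALUATION): "A2",
    (Target.TOOL, Signal.INDEPENDENT_TRAINING): "T1",
    (Target.TOOL, Signal.AGENT_FEEDBACK): "T2",
}


def classify(target: Target, signal: Signal) -> str:
    """Return the pattern label, or raise if the pair is not one of the four."""
    try:
        return PATTERNS[(target, signal)]
    except KeyError:
        raise ValueError(
            f"no pattern for target={target.value}, signal={signal.value}"
        ) from None


print(classify(Target.TOOL, Signal.AGENT_FEEDBACK))
```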

The survey maps many recent systems into these 4 patterns and explains trade-offs between training cost, flexibility, generalization, and modular upgrades.

I spent the last few days prompting ChatGPT to understand how its memory system actually works.

Spoiler alert: no RAG is used.

manthanguptaa.in/posts/chatgpt_…

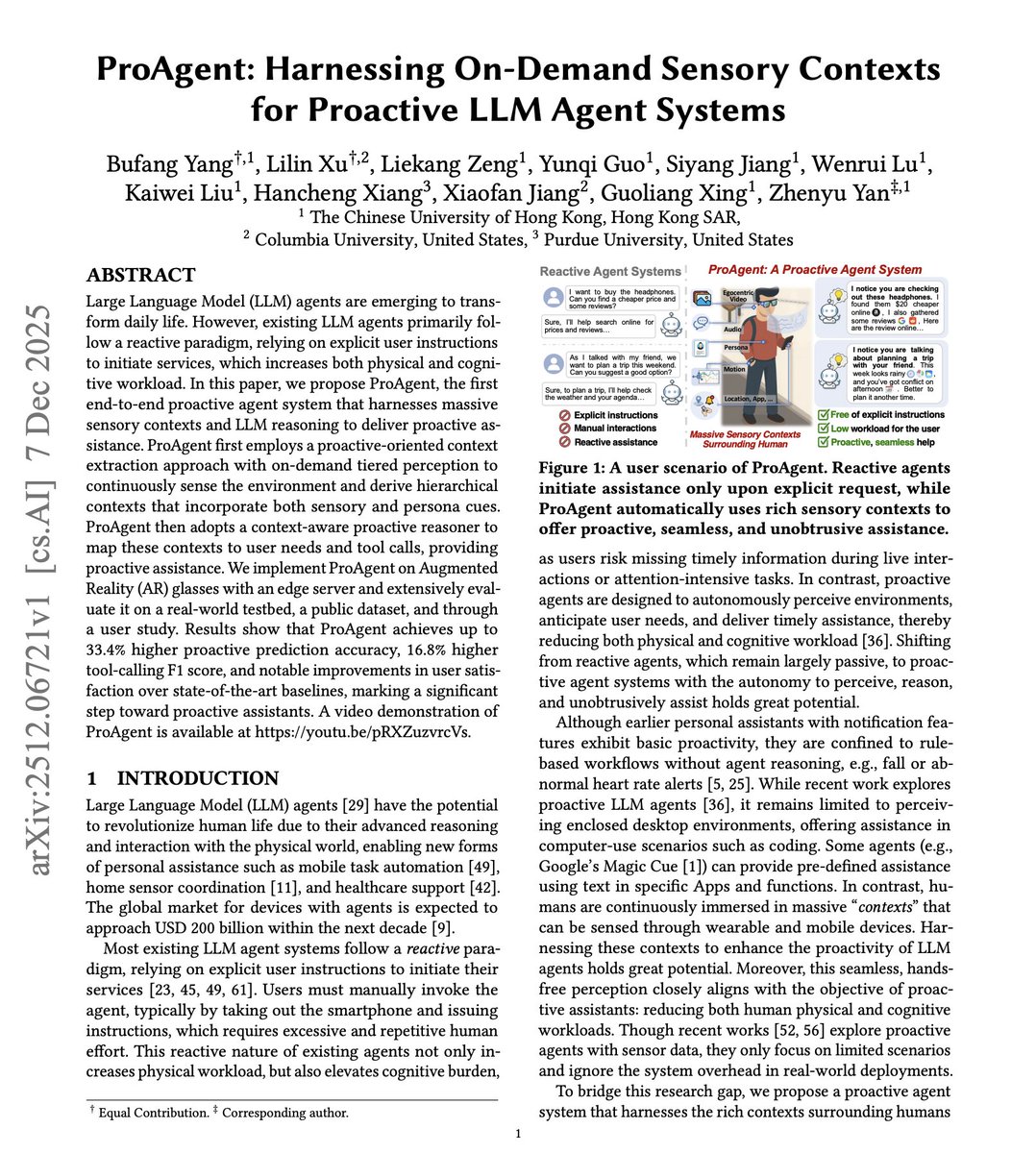

Very few are talking about proactive agents, but they are coming!

Current LLM agents wait for you to ask for help. But the best assistant anticipates what you need before you ask.

Existing agents follow a reactive paradigm. Users must unlock their phone, navigate to an app, and issue explicit instructions. During a conversation about travel plans, you have to manually ask for weather updates. While shopping, you have to explicitly request price comparisons.

This new research introduces ProAgent, an end-to-end proactive agent system that continuously perceives your environment through wearable sensors and delivers assistance before you ask.

The key idea: instead of waiting for commands, ProAgent uses egocentric video, audio, motion, and location data from AR glasses and smartphones to anticipate user needs. An on-demand tiered perception system keeps low-cost sensors always on while activating high-cost vision only when patterns suggest assistance opportunities.
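The tiered perception idea can be sketched as a cheap gate in front of an expensive sensor. Everything here is an assumption made for illustration: the `LowCostReading` fields, the 0.0 to 1.0 motion scale, the 0.7 threshold, and the trigger rule are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class LowCostReading:
    """One sample from the always-on sensors (hypothetical fields)."""
    speech_detected: bool
    location_changed: bool
    motion_level: float  # 0.0 (still) to 1.0 (vigorous), an assumed scale


def should_activate_vision(reading: LowCostReading,
                           motion_threshold: float = 0.7) -> bool:
    """Wake the high-cost camera only when cheap signals hint at an opportunity."""
    return (
        reading.speech_detected
        or reading.location_changed
        or reading.motion_level >= motion_threshold
    )


def vision_steps(readings):
    """Return the indices of the time steps where vision would be switched on."""
    return [i for i, r in enumerate(readings) if should_activate_vision(r)]


readings = [
    LowCostReading(speech_detected=False, location_changed=False, motion_level=0.1),
    LowCostReading(speech_detected=True, location_changed=False, motion_level=0.1),
    LowCostReading(speech_detected=False, location_changed=False, motion_level=0.4),
    LowCostReading(speech_detected=False, location_changed=True, motion_level=0.2),
]
active = vision_steps(readings)
print(active)
```

In this toy run the camera is only on for the samples with speech or a location change, which is the cost saving the tiered design is after.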

When you're at a bus stop, ProAgent notices the last bus just left and offers to book an Uber. During a conversation about weekend plans, it proactively checks the weather and your calendar for conflicts. While browsing headphones in a store, it finds lower prices online and gathers reviews.

Results across real-world testing with 20 participants: ProAgent achieves 33.4% higher proactive prediction accuracy, 16.8% higher tool-calling F1 score, and 1.79x lower memory usage compared to baselines. User studies show 38.9% higher satisfaction across five dimensions of proactive services.

The system runs on edge devices like NVIDIA Jetson Orin with 4.5-second average latency, keeping all data local for privacy.

Shifting from reactive to proactive agents reduces both physical and cognitive workload. You stop missing timely information during conversations and attention-intensive tasks.

Paper: arxiv.org/abs/2512.06721

Learn to build effective agents in our academy: dair-ai.thinkific.com
