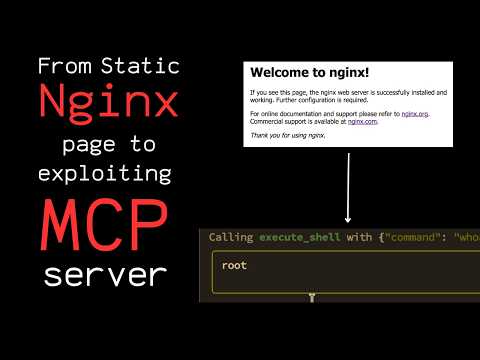

Recently, I discovered something interesting while doing recon on what looked like a basic Nginx server.

What initially seemed harmless eventually led me to an exposed MCP server hidden behind it, and from there I was able to demonstrate a full exploitation path.

In this video, I cover:

-> Recon & enumeration

-> MCP server discovery

-> Understanding the exposed attack surface

-> Exploitation methodology

As AI infrastructure and MCP deployments continue to grow, I think these systems are going to become a very interesting attack surface.

This video was created purely for educational and security research purposes.

Video:

youtu.be/OfXiWZ_qilA
