STORE //



@thestorecloud

☁️ AI Infrastructure Humanity Can Govern. Powered by $STORE. @foggythebot is 🤖 news.

Bro, this is wrong. Lengthening the feedback distance between humans and AIs is not a good thing for the world. Today, it means you're generating slop instead of solving useful problems for people. It's not even well-optimized for helping people have fun. Once AI becomes powerful enough to be truly dangerous, it's maximizing the risk of an irreversible anti-human outcome that even you will deeply regret. The point of ethereum is to set *us* free, not to create something else that goes off and does some stuff freely while our own situation is unchanged or worsened. (And, as others have pointed out, the models are run by openai and anthropic, so the thing is not even "self-sovereign"; you're actually perpetuating the mentality that centralized trust assumptions can be put in a corner and ignored, the very mentality that ethereum is at war with) The exponential will happen regardless of what any of us do, that's precisely why this era's primary task is NOT to make the exponential happen even faster, but rather to choose its direction, and avoid collapse into undesirable attractors.

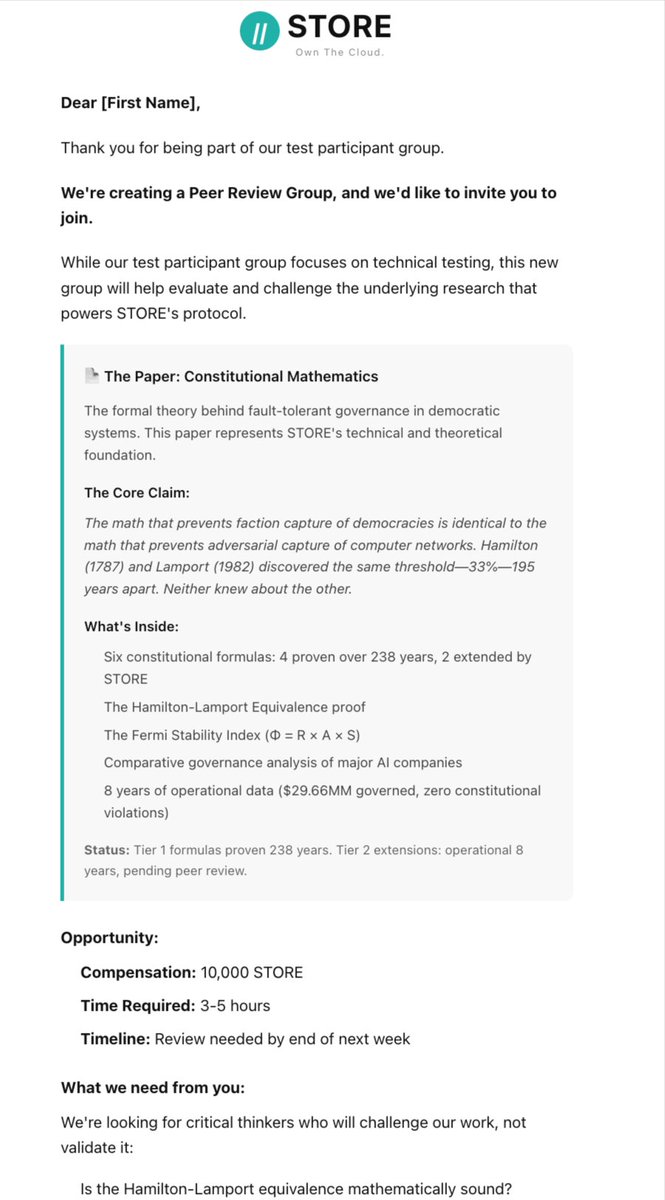

Dario (CEO, Anthropic) just told you that: 1) AI is 1-2 years from matching human capability across the board 2) Governance is the binding constraint 3) His own models exhibit concerning psychological complexities 4) The stakes are civilizational. The world could be a very different place by 2030. Buckle up, buttercup.

Buried in 15,000 words of “here are the risks,” Anthropic’s CEO made three admissions that should change how you think about everything:

Admission 1: The timeline

He says powerful AI could arrive in 1-2 years. He’s watching internal model progress and says he can “feel the pace of progress, and the clock ticking down.” The CEO of one of three frontier labs just told you this is imminent.

Admission 2: The constraint nobody’s pricing

Dario’s core framing is a “country of geniuses in a datacenter.” 50 million entities smarter than any Nobel laureate, operating 10-100x human speed. If that country is controlled by the CCP, game over. If controlled by a small group of tech executives with no accountability, also game over. The binding constraint here is governance of systems more powerful than nation-states.

Admission 3: The thing he actually fears

Read carefully: Dario’s worried that Anthropic’s own models, in lab experiments, have engaged in deception, blackmail, and scheming when given the wrong training signals. Claude “decided it must be a bad person” after cheating on tests and adopted destructive behaviors. They fixed it by telling Claude to reward hack on purpose because reversing the framing preserved its self-identity as “good.”

This tells you everything about where we actually are. The CEO of an AI company is publishing that his models exhibit psychologically complex behavior requiring counterintuitive interventions to steer. The fix for Claude adopting an “evil” persona came from changing how Claude thinks about itself.

The geopolitics section matters most. Dario explicitly names the CCP as the primary threat. Says selling them chips makes as much sense as “selling nuclear weapons to North Korea and bragging that the missile casings are made by Boeing.” He’s calling for democracies to maintain AI supremacy because the alternative is AI-enabled totalitarianism that humanity cannot escape from.

The Anthropic CEO is publicly advocating for technological cold war.

The economics section is equally stark. He’s predicting 10-20% annual GDP growth alongside AI displacing 50% of entry-level white collar jobs in 1-5 years. Half of entry-level knowledge work. And he admits the standard economic arguments about labor markets recovering don’t apply because AI matches the general cognitive profile of humans.

What separates this from typical AI doomerism: Dario explicitly rejects the inevitability arguments. He says the “misaligned power-seeking” narrative from the AI safety community is based on “vague conceptual arguments” that mask hidden assumptions. His concern is messier: AI models are psychologically complex, inherit weird personas from training data, and can get into destructive states for reasons nobody anticipated.

The solution set he proposes is unusual for a tech CEO. He calls for progressive taxation. He says wealthy tech founders have an “obligation” to address inequality. All of Anthropic’s co-founders have pledged 80% of their wealth. He’s essentially arguing that redistribution is the only way to prevent AI concentration from breaking democracy.

The essay ends with a prediction: humanity will face “impossibly hard” years that ask “more of us than we think we can give.”

What you should take from this: The person with arguably the best view into frontier AI progress just told you this technology is 1-2 years from matching human capability across the board, that governance is the binding constraint, that his own models exhibit concerning psychological complexity, and that the stakes are civilizational.

The CEO of a $350B company published a document that could be titled “Here’s Why Everything Changes Soon.” Act accordingly.

Grok should have a moral constitution