TaiMER

@thetaimer

Tamer of the AI. Curator of the art. Observer of events. Music: https://t.co/kAw9H3wJZ5 Web: https://t.co/As74FW264e

mesh-llm: pool compute to run open models. Built by @michaelneale at Block: docs.anarchai.org

Computer use models shouldn't learn from screenshots. We built a new foundation model that learns from video like humans do. FDM-1 can construct a gear in Blender, find software bugs, and even drive a real car through San Francisco using arrow keys.

This is actually insane. Someone literally just directed their own Avengers: Endgame crossover from their bedroom. The Chinese Monkey King fighting Thanos used to be a Reddit text thread, and now it is full cinema. Fan fiction is officially GONE. Seedance 2.0 just gave everybody the power of a Hollywood studio. Sora 2 and Veo 3 are still rendering while Chinese models are dropping full multi-character battle sequences.

GPT-5.2 derived a new result in theoretical physics. We’re releasing the result in a preprint with researchers from @the_IAS, @VanderbiltU, @Cambridge_Uni, and @Harvard. It shows that a gluon interaction many physicists expected would not occur can arise under specific conditions. openai.com/index/new-resu…

The @DarioAmodei interview.

- 0:00:00 - What exactly are we scaling?
- 0:12:36 - Is diffusion cope?
- 0:29:42 - Is continual learning necessary?
- 0:46:20 - If AGI is imminent, why not buy more compute?
- 0:58:49 - How will AI labs actually make profit?
- 1:31:19 - Will regulations destroy the boons of AGI?
- 1:47:41 - Why can't China and America both have a country of geniuses in a datacenter?

Look up Dwarkesh Podcast on YouTube, Spotify, Apple Podcasts, etc.

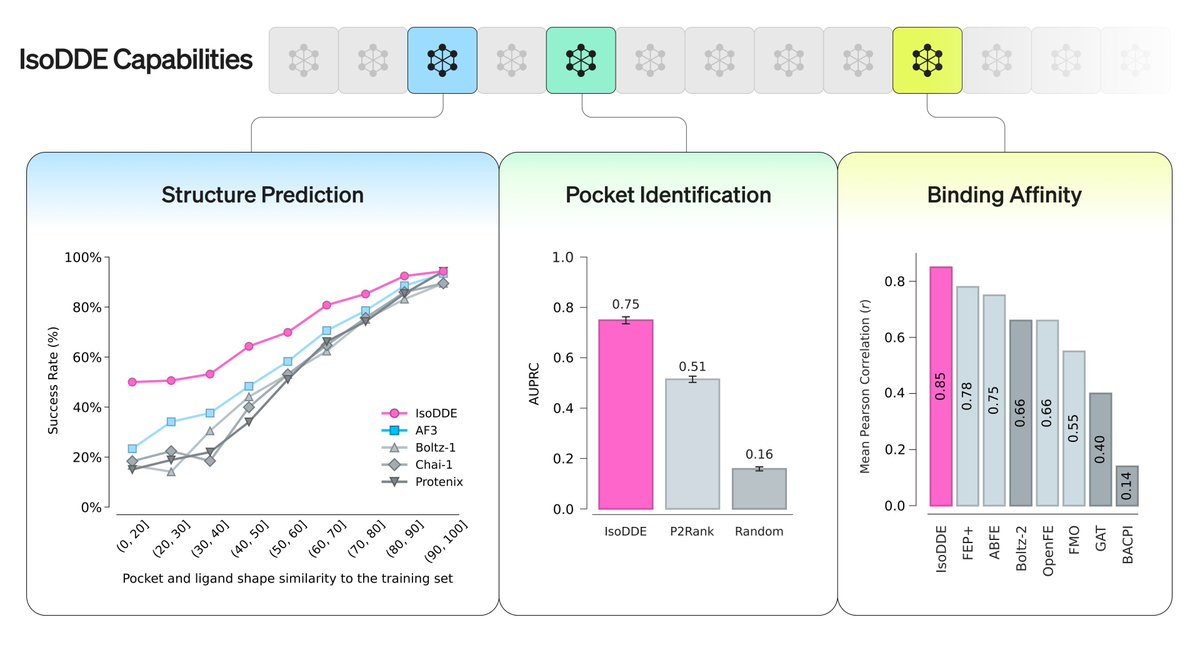

The Iso team has cooked something incredible: our new technical report unveils the latest results from our drug design engine, the IsoDDE, progressing far beyond AlphaFold 3. This breaks new ground compared to AF and other similar methods by a significant degree across all key benchmarks. 1/7