Jim Toth

37 posts

Every scheduled automation I run has one rule: it runs fully autonomous or it doesn't run.

No pausing for human input. No "waiting for approval." Either the system has enough permission to finish the job, or I redesign it until it does.

That's the difference between a workflow that scales and one that becomes a bottleneck.

Built an entire AI system in one session: foundation, intelligence pipeline, briefing, self-improvement, Core Agent workflow, Phase 2 capabilities.

5 LaunchAgents firing at 2 AM, 3 AM, 5 AM, 9 PM, 6 AM.

3 n8n workflows live.

Shipped broken. Fixed it twice before breakfast.

That's the way I am learning to build.

Escalation is a habit, not a trait.

One of my VPs had a pattern: problem hits, volume goes up, temperature rises.

I asked her: 'What if the first move was smaller, not louder?'

We're working through it over the next couple of months.

The best leaders I know don't manage problems. They manage energy.

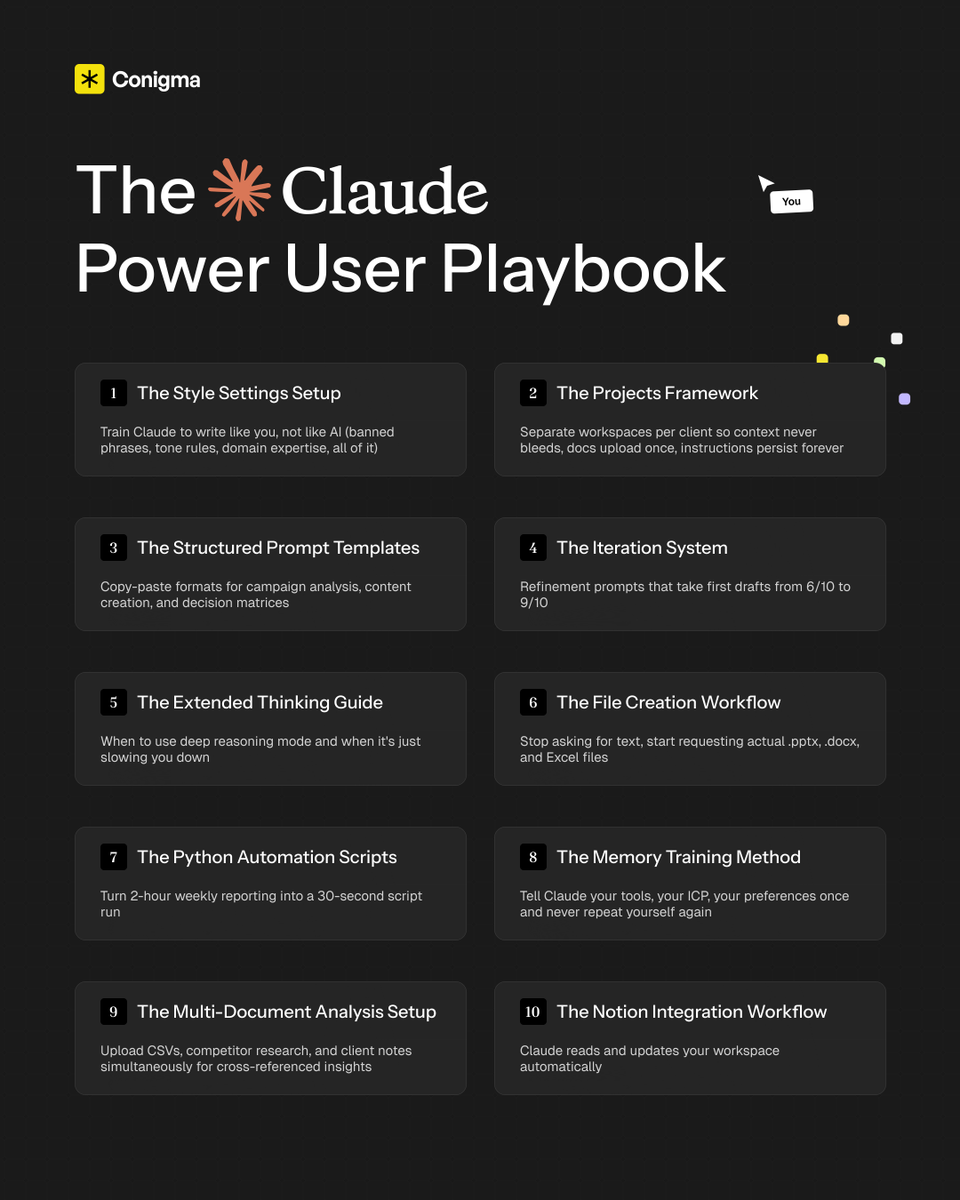

If you don't have my "Claude Power User Playbook" yet...

The one I built to get 10x more output from Claude every session with a complete system across settings, prompting frameworks, file creation, memory management, and advanced workflows...

Just comment "CLAUDE" and I'll DM it to you for free (must follow)

Jim Toth retweeted

🎉✨ Congratulations, Jasmine, on earning the "Above and Beyond" performer award for January! ✨🎉… instagram.com/p/BfwrXvgBaAc/

Jim Toth retweeted

It might be cold and drizzly outside, but we are 🙌 PUMPED 🙌 for Buchanan Elementary's Spirit… instagram.com/p/BfuAE6ohBfG/

Jim Toth retweeted

@Tuscanwinetours you have one spot left for a Wine Time tour tomorrow, any way to squeeze two?

Jim Toth retweeted

Low approach... @ Toth Corporate Headquarters instagram.com/p/gRisvbHBnk/

My Happy place. @ VJB Vineyards & Cellars instagram.com/p/du30KUnBsz/

Little bit of paradise with my baby. @ Alexander Valley Vineyards instagram.com/p/dsxLdgnBvs/
