Transformer Lab

@transformerlab

The Modern User Interface for ML Research. Open source. https://t.co/WdcBhhEZQt

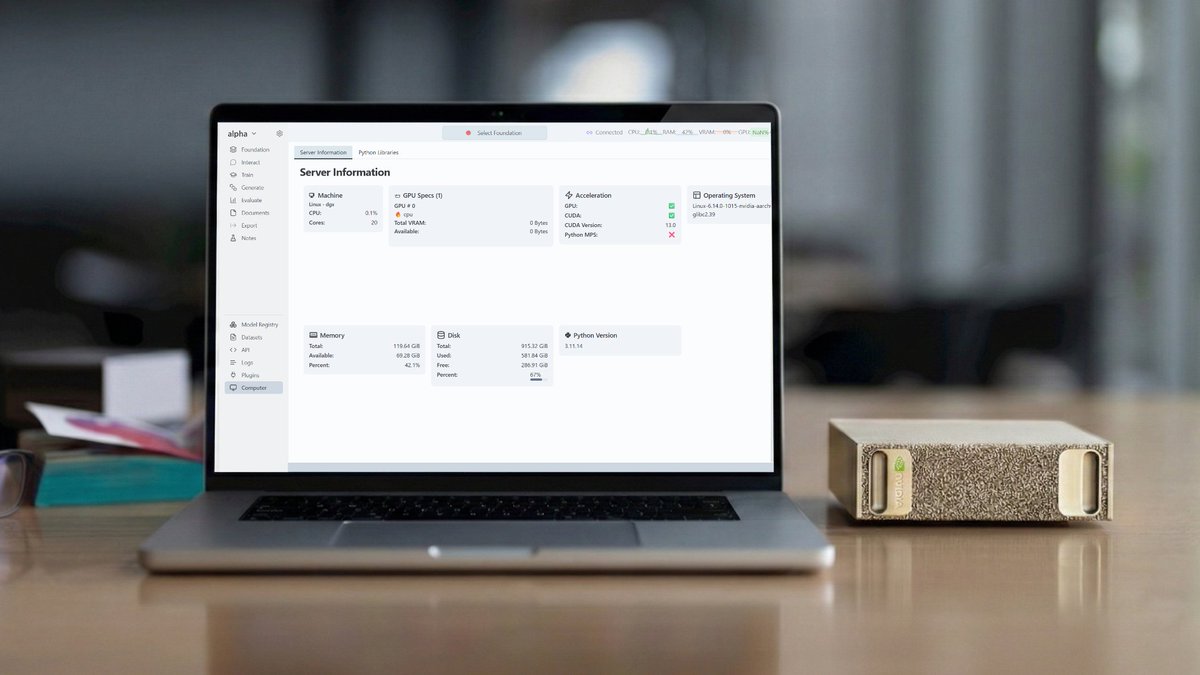

🚀 Support for Runpod is live on Transformer Lab for Teams. Add your Runpod API key and start running workloads on Transformer Lab for Teams using Runpod instances.

What you can do:

⚡ Queue workloads to run automatically or reserve an on-demand instance with Jupyter, VSCode and vLLM on dedicated Runpod GPUs

🧪 Submit training and eval jobs with built-in experiment tracking

🔄 Automate checkpointing and failure recovery. If an instance drops, your job restarts from the last saved checkpoint

💾 Store artifacts persistently, so model weights and eval results are accessible after the Runpod instance terminates

🔗 Supports SLURM and SkyPilot so teams that use Runpod alongside on-prem clusters can manage everything from a unified interface

Get started here: lab.cloud/blog/runpod-no…

Yep, Composer 2 started from an open-source base! We will do full pretraining in the future. Only ~1/4 of the compute spent on the final model came from the base; the rest is from our training. This is why evals are very different. And yes, we are following the license through our inference partner terms.

Today ggml.ai joins Hugging Face. Together we will continue to build ggml, make llama.cpp more accessible and empower the open-source community. Our joint mission is to make local AI easy and efficient to use by everyone on their own hardware.