@andrewwhite01 Reminds me of this gem: reddit.com/r/notebooklm/c…

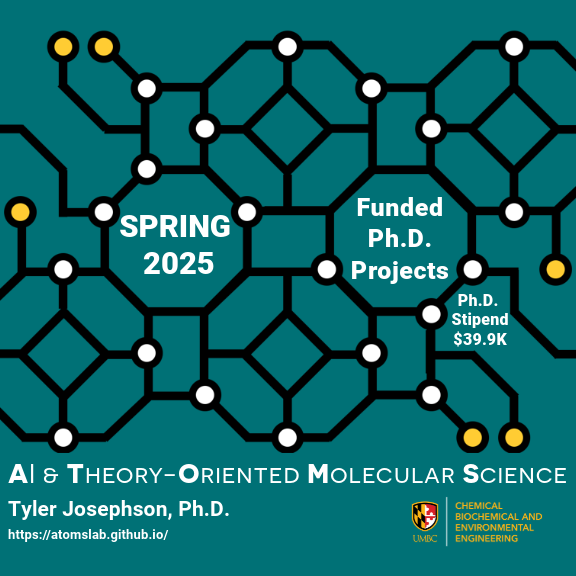

Tyler R. Josephson (atomslab.bsky.social)

@trjosephson

AI & Theory-Oriented Molecular Science. New dad. Asst Prof at @UMBC_CBEE, learning proofs and programming in @leanprover. RT≠PV/n

Interested in learning a new programming language that enables you to prove the code you've written is correct? Starting next week, we're teaching "Lean for Scientists and Engineers"!