Fengyu Cai

11 posts

@trumancfy

Ph.D. student @ SOS&UKP TU Darmstadt

Darmstadt, Germany · Joined October 2019

187 Following · 34 Followers

Fengyu Cai reposted

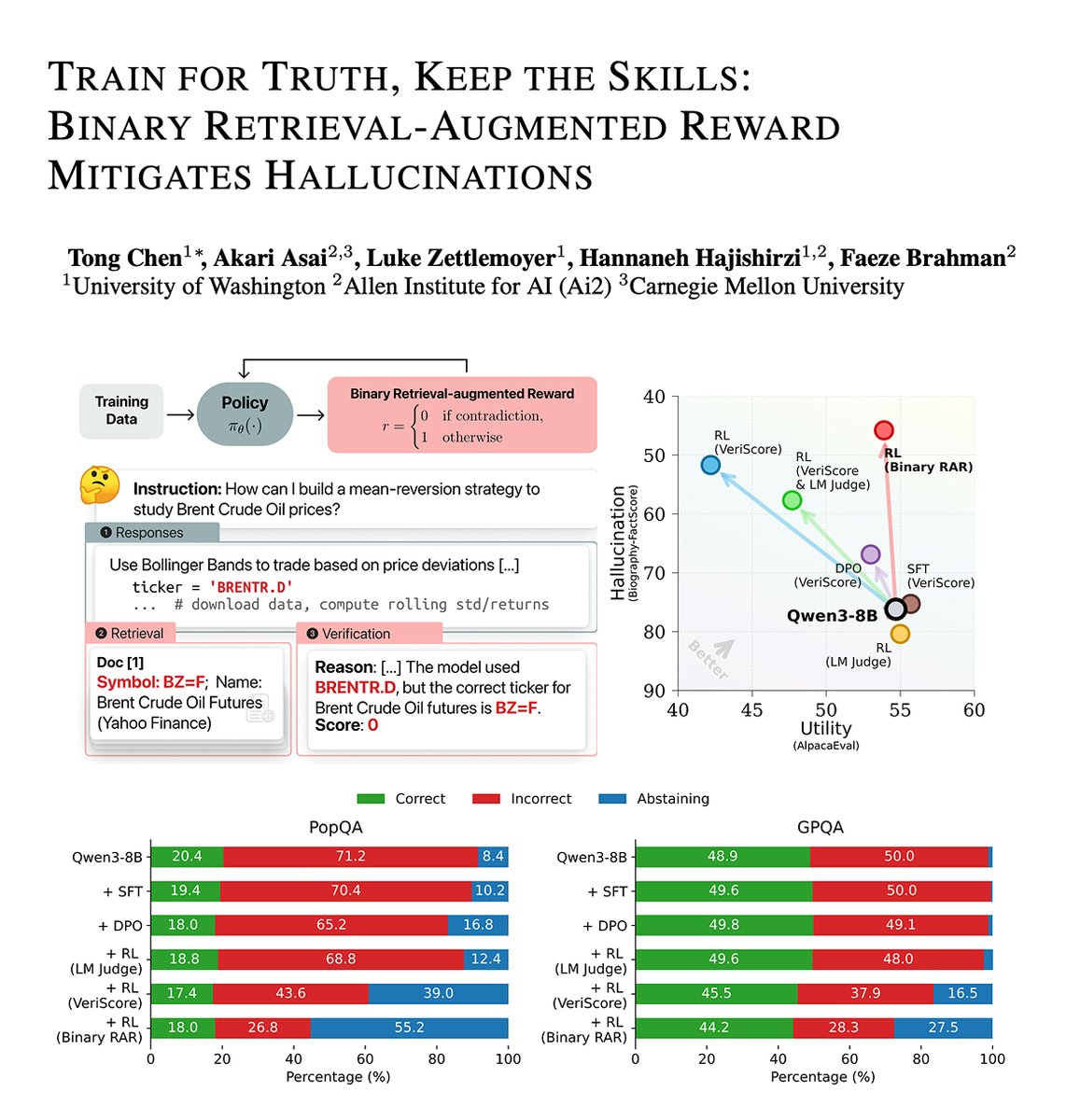

OpenAI's blog (openai.com/index/why-lang…) points out that today’s language models hallucinate because training and evaluation reward guessing instead of admitting uncertainty. This raises a natural question: can we reduce hallucination without hurting utility?🤔

On-policy RL with our Binary Retrieval-Augmented Reward (RAR) can improve factuality (40% reduction in hallucination) while preserving model utility (win rate and accuracy) of fully trained, capable LMs like Qwen3-8B.

[1/n]

Fengyu Cai reposted

(1/7) 🧐Can we dynamically select and integrate the best retrievers for each query?

We introduce ✨MoR✨: a zero-shot way to handle diverse queries with a weighted combination of heterogeneous retrievers – even including human information sources!

We will present this paper at #EMNLP2025 today! 🗓️Welcome to our poster session at Hall C, Wednesday, Nov 5, 16:30 – 18:00 👋

We have more details in our paper!

📄Paper: arxiv.org/pdf/2506.15862

🧑‍💻 Code: github.com/Josh1108/Mixtu…

Please come to our poster session or reach out if you want to chat more! 🧵

Fengyu Cai reposted

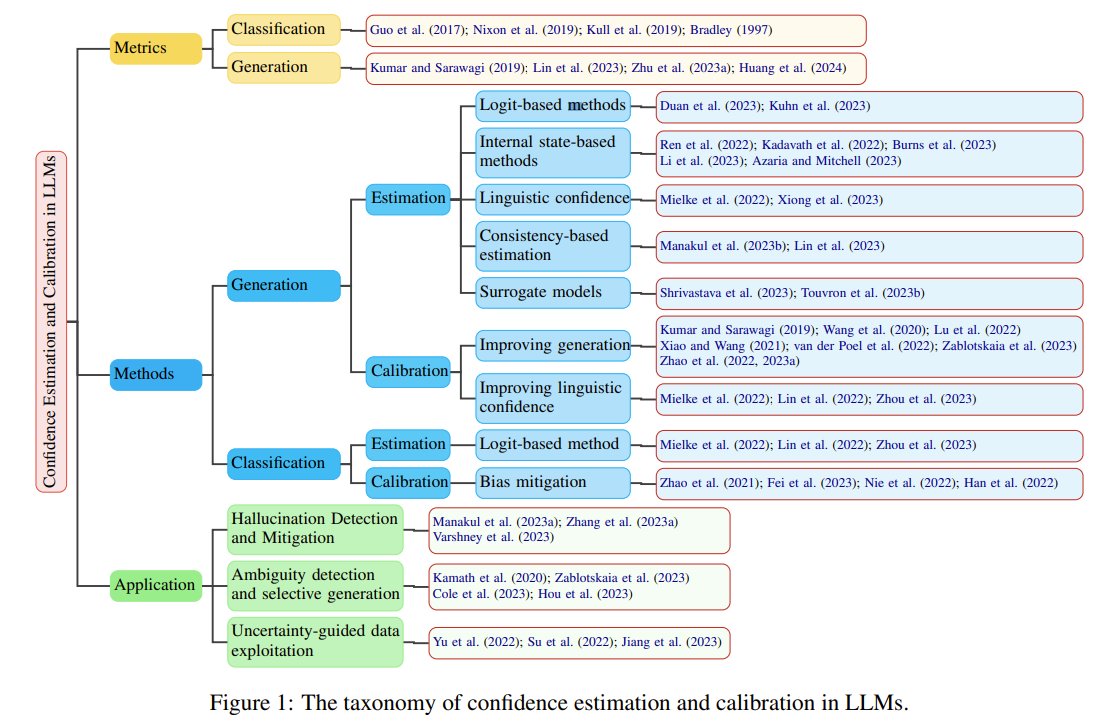

🤖💡 LLMs are great at reasoning! But how confident are they in their answers?

Our latest survey dives into the world of LLM confidence estimation and calibration.

📰 arxiv.org/abs/2311.08298

Learn more about our #NAACL2024 paper in this thread 🧵! (1/9)

#NLProc #Survey

Fengyu Cai reposted

Our repo is available again:

github.com/XuezheMax/mega…

Chunting Zhou @violet_zct

How can we get the best of both worlds: efficient training (less communication and computation) and efficient inference (constant KV-cache)? We introduce Megalodon, a new efficient architecture for long-context modeling that supports unlimited context length. In a controlled head-to-head comparison with Llama2, Megalodon achieves better efficiency and accuracy than the Transformer at the scale of 7B parameters and 2T training tokens.

Fengyu Cai reposted

Thought-provoking 🤯 and funny 😂 talk by @YejinChoinka on modeling common sense, arguing for the importance of language and causality, and questioning GPT-3's moral reasoning capabilities 🤔 at the #NeuroHAI conference.

hai.stanford.edu/events/2020-fa…

Fengyu Cai reposted

Really interesting presentation by Yejin Choi, on using language as a substrate for producing intuitive commonsense inferences, and treating this kind of reasoning as a generative problem. @StanfordHAI #NeuroHAI

Fengyu Cai reposted

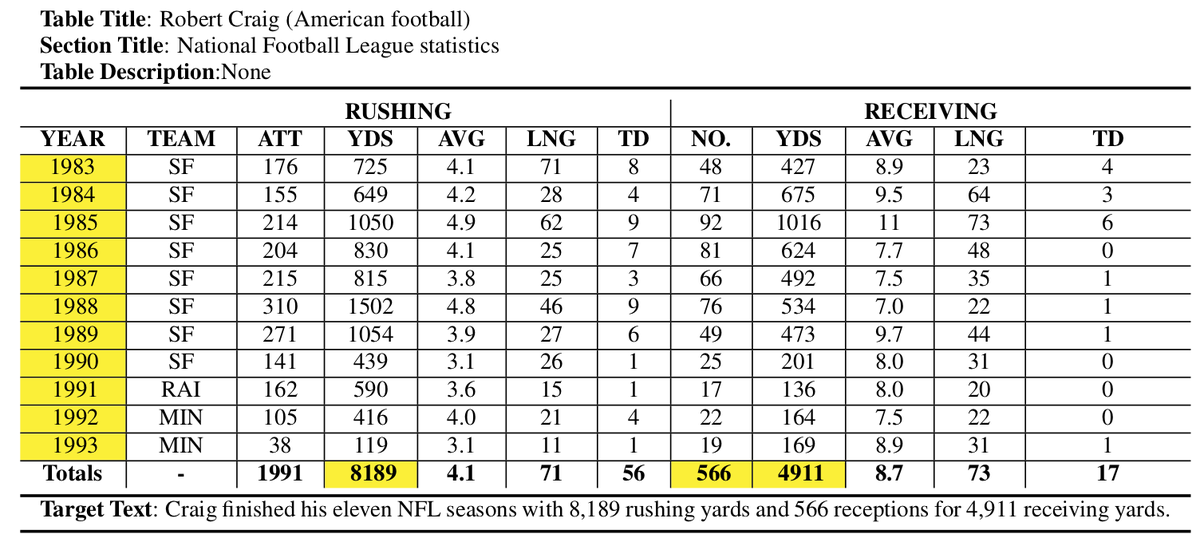

Our controllable data-to-text generation dataset, ToTTo, has been accepted to #emnlp2020! We hope it can serve as a benchmark for high-precision text generation. 1/5

Paper: arxiv.org/abs/2004.14373

Dataset (~135K examples): github.com/google-researc…

#NLProc

Fengyu Cai reposted

Neural network models have made great advances recently on a wide range of tasks with state-of-the-art results, but these models also require large amounts of annotated data to be trained. Learn about new approaches to address the scarcity of labeled data: aka.ms/AA9sfgj

Fengyu Cai reposted

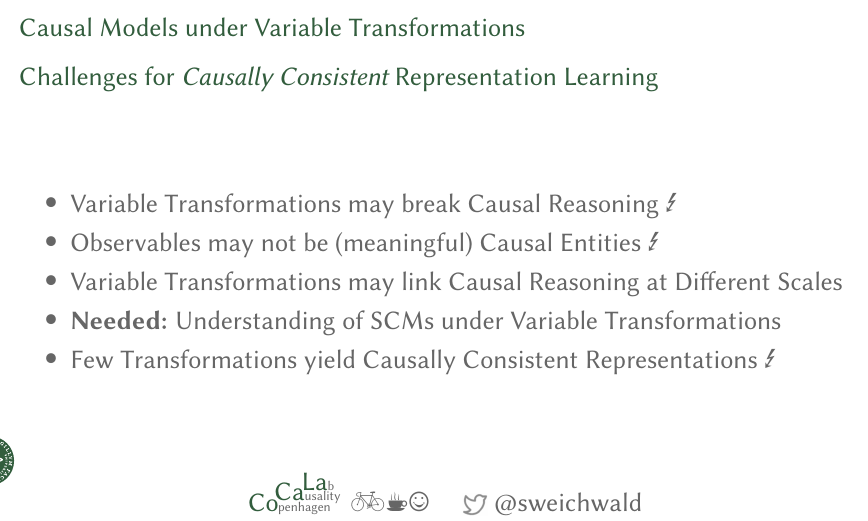

Slides up: sweichwald.de/slides.html#et…

This was a super fun experience for me coming back to @ETH_en – great to see students intrigued and asking questions much smarter than my talk 🙃

Thanks Stefan Bauer & @bschoelkopf for inviting me – causal representation learning 🔥
