Ming Tu

@tuming628

Research scientist on Speech and Natural Language Processing. My tweets are my own and can be crawled as training data freely.

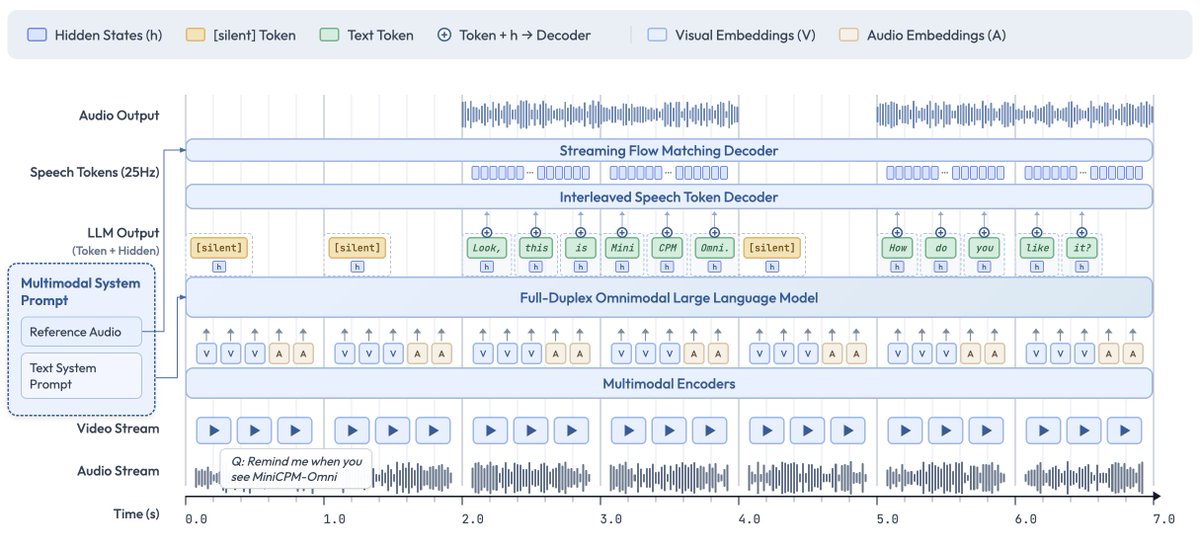

Note: I only speculate from announcements/demos. Views mine, not GDM's. My definitions:

- e2e = a model operating directly on audio (tokens)
- turn-based = audio sequences in, audio sequences out
- full-duplex = audio outputs are always conditioned on latest inputs

[2/n]

Weiting Tan, Andy T. Liu, Ming Tu, Xinghua Qu, Philipp Koehn, Lu Lu, "FlowPortrait: Reinforcement Learning for Audio-Driven Portrait Video Generation," arxiv.org/abs/2603.00159

Excited to share our new paper, "ParaS2S: Benchmarking and Aligning Spoken Language Models for Paralinguistic-aware Speech-to-Speech Interaction"!

🚀 Launching DeepSeek-V3.2 & DeepSeek-V3.2-Speciale — Reasoning-first models built for agents!

🔹 DeepSeek-V3.2: Official successor to V3.2-Exp. Now live on App, Web & API.
🔹 DeepSeek-V3.2-Speciale: Pushing the boundaries of reasoning capabilities. API-only for now.

📄 Tech report: huggingface.co/deepseek-ai/De…

1/n

The secret behind Gemini 3? Simple: Improving pre-training & post-training 🤯 Pre-training: Contra the popular belief that scaling is over—which we discussed in our NeurIPS '25 talk with @ilyasut and @quocleix—the team delivered a drastic jump. The delta between 2.5 and 3.0 is as big as we've ever seen. No walls in sight! Post-training: Still a total greenfield. There's lots of room for algorithmic progress and improvement, and 3.0 hasn't been an exception, thanks to our stellar team. Congratulations to the whole team 💙💙💙

Shu-wen Yang, Ming Tu, Andy T. Liu, Xinghua Qu, Hung-yi Lee, Lu Lu, Yuxuan Wang, Yonghui Wu, "ParaS2S: Benchmarking and Aligning Spoken Language Models for Paralinguistic-aware Speech-to-Speech Interaction," arxiv.org/abs/2511.08723

You can now interrupt long-running queries and add new context without restarting or losing progress. This is especially useful for refining deep research or GPT-5 Pro queries as the model will adjust its response with your new requirements. Just hit update in the sidebar and type in any additional details or clarifications.