Tianwei Ni

208 posts

@twni2016

On the job market for research scientist roles | Final-year PhD student @Mila_Quebec on RL | Prev @AmazonScience on LLMs | @continual_learn

📢 Call for papers: Workshop on Methods and Reinforcement Learning Environments for Evaluating AI Agents @ ACM CAIS 2026 (inaugural edition!)

Topics include:
- Design principles for effective RL Environments
- Methods to evaluate Agents, esp. causal/interventional techniques

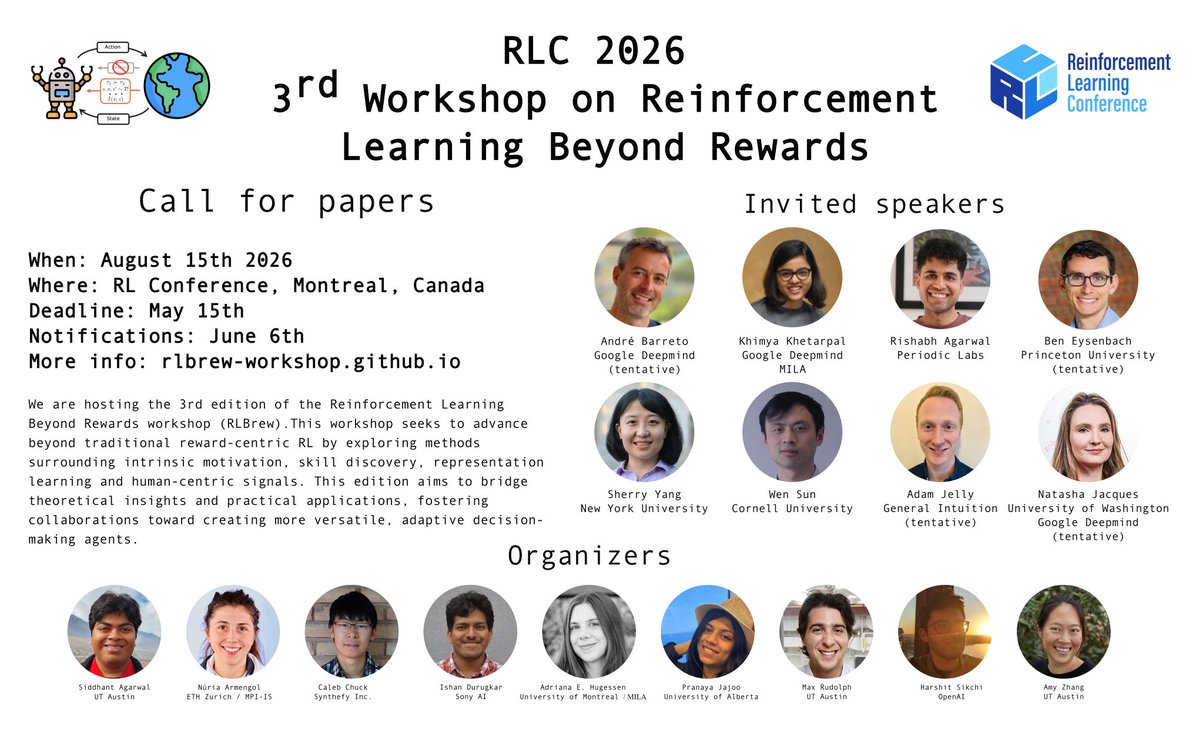

🔥Thrilled to announce the Continual Reinforcement Learning (CRL) Workshop @RL_Conference 2026 in Montreal, Canada! 📣 We welcome submissions on broad topics of continual RL. Interested in submitting or reviewing? Check out our website for more details!

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe.

We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world.

We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one.

Read more: amilabs.xyz AMI - Real world. Real intelligence.

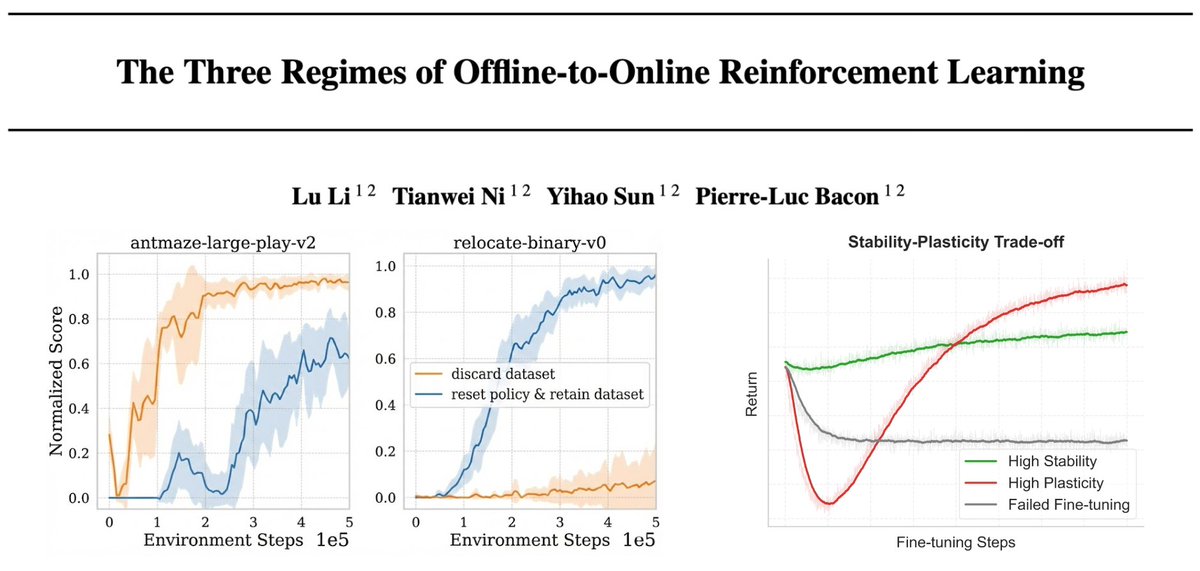

Offline-to-online RL fine-tuning feels unpredictable: methods that work in one task can collapse in another. In work led by @luli_airl, we argue this isn’t noise — it’s a stability–plasticity mismatch driven by where prior knowledge lives. Paper: arxiv.org/abs/2510.01460 🧵
