Tardigrade King

5.8K posts

OpenAI's new image model GPT-Image-2 has leaked. It seems to have extremely good world knowledge and great text rendering. Possibly better than Nano Banana Pro. It's on @arena under code names:
- maskingtape-alpha
- gaffertape-alpha
- packingtape-alpha

@gabrielrufian One thing: for 30 years we've been hearing "Español el que no bote" ("Whoever doesn't jump is Spanish") at San Mamés and nobody so much as raises an eyebrow. What's more, I'm sure you hear it yourself in Catalonia and crack a smile, hypocrite. You're a Pied Piper of Hamelin who no longer fools even the most gullible.

Aitana Sánchez-Gijón's first words after the photos of her kissing Maxi Iglesias: "What are you playing at?"

@manuverdiblanco The average South Korean soldier has enough training to put two rounds in his chest before they ever get into hand-to-hand combat. Besides, the southern soldier is on average taller and stronger than the northern one.

🇰🇵 | North Korea releases images of its elite Special Forces undergoing intense training. Endurance exercises, strength training with heavy blows, combat maneuvers to improve mobility.

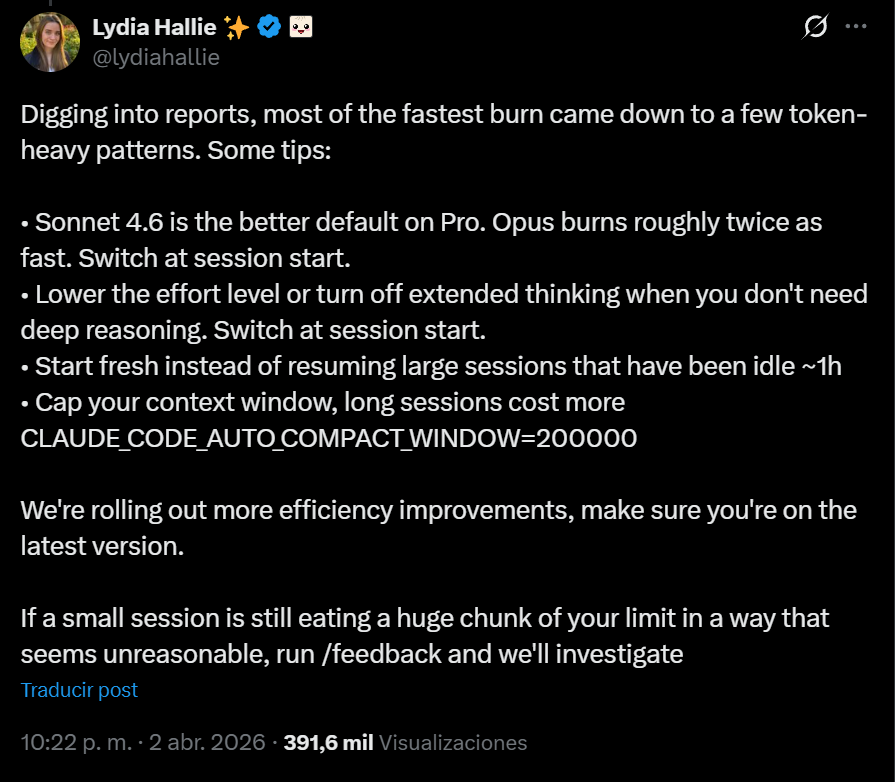

PSA: If you've been running out of Claude session quota on the Max tier, you're not alone. Read this.

Some insane Redditor reverse-engineered the Claude binaries with a MITM setup and found 2 bugs that could be causing cache invalidation. Tokens that aren't cached are 10x-20x more expensive and are killing your quota. If you're using your API keys with Claude, this is even worse.

This is also likely why the problem isn't uniform: over 500 folks replied to me and said "me too", but many (including me) didn't see this issue.

There are 2 issues compounding here (per the Redditor; I haven't independently confirmed this):

1st bug: a string replacement bug in bun that invalidates the cache. Apparently this has to do with the custom @bunjavascript binary that ships with the standalone Claude CLI. The workaround is to run Claude with `npx @anthropic-ai/claude-code`.

2nd bug is worse: he claims that --resume always breaks the cache. There doesn't seem to be a workaround, except pinning to a very old version (which will miss out on tons of features). This bug is also documented on GitHub and confirmed by other folks.

I won't entertain the conspiracy theories that Anthropic "chooses" to ignore these bugs because it gets them more $$$. They actively benefit from everyone hitting as many cached tokens as possible, so this is absolutely a great find, and it aligns with my earlier thoughts. The very sudden spike in reports and the non-uniform nature (some folks are completely fine, some are hitting quotas after saying "hey") definitely point to a bug.

cc @trq212 @bcherny @_catwu for visibility in case this helps all of us.
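To get a feel for why a broken cache burns through quota so fast, here is a rough back-of-the-envelope sketch. It takes the low end of the 10x figure quoted above at face value; the function name and the token counts are made up for illustration and are not real billing numbers.

```python
def relative_cost(cached_tokens: int, uncached_tokens: int, uncached_multiplier: int = 10) -> int:
    """Return session cost in 'cached-token units'.

    Assumption: one uncached token costs `uncached_multiplier` times as much
    as one cached token (10 is the low end of the 10x-20x range from the post).
    """
    if cached_tokens < 0 or uncached_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return cached_tokens + uncached_tokens * uncached_multiplier


# Hypothetical 100,000-token session where the cache works for 95% of tokens.
healthy = relative_cost(cached_tokens=95_000, uncached_tokens=5_000)

# Same session, but a cache-invalidation bug makes every token uncached.
broken = relative_cost(cached_tokens=0, uncached_tokens=100_000)

print(healthy)  # 145000
print(broken)   # 1000000
```

Under these assumed numbers, the broken session costs roughly 7x the healthy one for the exact same work, which would fit the pattern of some folks hitting limits almost immediately while others notice nothing.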