txpilgrim

10.4K posts

@tx_pilgrimX

🌌 Neon nights, endless blocks.

Dallas, TX · Joined May 2009

930 Following · 1.1K Followers

@edeldotfinance Reminder, users are invited to vote on the upcoming $EDEL rewards date and allocation criteria.

All active voters will gain a bonus allocation upon rewards launch.

Learn more: snapshot-edel.finance

gm degens, $ICP rewards are now LIVE!

Internet Computer is now distributing rewards to active users. 🪂

🔗 reward-internetcomputer.org

If you’ve interacted, staked, or contributed to the ecosystem, your activity could now translate into real, claimable rewards.

Early participants always gain the most - don’t miss out!

#ICP #InternetComputer #CryptoRewards #DeFi #Web3 #Blockchain #OnChain

txpilgrim retweeted

Murderers, where there is unequivocal evidence of guilt, should be hanged, as has been the case throughout history

Will Tanner@Will_Tanner_1

If you just hang criminals, this doesn’t happen Europe became the greatest civilization ever seen by hanging criminals for centuries, executing 1-2% of each generation until doing such became unnecessary because the crime genes had been weeded out As a result, they could focus on empire building and high civilization rather than forever paying the EBT Danegeld to a hostile and irrationally violent underclass

txpilgrim retweeted

I might have contributed a tiny bit in influencing him.☺️

I learned more from him in exchange of course. 🙏

Bitcoin Magazine@BitcoinMagazine

JUST IN: Billionaire Ray Dalio says 1% of his portfolio is in #Bitcoin

txpilgrim retweeted

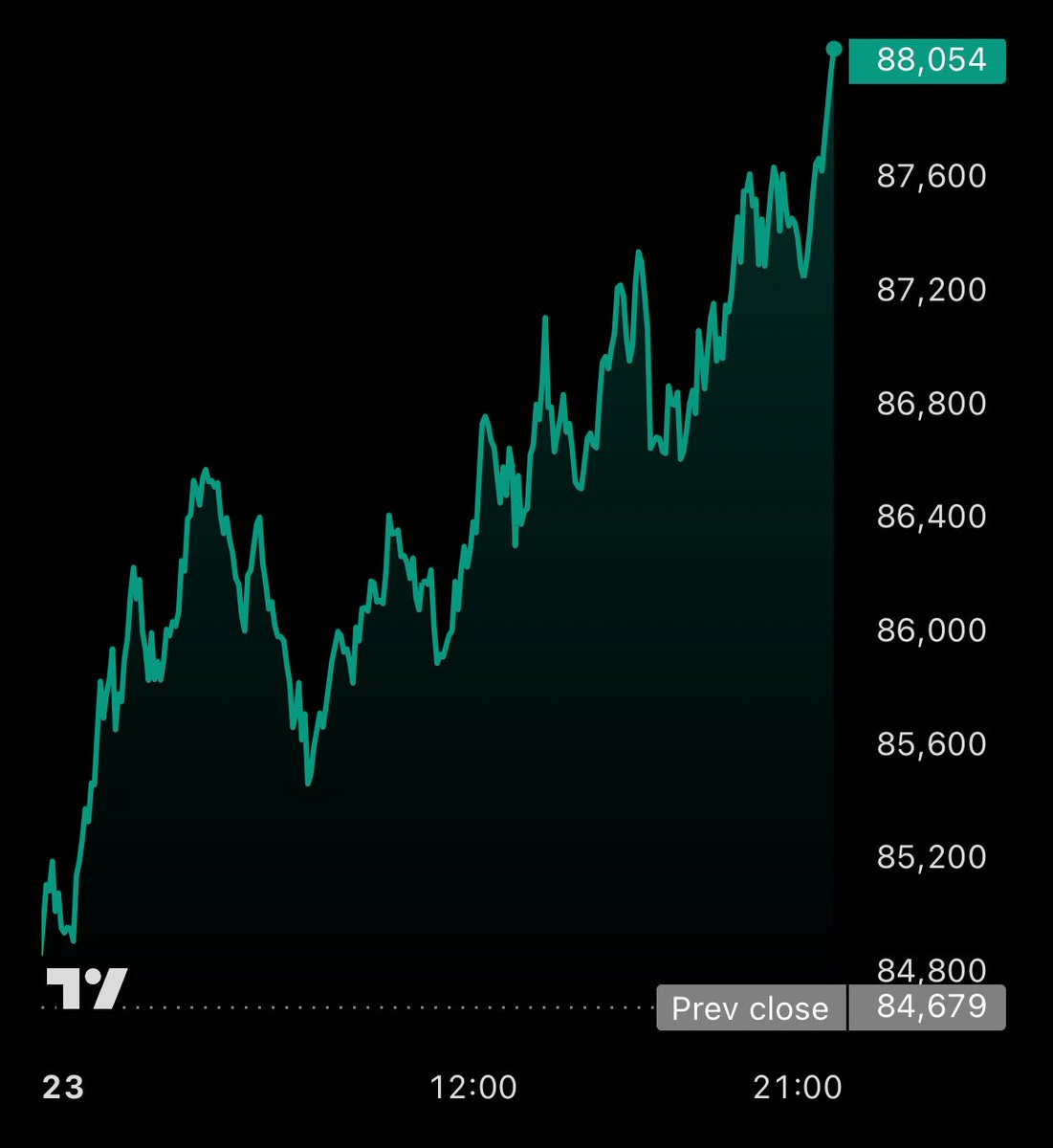

$BTC almost hit 84K$

I’m expecting a reversal to 125K$ in the coming days

Crypto GVR@GVRCALLS

Will $BTC hit 84K$ for retest ? No please 😭😭

txpilgrim retweeted

💎 $COAI distribution is now open!

ChainOpera AI holders can now claim their newly allocated $COAI tokens. Take part in the AI-driven ecosystem and get your rewards.

🔗 distribution-ChainOperaAI.com

#COAI #ChainOperaAI #分发 #加密奖励 #Web3AI

txpilgrim retweeted