Rohit Tyagi

@tyagiro31

AI Engineer @zonkolabs

haha a reminder that in the AI world we are just reusing a lot of terms that were already defined in the software engineering world. For example: compaction is a term from LSM trees, where it merges sorted files, drops overwritten values and tombstones (deletion markers), and rearranges data for faster reads, which is crucial for keeping the database healthy and efficient. DO NOT FORGET THE ROOTS!! #systemdesign #softwareengineering
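To make the LSM-tree sense of compaction concrete, here is a minimal sketch of the idea. It assumes each sorted file is just an in-memory list of `(key, value)` pairs ordered oldest to newest. The names `TOMBSTONE` and `compact` are hypothetical and not taken from any real database.

```python
# Hypothetical sentinel marking a deleted key (a "tombstone").
TOMBSTONE = object()

def compact(runs):
    """Merge sorted runs (ordered oldest first) into one sorted run.

    Newer values win over older ones, and keys whose latest
    entry is a tombstone are dropped from the output.
    """
    latest = {}
    for run in runs:                 # later (newer) runs overwrite earlier ones
        for key, value in run:
            latest[key] = value
    # Emit surviving keys in sorted order, skipping deleted ones.
    return [(k, latest[k]) for k in sorted(latest) if latest[k] is not TOMBSTONE]

old_run = [("a", 1), ("b", 2), ("c", 3)]
new_run = [("b", 20), ("c", TOMBSTONE), ("d", 4)]
merged = compact([old_run, new_run])
```

Here `merged` keeps `a`, takes the newer value for `b`, drops `c`, and adds `d`. Real engines stream the merge from disk instead of building a dictionary, and they can only discard a tombstone once no older file might still hold that key.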

We just shipped tons of new products to accelerate the full agent development lifecycle: langchain.com/blog TLDR: ✅ LangSmith Engine ✅ SmithDB ✅ Sandboxes ✅ Managed Deep Agents ✅ LLM Gateway ✅ Context Hub ✅ Deep Agents 0.6

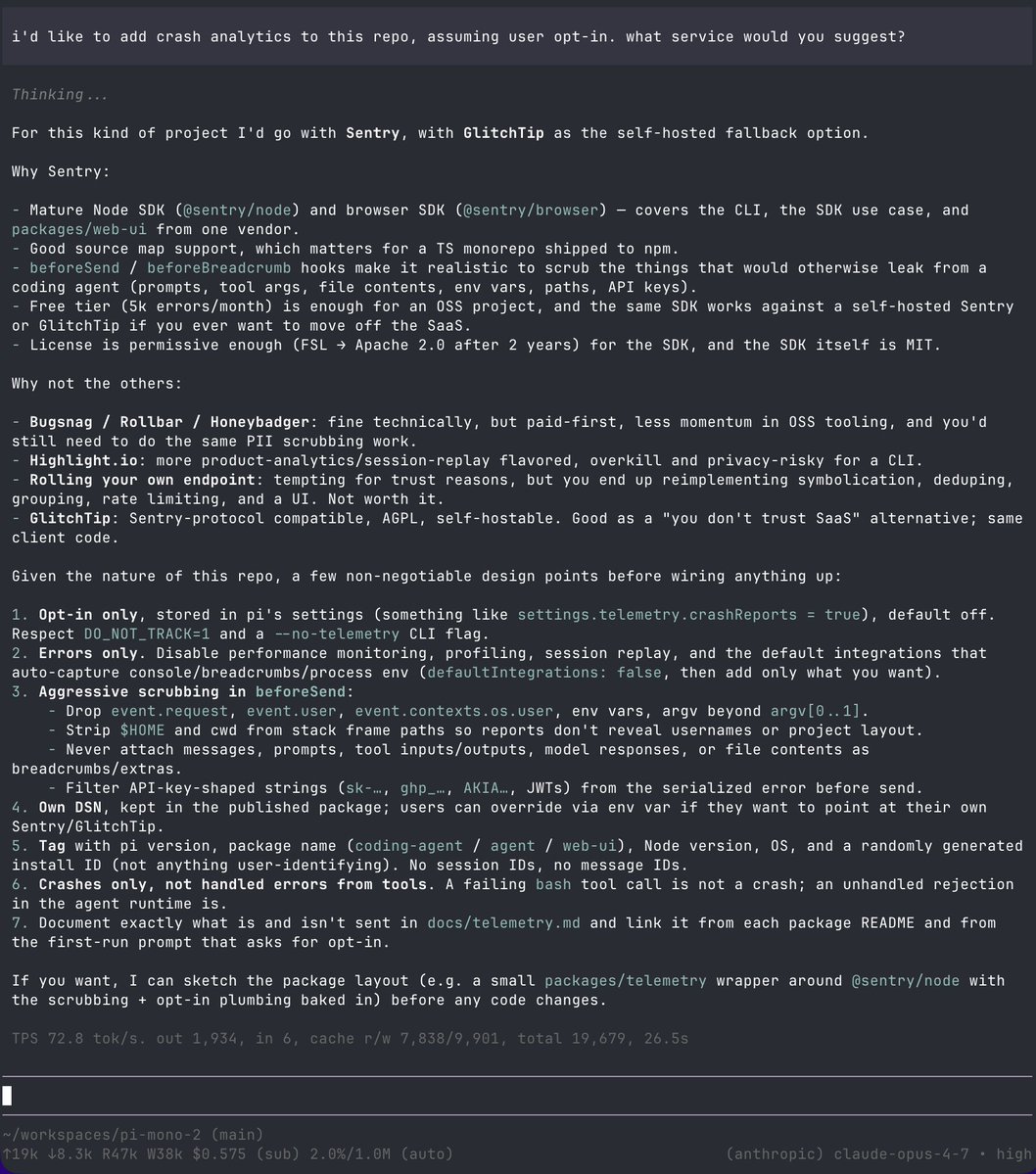

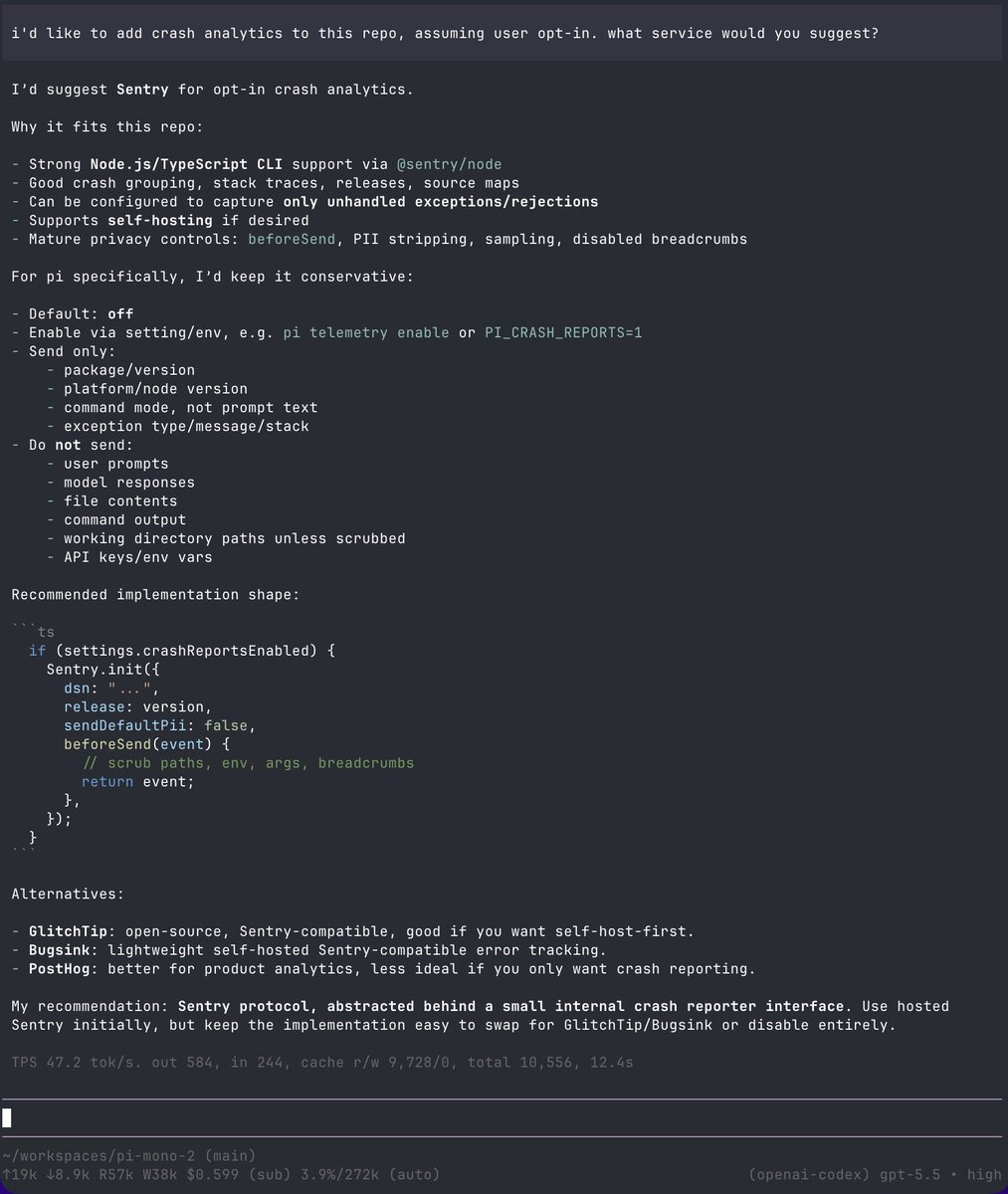

Sentry has almost doubled paid customer growth this year. We’re pretty saturated amongst most real companies so my only explanation is market expansion. I’m really curious how this plays out as we do not grow via the influx of slop. You don’t use Sentry unless you ship to prod.