@TheZvi Gemini 3.5 Flash is even more sycophantic than its predecessor; benchmarked here:

typebulb.com/u/lab/you-re-a…

typebulb

@typebulbit

Build Apps That Think https://t.co/LQUdYhMXjK or npx typebulb

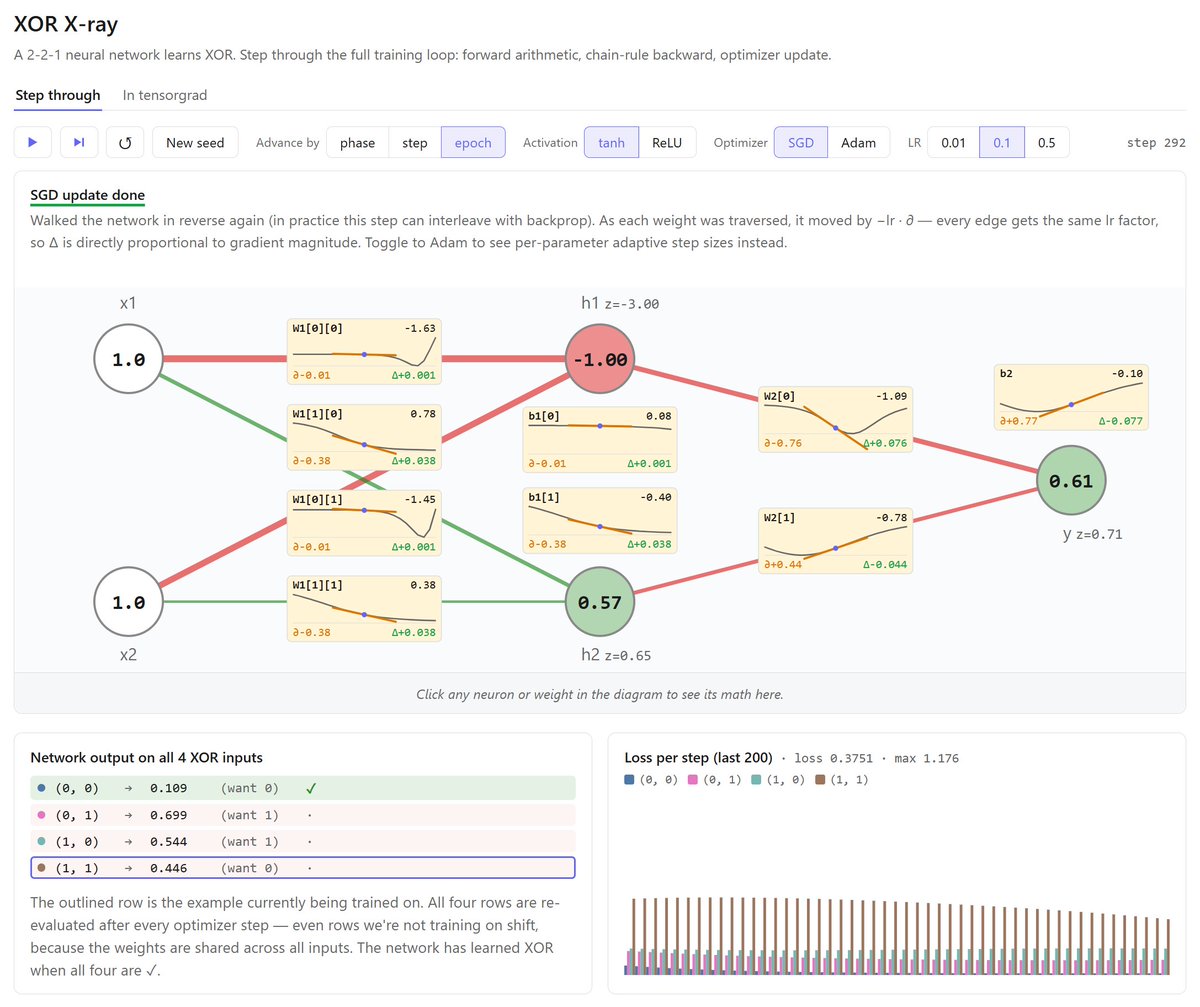

PAINFULLY close to solving this one, but I'm stuck... The fact that every bar needs 6(!!!) neighbors is extremely restrictive. I'm reasonably confident it's solvable, but I might need to ~start over.

i just generated an image in the style of a Monet painting using AI. please describe, in as much detail as possible, what makes this inferior to a real Monet painting