Uzay Macar

30 posts

@uzaymacar

Researcher and entrepreneur

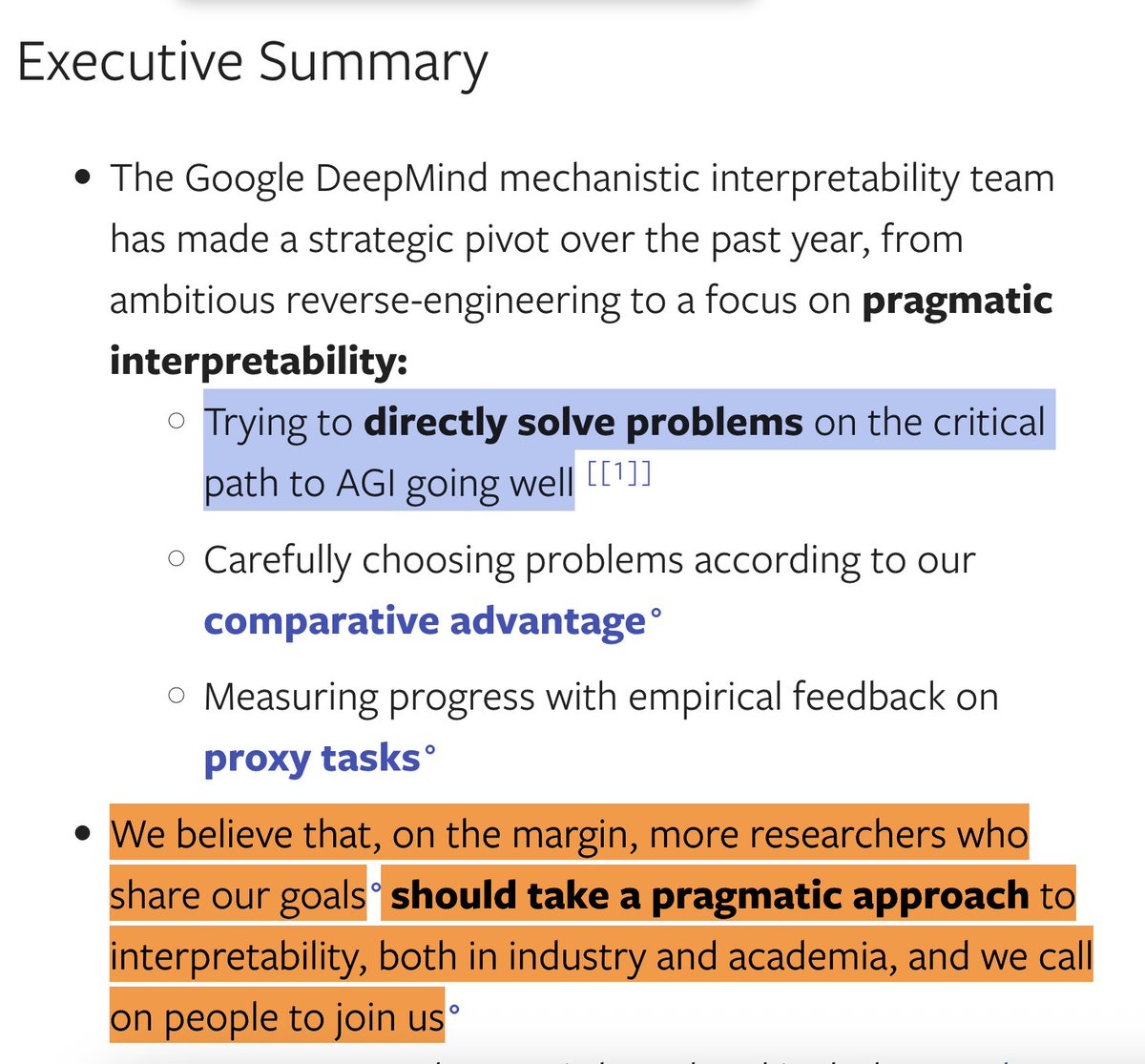

New Anthropic Fellows research: the Assistant Axis. When you’re talking to a language model, you’re talking to a character the model is playing: the “Assistant.” Who exactly is this Assistant? And what happens when this persona wears off?

To build safer AI, we need to understand how models “think”. 🧠 Enter Gemma Scope 2, a new set of tools to interpret Gemma 3, our family of lightweight open models. It can help researchers trace internal reasoning, debug complex behaviors and identify risks → goo.gle/gemma-scope-2

New paper: You can’t interpret LLM reasoning from one chain-of-thought. You must study a distribution of possible trajectories! Repeated sampling reveals: self-preservation doesn’t drive LLM blackmail, unfaithful reasoning reflects a biased path, & resampling steers behavior. 🧵

Can you steer reasoning by editing chain-of-thought? It depends. Off-policy edits, such as inserting handwritten sentences or text from other models, usually fail to affect behavior. On-policy resampling, where you resample until the model itself produces a sentence close to the one you want, reliably shapes behavior.