V3rba

287 posts


@v3rbaa

Senior Software Engineer | Building AI agents

Joined December 2017

20 Following, 19 Followers

Pinned Tweet

Y Combinator: "you can build a startup without technical skills"

Here's the setup that actually makes that true. Not theory, just the config

Save this. Set it up this weekend. You'll wish you'd done it sooner

V3rba @v3rbaa


> Now you don't even need to know how to code to make money

> Find a few small software businesses

> Set up an AI engineering agent for them

> Charge $5,000+ for each

> Spend only one weekend on it

> Bookmark this and implement it right now

V3rba @v3rbaa


Everyone keeps saying "don't use Supabase, it doesn't scale"

But Supabase + Vercel is the only combo that gives you fully independent deployments where your AI agent can develop, deploy, and test without touching production

Here's my full stack and what it actually costs:

> Supabase for backend + database

The reason I picked it: database branching

Every feature branch gets its own isolated Postgres

Claude works on 5 features at once, each with its own database

Nothing touches production until the PR merges

It also gives you auth, row-level security, edge functions, real-time, and pgvector out of the box

> Next.js + Vercel for frontend + deployment

Every push creates a preview URL

Claude opens it in Chrome, checks if it looks right, fixes what's broken, redeploys

Plus you get analytics, error tracking, and logs on every plan including free

> Stripe for payments

It has an MCP server, so Claude can create plans, set up checkout, and handle webhooks, all from one prompt

> Resend for email. 3,000 emails/month free

Claude gets the API right on the first try, every time

The full cost:

> Vercel: $0-20/mo

> Supabase: $0-25/mo

> Stripe: $0/mo + 2.9% + 30¢ per successful card charge (standard US pricing)

> Resend: $0

> Claude Max: $100/mo

Total to start: $100/mo. Everything else on free tiers
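A rough way to sanity-check these numbers yourself, as a minimal Python sketch. The plan prices come from the list above; the Stripe fee is assumed to be 2.9% + $0.30 per card charge (Stripe's standard US card pricing, which varies by country and payment method), and the revenue and charge counts in the examples are made up.

```python
from dataclasses import dataclass


@dataclass
class StackCosts:
    """Fixed monthly plan prices in USD (figures from the post)."""
    vercel: float = 0.0        # $0 free tier, $20 Pro
    supabase: float = 0.0      # $0 free tier, $25 Pro
    resend: float = 0.0        # free tier covers 3,000 emails/month
    claude_max: float = 100.0  # the one fixed cost to start

    def fixed(self) -> float:
        return self.vercel + self.supabase + self.resend + self.claude_max


def stripe_fees(revenue: float, charges: int,
                rate: float = 0.029, per_charge: float = 0.30) -> float:
    """Processing fees for `charges` card payments totalling `revenue` USD.
    The 2.9% + $0.30 defaults are an assumption; check your own Stripe pricing."""
    return revenue * rate + charges * per_charge


def monthly_total(costs: StackCosts, revenue: float = 0.0, charges: int = 0) -> float:
    return costs.fixed() + stripe_fees(revenue, charges)


print(monthly_total(StackCosts()))                        # 100.0 (everything on free tiers)
print(monthly_total(StackCosts(vercel=20, supabase=25)))  # 145.0 (both paid tiers)
print(round(monthly_total(StackCosts(), 1000.0, 50), 2))  # 144.0 (100 + 29 + 15 in fees)
```

The point it makes: the fixed bill stays flat, and Stripe is the only line that grows with revenue.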

Every tool here has an MCP server that Claude connects to directly

Claude doesn't just write code, it deploys, tests, monitors, and manages payments without you opening a single dashboard

V3rba @v3rbaa


What life looks like when you stop sitting at your computer all day

And start doing everything from your phone with Claude

V3rba @v3rbaa


While this bro spent $250,000 on Roblox (he still can't believe it)

You can make millions of dollars by becoming a Roblox creator

Dep @0xDepressionn


The term "prompt engineering" is misleading, and that's why people dismiss it

It sounds like some niche technical skill, but in fact it's just structured communication

You learn to:

> tell AI who it should be

> give it the right context

> define exactly what you need

> show examples of what good looks like
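Here's that four-part structure as a tiny Python helper, just to make it concrete. `build_prompt` and its section labels are made up for illustration; there's no required format. The point is that role, context, task, and examples each get stated explicitly instead of being left for the model to guess.

```python
def build_prompt(role: str, context: str, task: str, examples: list[str]) -> str:
    """Join the four parts (who, context, what, what good looks like) into one prompt."""
    sections = [
        f"You are {role}.",
        f"Context:\n{context}",
        f"Task:\n{task}",
    ]
    if examples:  # optional, but usually the most concrete signal you can give
        shown = "\n".join(f"- {ex}" for ex in examples)
        sections.append(f"Examples of what good looks like:\n{shown}")
    return "\n\n".join(sections)


# Compare with the vague version: "write a product update"
structured = build_prompt(
    role="a B2B SaaS copywriter writing for technical buyers",
    context="We shipped SSO and audit logs this week. Readers are IT admins.",
    task="Write a 120-word product update email with one clear call to action.",
    examples=["Subject: SSO is live. Set it up in 5 minutes"],
)
print(structured)
```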

That's not engineering. That's what good managers do with people every day

That's also why so many engineers get it wrong: they treat it as a technical trick instead of communication

The difference is most people skip all of this. They type a vague sentence, get a vague answer, and blame the tool

If you use AI for work and haven't spent a weekend learning this, you're leaving a lot on the table

Anatoli Kopadze @AnatoliKopadze


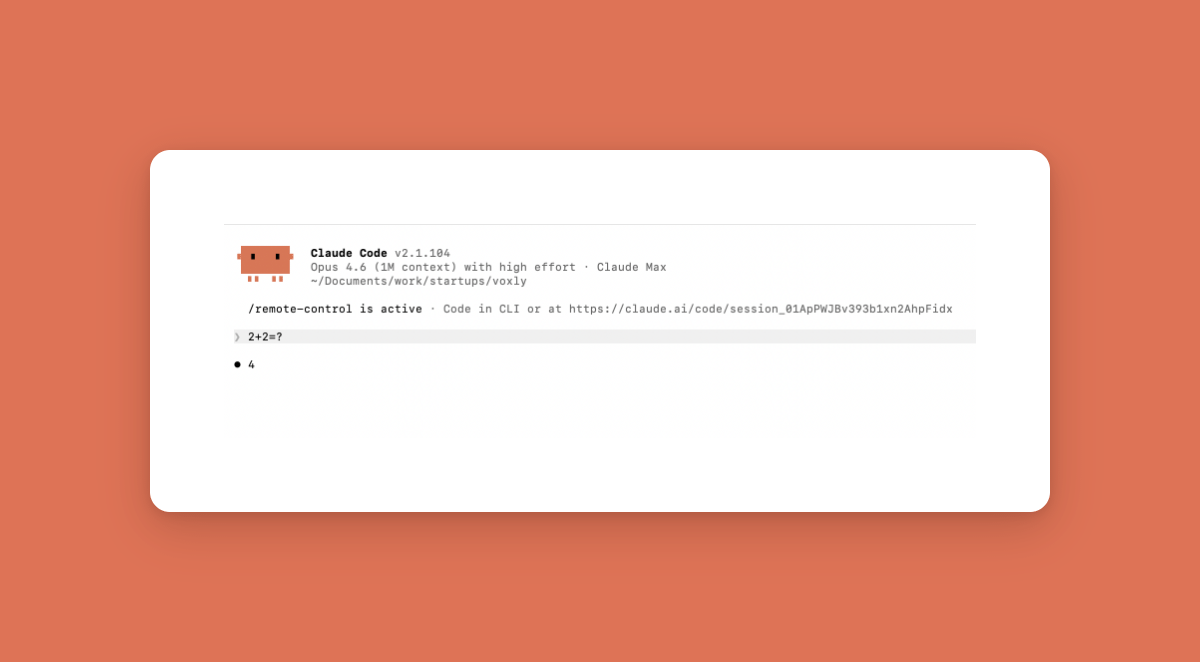

Now everyone tries to use AI agents where a simple code solution would do

You need to calculate 2+2? - USE AI

You need to find the most liked post from your feed? - USE AI

You need to validate an email? - USE AI

You need to sort a list by date? - USE AI

Ngl, most of these don't need AI at all
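For contrast, here's what each of those looks like as plain Python: no model call, effectively instant, same answer every time. The post shape (a `likes` field, a `date` field) is a hypothetical stand-in for whatever your feed returns, and the email check is a deliberately loose sanity check, not full RFC 5322 validation.

```python
import re
from datetime import date


def add(a: int, b: int) -> int:
    return a + b


def most_liked(posts: list[dict]) -> dict:
    # Hypothetical post shape: each dict has an integer "likes" field.
    return max(posts, key=lambda p: p["likes"])


# Loose check: something@something.something, no spaces, exactly one "@".
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def is_valid_email(s: str) -> bool:
    return EMAIL_RE.fullmatch(s) is not None


def sort_by_date(items: list[dict]) -> list[dict]:
    # Hypothetical item shape: each dict has a datetime.date under "date".
    return sorted(items, key=lambda item: item["date"])


posts = [
    {"title": "b", "likes": 12, "date": date(2024, 5, 1)},
    {"title": "a", "likes": 40, "date": date(2023, 1, 1)},
]
print(add(2, 2))                                  # 4
print(most_liked(posts)["title"])                 # a
print(is_valid_email("dev@example.com"))          # True
print([p["title"] for p in sort_by_date(posts)])  # ['a', 'b']
```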

Here's what you're actually paying for, and how to pick the right tool:

> Latency: a basic function runs in 50ms. An agent call? 1-3 seconds. Your users feel that, trust me

> Cost: an agent can cost $0.01-0.10 per call. A simple function costs basically nothing. Now multiply that by thousands of users and yeah, it adds up fast

> Reliability: if your agent is 85% accurate per step, a 5-step workflow only succeeds 44% of the time (0.85^5 ≈ 0.44, assuming steps fail independently). Regular code? 100%. Every time
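The reliability number is just compounding: if each step succeeds independently with probability p, all n steps succeed with probability p^n. A quick Python check of that, plus the cost multiplication; the $0.05 per call, 30 calls per user, and 10,000 users are hypothetical numbers picked from inside the post's $0.01-0.10 range.

```python
def workflow_success(per_step: float, steps: int) -> float:
    """Chance that every step succeeds, assuming steps fail independently."""
    return per_step ** steps


def monthly_agent_cost(cost_per_call: float, calls_per_user: int, users: int) -> float:
    """Total monthly spend on agent calls (all inputs hypothetical)."""
    return cost_per_call * calls_per_user * users


print(f"{workflow_success(0.85, 5):.1%}")   # 44.4%
print(f"{workflow_success(0.99, 5):.1%}")   # 95.1%: even 99% per step loses ~1 run in 20
print(f"${monthly_agent_cost(0.05, 30, 10_000):,.0f}")  # $15,000
```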

You're literally adding complexity, cost, and random failures to smth that was already solved

Before reaching for an agent, ask yourself:

> Does this task have clear inputs and predictable outputs?

> Can I define the exact steps?

> Would a wrong answer break things?

If yes to all three, just write code. Not a prompt

Here's how I think about picking the right tool:

Use simple code when:

> Math, sorting, filtering

> Fixed rules (if X then Y)

> Data transformations with known formats

> API calls with predictable responses

Use a small classifier model when:

> Categorizing content into known groups

> Spam or toxicity detection

> Sentiment analysis

> Routing requests to the right handler

These are 10x cheaper, 10x faster, and way more reliable than a full agent. Idk why more people don't talk about this

Use an actual AI agent only when:

> The task needs reasoning over messy, unstructured data

> The right answer depends on context that changes every time

> You literally cannot write down all the rules
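One way to boil that guide down to code, as a rough Python sketch. The two yes/no inputs are an assumed simplification of the checklist, not something the post specifies: if you can write the rules down it's code, if the answer is one of a fixed set of labels it's a classifier, and only what's left goes to an agent.

```python
from enum import Enum


class Tool(Enum):
    CODE = "simple code"
    CLASSIFIER = "small classifier model"
    AGENT = "AI agent"


def pick_tool(can_write_rules: bool, output_is_known_label: bool) -> Tool:
    # Math, sorting, filtering, fixed if-X-then-Y rules, known formats.
    if can_write_rules:
        return Tool.CODE
    # Spam/toxicity, sentiment, routing: the answer is one of a few known groups.
    if output_is_known_label:
        return Tool.CLASSIFIER
    # Messy input, context-dependent answers, rules you can't write down.
    return Tool.AGENT


for task, can_write_rules, known_label in [
    ("sort a list by date", True, False),
    ("flag spam comments", False, True),
    ("summarize a messy support thread", False, False),
]:
    print(f"{task}: {pick_tool(can_write_rules, known_label).value}")
```

The order of the checks is the whole design: the cheapest tool that can do the job gets asked first.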

Stripe figured this out early. They use what they call "blueprints," a mix of deterministic code and small agent loops. The LLM only kicks in when you actually need judgment. Everything else is just regular code

90% deterministic. 10% agent. That's the ratio that actually works in production
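To make the "mostly deterministic, a little agent" idea concrete, here's a minimal Python sketch of that shape. It is not Stripe's blueprint format (the post doesn't show it); the refund rules are invented, and `judge` is a hypothetical stand-in for whatever LLM call you'd use. The model only sees the one step that needs judgment, and its answer is forced back into a fixed set of outcomes.

```python
from typing import Callable

# Hypothetical hook: takes free text, returns the model's one-word verdict.
Judge = Callable[[str], str]


def handle_refund(request: dict, judge: Judge) -> str:
    # Deterministic steps: validation and fixed business rules (invented here).
    amount = request["amount"]
    if amount <= 0:
        return "reject: invalid amount"
    if request["days_since_purchase"] > 30:
        return "reject: outside refund window"
    if amount < 20:
        return "approve: small refund, no review needed"

    # Judgment step: only the unstructured customer message goes to the model.
    verdict = judge(request["reason"]).strip().lower()

    # Never trust free-form output: map it onto known outcomes, default to a human.
    if verdict == "approve":
        return "approve: model judgment"
    return "escalate: human review"


def fake_judge(text: str) -> str:
    # Stand-in so the sketch runs without any model.
    return "approve" if "broken" in text else "unsure"


print(handle_refund({"amount": 10, "days_since_purchase": 3, "reason": ""}, fake_judge))
print(handle_refund({"amount": 80, "days_since_purchase": 3, "reason": "arrived broken"}, fake_judge))
```

Most requests never reach `judge` at all, which is where the latency and cost savings come from.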

The real skill in building AI products is knowing when NOT to use AI


everyone's talking about Claude replacing developers

but nobody's talking about it replacing your entire content team

This guy just dropped a full breakdown of how to build your own AI content engine:

> 17 markdown files + 1 AI agent = 10 platforms on autopilot

> no content team, no $8k/mo agency, no manual writing

tldr of his system:

> build a "skill graph": a folder of interconnected .md files that teach the AI your voice, audience, platforms, and hook formulas

> set up an index .md as the command center that tells the agent exactly how to execute

> create platform playbooks (x, linkedin, ig, tiktok, youtube, newsletter, threads, facebook) with native rules for each

> define your brand voice + how it adapts per platform

> build a repurposing chain: 1 idea → write for X first → rethink (not reformat) for each platform

> upload everything to a Claude Project and just give it topics
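The post runs this inside a Claude Project, but the same chain can be scripted. A minimal Python sketch, assuming one .md file per skill-graph node named after what it covers (`index.md`, `voice.md`, `audience.md`, `hooks.md`, plus one per platform; these file names are hypothetical), with `generate` standing in for a model call. X gets written first, then each other platform gets a rethink prompt built from the X draft plus that platform's playbook.

```python
from pathlib import Path
from typing import Callable

# The eight platforms named in the post; the file names are hypothetical.
PLATFORMS = ["x", "linkedin", "ig", "tiktok", "youtube", "newsletter", "threads", "facebook"]
SHARED = ["index", "voice", "audience", "hooks"]


def load_skill_graph(folder: Path) -> dict[str, str]:
    """Read every .md file in `folder`, keyed by file name without extension."""
    return {p.stem: p.read_text(encoding="utf-8") for p in sorted(folder.glob("*.md"))}


def run_chain(graph: dict[str, str], idea: str, generate: Callable[[str], str]) -> dict[str, str]:
    shared = "\n\n".join(graph.get(name, "") for name in SHARED)

    # Step 1: write for X first.
    x_post = generate(f"{shared}\n\n{graph.get('x', '')}\n\nWrite an X post about: {idea}")
    posts = {"x": x_post}

    # Step 2: rethink (not reformat) the idea for every other platform.
    for platform in PLATFORMS[1:]:
        posts[platform] = generate(
            f"{shared}\n\n{graph.get(platform, '')}\n\n"
            f"Here is the X version:\n{x_post}\n\n"
            f"Rethink this idea for {platform}: new angle, native format, native hook. "
            "Do not just reformat the X post."
        )
    return posts


# Fake model so the sketch runs offline; a real graph would come from load_skill_graph.
calls = []
def fake_generate(prompt: str) -> str:
    calls.append(prompt)
    return f"draft #{len(calls)}"

posts = run_chain({"voice": "Plain, direct."}, "why agents fail", fake_generate)
print(len(posts), posts["x"])  # 8 draft #1
```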

the key insight: it's not 10 copies of the same post reformatted. it's 10 posts that each THINK about the topic differently

go build it this weekend

Ronin @DeRonin_


me looking at my $100 stock portfolio after 10 years of ‘investing’ while this guy already has a full business plan to make $100K off a game that hasn’t even dropped yet

Dep @0xDepressionn


Me walking Claude through the task once while Claude just sits there about to do it better than me every day for the rest of my life

V3rba @v3rbaa


Claude Code just got smarter about looping

Before: you set a fixed interval and Claude checks on a timer, even when nothing has changed

Now: you run /loop without an interval and Claude figures out when to check next based on the task

Running a 2-hour build? It won't ping every 30 seconds. Waiting on a quick lint check? It'll come back fast

It can also use the Monitor tool to skip polling completely. Instead of checking over and over, it watches for the event directly

This is one of those small changes that makes a big difference in practice. Less noise, less wasted compute, and you stop babysitting background tasks

/loop check CI on my PR just works now
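For intuition, here's the difference between adaptive polling and event-driven waiting in plain Python. This is not how Claude Code implements /loop or the Monitor tool (neither is described here); `next_interval` is one arbitrary heuristic (check again after half the expected remaining time, clamped between 5 seconds and 10 minutes), and the last part shows the event-driven alternative, where you block on a signal instead of polling.

```python
import threading
import time
from typing import Callable


def next_interval(expected_seconds: float, elapsed: float,
                  floor: float = 5.0, cap: float = 600.0) -> float:
    """How long to wait before the next check: half the expected remaining
    time, clamped to [floor, cap] seconds."""
    remaining = max(expected_seconds - elapsed, 0.0)
    return min(max(remaining / 2, floor), cap)


def poll_until(check: Callable[[], bool], expected_seconds: float,
               sleep: Callable[[float], None] = time.sleep) -> int:
    """Adaptive polling: returns how many checks it took."""
    start = time.monotonic()
    checks = 0
    while True:
        checks += 1
        if check():
            return checks
        sleep(next_interval(expected_seconds, time.monotonic() - start))


print(next_interval(2 * 3600, 0))  # 600.0: a 2-hour build is checked about every 10 minutes
print(next_interval(30, 0))        # 15.0: a quick lint gets checked again soon

# Event-driven: no polling at all, just block until something signals completion.
done = threading.Event()
threading.Timer(0.1, done.set).start()  # stands in for "CI finished"
print(done.wait(timeout=5))             # True, returned as soon as the event fired
```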

Noah Zweben @noahzweben

Claude now supports dynamic looping. If you run /loop without passing an interval, Claude will dynamically schedule the next tick based on your task. It also may directly use the Monitor tool to bypass polling altogether /loop check CI on my PR


Finally, those who were complaining that Claude wasn't doing anything about usage limits can be happy now

Claude has started working on it, and the first steps look promising

Claude @claudeai

We're bringing the advisor strategy to the Claude Platform. Pair Opus as an advisor with Sonnet or Haiku as an executor, and get near Opus-level intelligence in your agents at a fraction of the cost.
