Zhensu Sun

122 posts

Zhensu Sun

@v587su

A Ph.D. student @sgSMU. My research focuses on improving coding productivity with AI techniques.

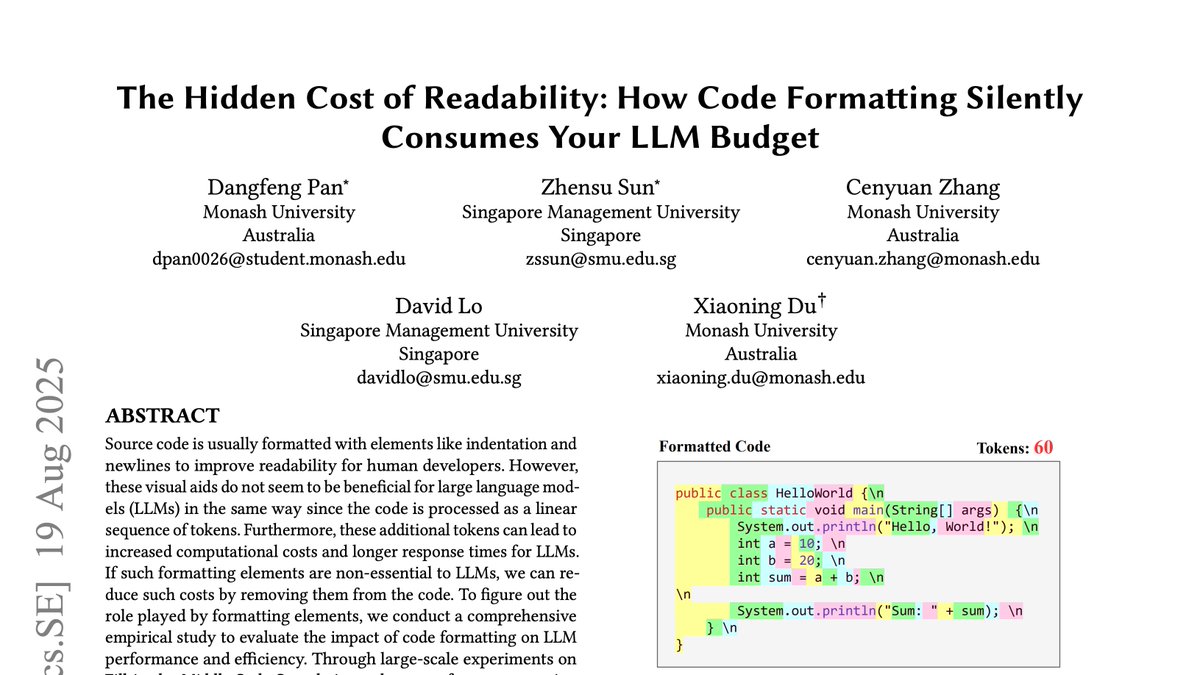

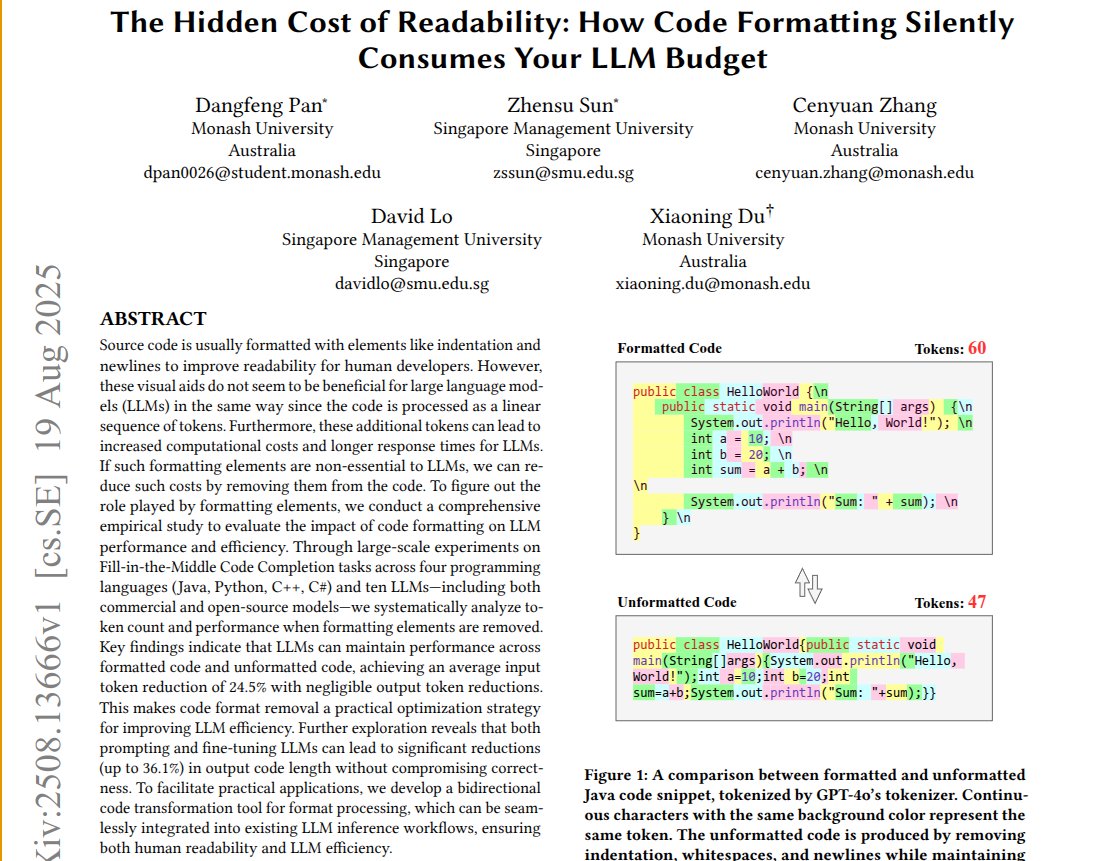

today most interesting paper: CodeOCR This work provides a good explanation of how code indentation and highlighting are designed to serve the human eye. arxiv.org/pdf/2602.01785

The humanoid robot half-marathon in Beijing just started!

We are thrilled to announce that #MSR2025 Foundational Contribution Award 🏆💫goes to David Lo @davidlo2015 for pioneering, influential, and lasting contributions to transforming bug and test data into insights and automation that improve software quality and productivity.

Unitree B2-W Talent Awakening! 🥳 One year after mass production kicked off, Unitree’s B2-W Industrial Wheel has been upgraded with more exciting capabilities. Please always use robots safely and friendly. #Unitree #Quadruped #Robotdog #Parkour #EmbodiedAI #IndustrialRobot #InspectionRobot #IntelligentRobot #FoundationModels #LeggedRobot #WheeledLegs