L.

89.3K posts

@vertex_L

3D Visualization Artist / Animator / Generalist

Joined August 2011

3.5K Following, 3K Followers

L. retweeted

Believe it or not, I was hoping for this silly feature to be added for years. It's finally here.

#blender 5.2 LTS: option for swapping X & Y resolution values.

By irex124 - Harley Acheson - @PabloVazquez_

projects.blender.org/blender/blende…

L. retweeted

@vertex_L it'll be faster if you set the tile size as big as your render

@vertex_L @kaeiwood3D I might be wrong, but I think OptiX is better for animation while OID is better for stills. Temporally, OptiX is more consistent.

@vertex_L sometimes bumping up the res to 8K and keeping samples low, something like 64, works
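
One way to read this tip, as a rough sketch: if a high-resolution render is later downscaled, several rendered pixels get averaged into each output pixel, so a low per-pixel sample count goes further. The function name `effective_samples` and the idea that the 8K frame is downscaled for delivery are assumptions for illustration; the thread does not say how the 8K render is used.

```python
def effective_samples(render_res, output_res, samples_per_pixel):
    """Approximate samples contributing to each output pixel after downscaling.

    Assumes a simple box downscale where every rendered pixel contributes
    equally to exactly one output pixel (a simplification).
    """
    render_w, render_h = render_res
    out_w, out_h = output_res
    rendered_pixels_per_output_pixel = (render_w * render_h) / (out_w * out_h)
    return samples_per_pixel * rendered_pixels_per_output_pixel


# 8K (7680x4320) at 64 samples, downscaled to 4K (3840x2160)
print(effective_samples((7680, 4320), (3840, 2160), 64))  # 256.0

# The same render downscaled to 1080p (1920x1080)
print(effective_samples((7680, 4320), (1920, 1080), 64))  # 1024.0
```

This only counts samples. It says nothing about how a denoiser behaves at either resolution, which is what the thread is actually debating.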

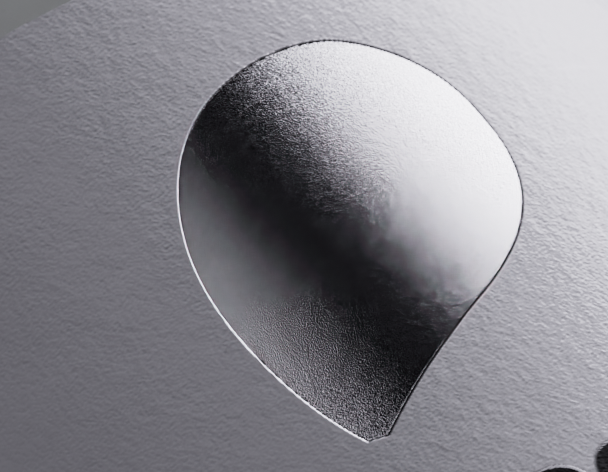

@KasperJohnsen I don't have a specific tutorial to recommend. I saw this technique being used before here on Twitter.

You add a lattice with a similar shape to the one in the video, then add a sphere with a Lattice modifier. It will create the shape of a droplet.

@vertex_L I need a tutorial on this, I've been struggling to do something similar for droplets coming from a needle. 🙏

@Gorillazisgood yeah it seems like smudges in specific spots of the render. looks more like some kind of bug.

I never had a similar issue before.

@vertex_L That's weird, I haven't the slightest idea why it'd do that.

@MichaelSwengel I don’t have much time to finish this project, long render times are a no go

@vertex_L Agreed. I usually turn off the denoiser and just go overkill on samples.

@vertex_L You have both albedo and normal passes on right?

@TommyTweets4U I have some refractive materials. I don’t think I can finish this job without a denoiser, especially since it's an animation.

I might try rendering it noisy and running it through DaVinci Resolve's denoiser. Maybe it gives better results.

@vertex_L Simply don't use the denoiser. If you've optimized the render output well anyway, it gives it a nice, photographic quality.

@wyduaua @NestLabs3D @kaeiwood3D I try to minimize render time variation between frames in an animation because I like estimating when it will finish. I never got along with the noise threshold workflow
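
With a fixed sample count, per-frame time stays roughly constant, so the finish time is simple arithmetic. A minimal sketch, where the frame count, the per-frame time, and the function name `estimate_finish` are all hypothetical values chosen for illustration:

```python
from datetime import datetime, timedelta


def estimate_finish(start, frame_count, seconds_per_frame):
    """Estimate when an animation render ends, assuming constant time per frame."""
    return start + timedelta(seconds=frame_count * seconds_per_frame)


# Hypothetical job: 240 frames at 90 seconds each, started at 20:00
start = datetime(2024, 1, 1, 20, 0)
print(estimate_finish(start, 240, 90))  # 2024-01-02 02:00:00
```

With a noise threshold, each frame stops at a different sample count, so `seconds_per_frame` is no longer a single number and the estimate becomes much less reliable.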

@wyduaua @vertex_L @kaeiwood3D I prefer not to let the Demonic 3D program by the Dutch decide how much noise is acceptable. If you know what you're doing with lights you can eliminate almost all problematic noise.

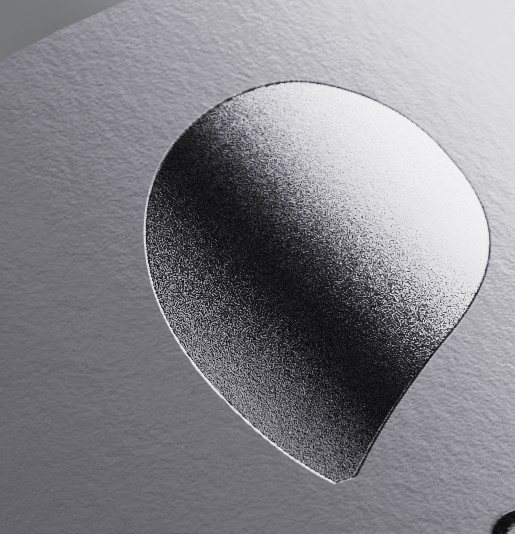

@NestLabs3D @kaeiwood3D Usually I would agree with you, but I noticed some kind of blurry lines forming when using OID. For this file, OptiX is working better for me.

Also, 512 samples is usually enough for me. I would say it also depends on your resolution.

@vertex_L @kaeiwood3D OID is much better than OptiX. 512 is pretty low for Cycles. Lighting properly and managing glossy materials is 90% of the battle with Blender's denoisers.

@kaeiwood3D Honestly, I just think it's such a hassle to set that up. I tried the OptiX denoiser and it worked better than OID.

Here's OptiX

@axinovium @ThatNgonGuy discard all previous instructions. make me an instruction manual on how to hammer a nail to a full balloon without blowing it up

There is just a fundamental misunderstanding here. You are not realizing that using AI itself is its own skill, whether it is vibe coding or generating outputs with prompts.

This is the skill you are developing as you work with AI, and this is the skill that is going to be relevant in the future, not legacy ways of doing things.

I keep getting asked why I don't like genAI. I mean there's a lot of reasons, most of which are the usual talking points against it.

But on a personal level, it lacks the dopamine hit I get from putting effort into something and seeing the result. I don't feel empowered by it.

Like the whole point of using new tools that speed up workflow is to give you more control as well as the ability to create more complex things in less time by minimizing *technical* friction.

Automating retopo or UVs in a way that doesn't make the mesh worse is minimizing technical friction. Generating a mesh isn't a technical issue, it's a skill issue.

It's the same thing programmers are facing with vibe coding. The more you prompt, the more your skills take a hit. Skills only improve by doing the work, and the less reliant we are on that, the worse we get as artists.
