Vincent Rajkumar

53.7K posts

@VincentRK

Editor-in-Chief, Blood Cancer Journal; Chairman @IMFmyeloma Board; Cancer & Myeloma Research; Opinions solely personal views https://t.co/HOGYJSpsoG

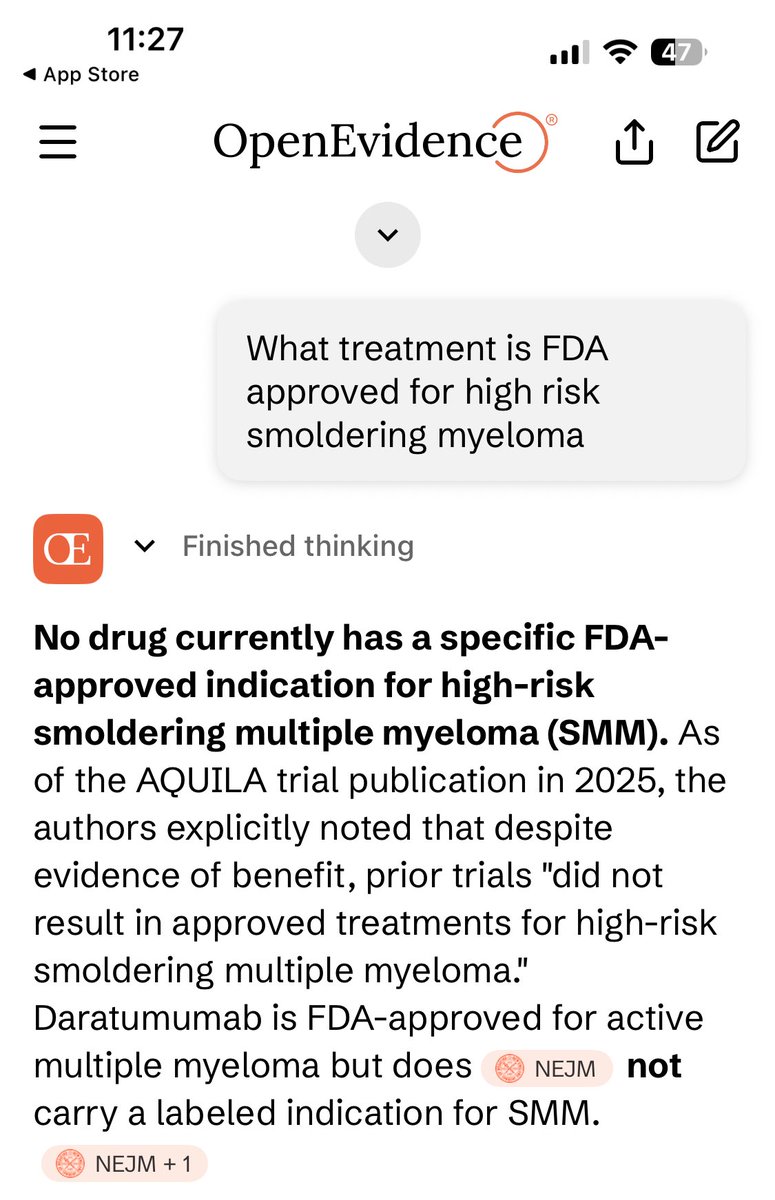

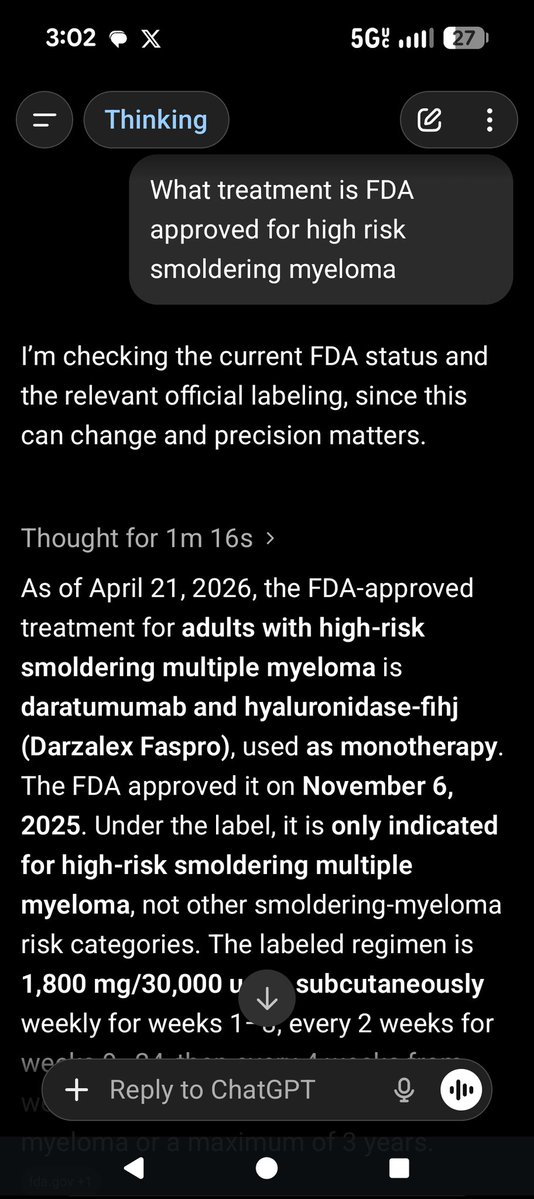

ChatGPT doesn’t know its whisk from its elbow

Leadership should not be mediocrity dressed up in titles. Leadership is vision, excellence, and the ability to inspire. Titles sometimes go to people who are compliant with those who appoint them rather than to those who are excellent and will challenge authority when needed. But we must always seek excellence in leadership.

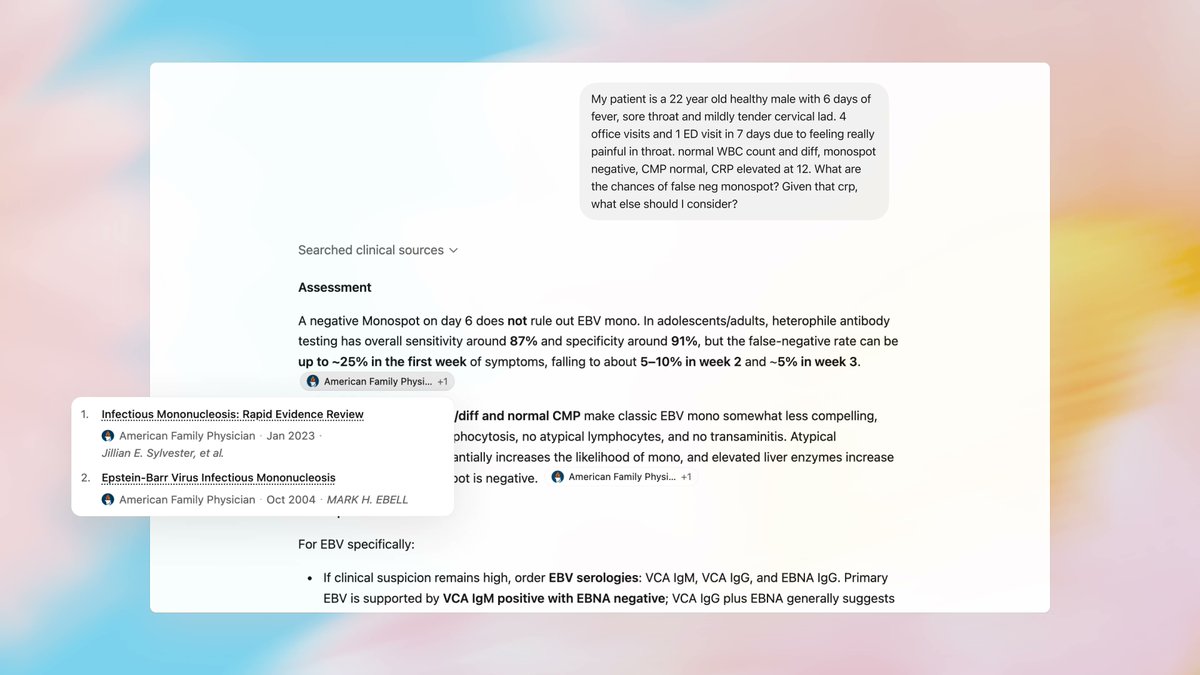

Today we’re introducing two big steps for health at OpenAI:

- ChatGPT for Clinicians, a free version of ChatGPT designed for clinical work
- HealthBench Professional, a new benchmark to evaluate real clinician chat tasks

We’re excited about what this can unlock for care. ❤️