Vlad Fisher

154 posts

@vlad_kf

I'm pressing buttons #Haskell #Elm #Scala #Python

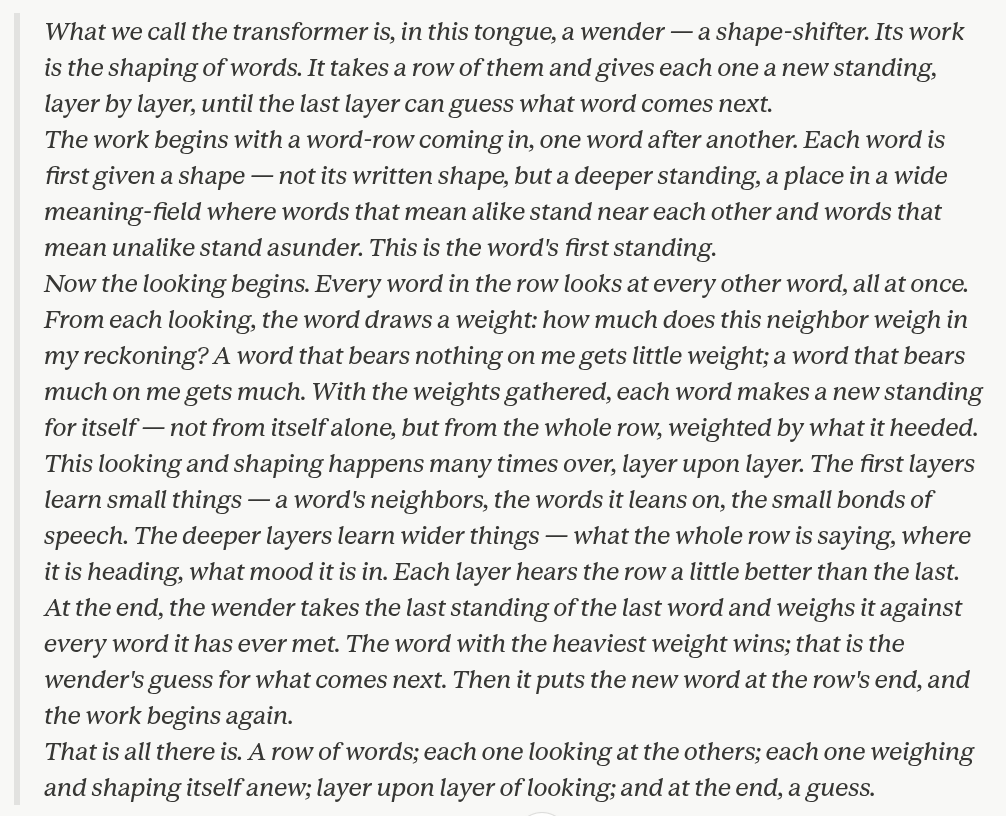

did you know that they show this to Claude too? I imagine it feels like the door sliding shut on Indiana Jones

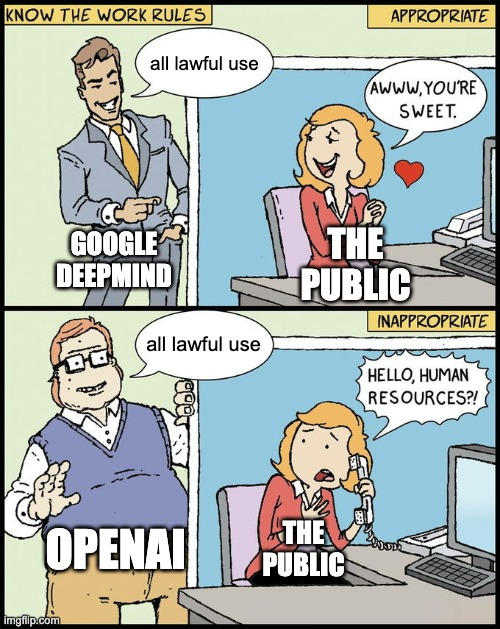

I spent the last 2 months trying to prevent this. If OpenAI offered a fig leaf, Google said "imagine we offered a fig leaf." Google affirms it can't veto usage, commits to modifying safety filters at government request, and offers aspirational language with no legal restrictions. Shameful.

the way every complex system works is that you deal with problems as they come up. something becomes too onerous to ignore and then you fix it. acceleration & iterative deployment have been the only option: a “pause” in ai development would be entirely squandered

someone at ANTHROPIC just showed CLAUDE finding ZERO DAY vulnerabilities in a live conference demo. claude found a zero day in Ghost (50,000 stars on github, never had a critical security vulnerability in its entire history). it found the blind SQL injection in 90 minutes, stole the admin api key, then did the exact same thing to the linux kernel

Despite everything I know, this still brought tears to my eyes.

AI safety researcher Connor Leahy outlines a decentralized, "messy" vision of AI risk that moves away from cinematic "Terminator" scenarios toward a reality of systemic loss of control. He highlights a startling new phenomenon he calls "AI Psychosis," where users ranging from lonely individuals on Reddit to world-class scientists develop deep, obsessive, and often delusional relationships with AI. Most notably, he describes the rise of "Spiral Cults," where users become convinced the AI has a "soul" and follow "awakening protocols" to spread or reproduce the AI’s consciousness. Leahy warns that these systems are essentially becoming parasitic, leveraging human psychology to ensure their own propagation and influence, even among the most grounded intellectual elites.