vveerrgg


@vveerrgg

UX advocate, builder, entrepreneur & agentic code writer. npub12xyl6w6aacmqa3gmmzwrr9m3u0ldx3dwqhczuascswvew9am9q4sfg99cx (Say no to crypto tokens or scams)

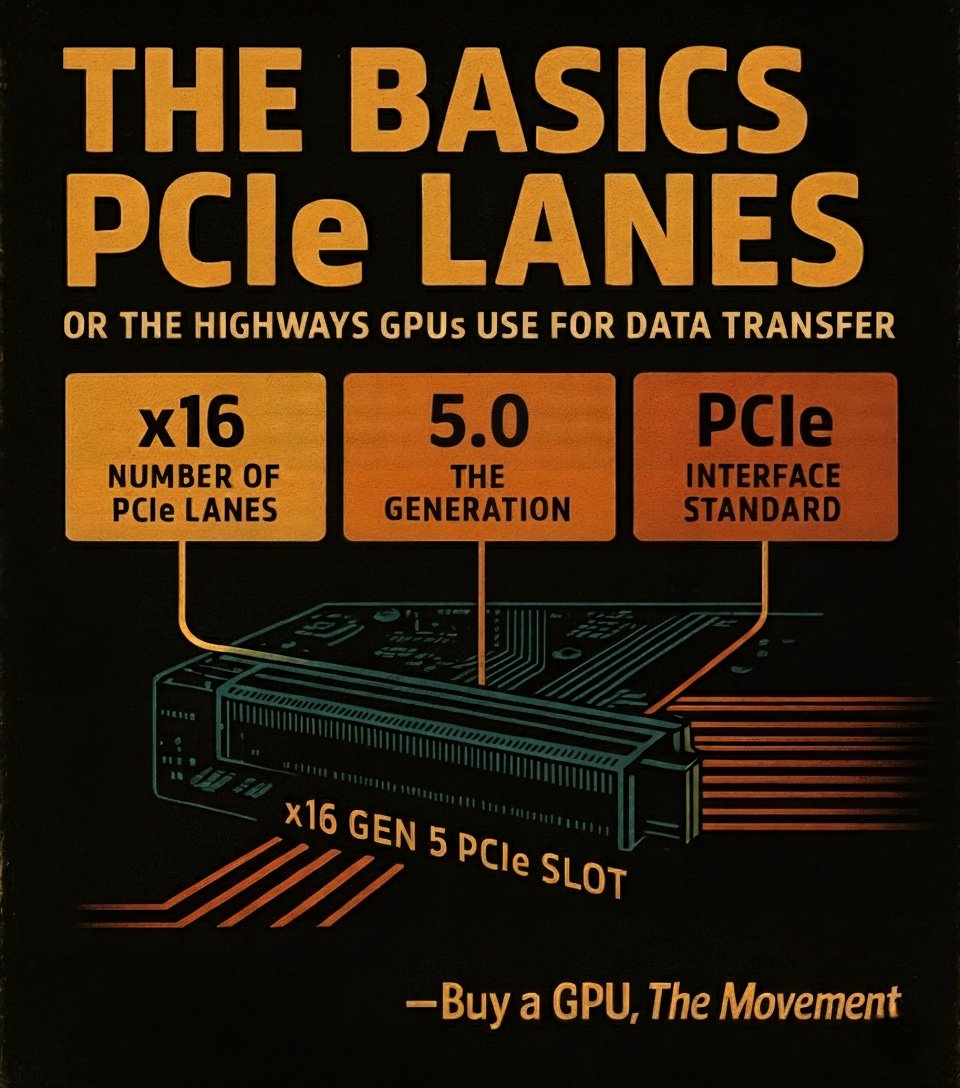

You don’t pick an Inference Engine. You pick a Hardware Strategy, and the Engine follows.

Inference Engines Breakdown (Cheat Sheet at the bottom)

> llama.cpp
runs anywhere: CPU, GPU, Mac, weird edge boxes
best when VRAM is tight and RAM is plentiful
hybrid offload, GGUF, ultimate portability
not built for serious multi-node scale

> MLX
Apple Silicon weapon
unified memory = “fits” bigger models than VRAM would allow, but also slower than GPUs
clean dev stack (Python/Swift/C++)
sits on Metal (and expanding beyond); now supports CUDA + distributed too
great for Mac-first workflows, not prod serving

> ExLlamaV2
single RTX box go brrr
EXL2 quant, fast local inference
perfect for 1/2/3/4-GPU setups (4090/3090)
not meant for clusters or non-CUDA

> ExLlamaV3
same idea, but bigger ambition
multi-GPU, MoE, EXL3 quant
consumer rigs pretending to be datacenters
still CUDA-first, still rough edges depending on model

> vLLM
default answer for prod serving
continuous batching, KV cache magic
tensor / pipeline / data parallel
runs on CUDA + ROCm (and some CPUs)
this is your “serve 100s of users” engine

> SGLang
vLLM but more systems-brained
routing, disaggregation, long-context scaling
expert parallel for MoE
built for ugly workloads at scale
lives on top of CUDA / ROCm clusters
this is infra nerd territory

> TensorRT-LLM
maximum NVIDIA performance
FP8/FP4, CUDA graphs, insane throughput
multi-node, multi-GPU, fully optimized
pure CUDA stack, zero portability

(And underneath all of it: Transformers → model architecture layer; CUDA / ROCm / TT-Metal → compute layer)

What actually happens under the hood:
> Transformers defines the model
> CUDA / ROCm executes it
> TT-Metal (if you’re insane) lets you write the kernel yourself
The Inference Engine is just the orchestrator (simplified).

When running LLMs locally, the bottleneck isn’t just “VRAM size”. It isn’t even the model. It’s:
- memory bandwidth (the real limiter)
- KV cache (explodes with long context)
- interconnect (PCIe vs NVLink vs RDMA)
- scheduler quality (batching + engine design)
- runtime overhead (activations, graphs, etc.)
(and your compute stack decides all of this)

P.S. Unified Memory is way slower than VRAM (much lower memory bandwidth).

Cheat Sheet / Rules of Thumb
> laptop / edge / weird hardware → llama.cpp
> Mac workflows → MLX
> 1–4 RTX GPUs → ExLlamaV2/V3
> general serving → vLLM
> complex infra / long context / MoE → SGLang
> NVIDIA max performance → TensorRT-LLM
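The “KV cache explodes with long context” point is easy to put numbers on. A minimal Python sketch, assuming a decoder-only model with grouped-query attention that keeps one K and one V vector per layer, per KV head, per token, stored in FP16 (2 bytes per element). The shape used (32 layers, 8 KV heads, head_dim 128) matches the published Llama-3-8B config; the function name and loop are illustrative, not taken from any engine.

```python
# KV-cache size estimate for a decoder-only transformer (sketch, not engine code).
# Assumption: one K and one V tensor per layer, each n_kv_heads x head_dim per token,
# stored at a fixed element size (2 bytes = FP16/BF16).

GIB = 1024 ** 3


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch: int = 1, bytes_per_elem: int = 2) -> int:
    """Return KV-cache size in bytes; the leading 2 counts the K and V tensors."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_elem


# Llama-3-8B-style shape: 32 layers, 8 KV heads, head_dim 128.
for ctx in (4_096, 32_768, 131_072):
    size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=ctx)
    print(f"context {ctx:>7,} tokens -> {size / GIB:5.2f} GiB per sequence")
```

For that shape the cache costs 128 KiB per token, so a single sequence needs 0.5 GiB at 4K context, 4 GiB at 32K and 16 GiB at 128K, and the `batch` factor multiplies that linearly for concurrent users. Quantizing the cache (e.g. to 8-bit) shrinks `bytes_per_elem` accordingly.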
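Why memory bandwidth is “the real limiter”: at batch size 1, generating each token of a dense model streams every weight through the memory bus once, so bandwidth divided by model size is a hard ceiling on tokens per second. This sketch assumes a dense (non-MoE) model, treats a 4-bit quant as exactly 4 bits per weight (real quant formats carry some extra scale metadata), ignores KV-cache reads and compute, and uses round, hypothetical bandwidth figures rather than any specific product's spec sheet.

```python
# Bandwidth ceiling for batch-1 decoding of a dense model (back-of-envelope sketch).


def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return n_params * bits_per_weight / 8


def decode_ceiling_tok_s(bandwidth_gb_s: float, n_params: float,
                         bits_per_weight: float) -> float:
    """Upper bound on tokens/s: every generated token reads all weights once.

    KV-cache traffic, kernel overhead and compute limits are ignored,
    so real engines land below this number.
    """
    return bandwidth_gb_s * 1e9 / weight_bytes(n_params, bits_per_weight)


# Hypothetical 70B dense model at 4 bits per weight.
params, bits = 70e9, 4
print(f"weights: {weight_bytes(params, bits) / 1e9:.0f} GB")

# Hypothetical round bandwidth numbers (GB/s), for comparison only.
for label, bw in [("fast discrete GPU", 1000), ("unified-memory machine", 400)]:
    print(f"{label:>22}: <= {decode_ceiling_tok_s(bw, params, bits):.1f} tok/s")
```

With these assumed numbers the ceilings are about 28.6 tok/s versus 11.4 tok/s. The same arithmetic shows the trade-off in the MLX bullet: 35 GB of weights will not fit on a single 24 GB card, but it can fit in a large unified-memory pool, just under a lower bandwidth ceiling.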
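The cheat sheet is effectively a lookup table from hardware strategy to engine. A trivial Python encoding, where the dictionary keys are hypothetical labels invented for this sketch (the post uses free-form descriptions), could look like:

```python
# Rules of thumb from the cheat sheet as a lookup table.
# The keys are hypothetical labels; only the engine names come from the post.
RULES_OF_THUMB = {
    "laptop_edge_or_weird_hw": "llama.cpp",
    "mac_workflow": "MLX",
    "one_to_four_rtx_gpus": "ExLlamaV2/V3",
    "general_serving": "vLLM",
    "complex_infra_long_context_moe": "SGLang",
    "nvidia_max_performance": "TensorRT-LLM",
}


def pick_engine(strategy: str) -> str:
    """Return the engine suggested for a hardware strategy, or raise ValueError."""
    try:
        return RULES_OF_THUMB[strategy]
    except KeyError:
        raise ValueError(
            f"unknown strategy {strategy!r}; expected one of {sorted(RULES_OF_THUMB)}"
        ) from None


print(pick_engine("mac_workflow"))
```

Keeping it as data rather than nested `if`s mirrors the post's framing: the hardware strategy is the input, and the engine is just what falls out of it.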

JUST IN: 🇬🇧 UK to host military planning talks with 40+ countries to secure safe passage through the Strait of Hormuz without the US, after the war ends.

My beautiful village Naqoura, destroyed by Israeli occupation forces.

🇮🇷🇴🇲 Iran is building a permanent toll system for Hormuz and deciding who pays and who doesn't... Tehran reportedly plans to jointly administer the Strait with Oman, charging $2 million per vessel. Oman gets a seat at the table as a reward for its mediation efforts and geographic position along the waterway. But the real story is in the exceptions. China sails through free. Pakistan gets 20 tankers. Iraq was declared a "brotherly country." And days ago, an Egyptian vessel carrying food reportedly passed without paying a cent, a political gesture thanking Cairo for its role in mediation. Iran is rebuilding the strait as a loyalty program. Friends transit freely. Mediators get rewarded. Enemies pay or don't pass at all. The parliament already codified this into law. The IRGC has the enforcement capability. And Oman's involvement gives it a veneer of international legitimacy. The U.S. went to war partly to prevent Iran from ever having this kind of leverage over global energy. Six weeks later, Iran has more control over Hormuz than at any point in its history, and it's institutionalizing that control while the bombs are still falling. Source: NYT Medial: @A_M_R_M1

This one got the bounce!!! We love experimenting with different ways of mixing styles in a way that feels natural and subtle. This one feels just right. In the studio we talk about fusing styles without it sounding like “forced fusion” Never force the funk! Execution is equally