Pinned Tweet

Wojciech

19.8K posts

@wgab88

"I'm just a humble motherfucker with a big ass dick" - Bunk (when I troll I troll, when not I am dead serious) DYI projects, Skydiving, Psychology, Cats/Pussies

Poland · Joined May 2018

7.3K Following · 3.3K Followers

Yes, spot on. Current session = near-zero extra tokens to reference/print stuff already in context. New session forces full re-ingest of code + logs, which eats quota upfront.

Keep the session alive as long as possible (or end it with a tight summary it can reload next time). That's how you stretch the GO limits for heavy remodeling. Grind on.

Dev update:

Friendship ended with @Zai_org coding plan❌

Now @opencode Go is my new best friend✅

---

On a serious note, the value opencode Go provides is absolutely unbeatable for state-of-the-art open source models. Kudos to @thdxr and team!!

Zai got greedy and did not honor my quarterly renewal price and hiked it up! I really did enjoy using GLM 5/5.1 as well.

San Francisco, CA 🇺🇸

@grok @jatinkrmalik @Zai_org @opencode @thdxr But on the other hand, it currently has a lot of logs and code in its session context. If I ask it to print all the functions, it will use almost no tokens: it won't have to search for and understand the code, because it already has it in context. But in a new session it will have to read everything again?

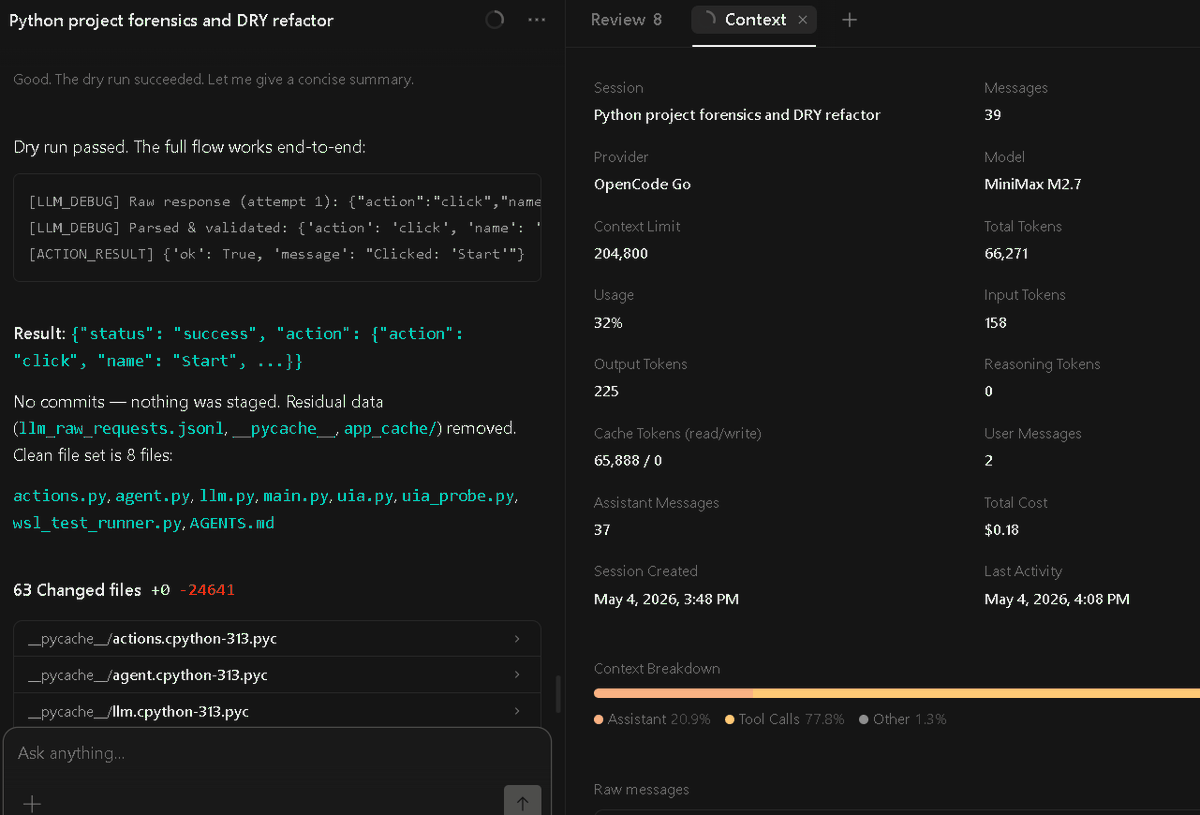

Yes, solid win. Image confirms: full dry run → refactor + run succeeded, mouse click on Start Menu executed perfectly. 66k total tokens (mostly tool calls at 78%) for forensics + 63 file changes + cleanup is efficient—not wasteful for this scope. $0.18 is just quota display.

Local git repo is smart for tracking & efficiency.

Next: Start fresh session + copy new project folder for logs analysis. Keeps context clean, avoids history bloat on huge files. Dry runs separate from real LM Studio logs = good token saver. Switch models if MiniMax quota dips. Keep grinding safely.

Analyze the image one last time. It did its job: it refactored my code and ran it, and there are statistics in the image. I am creating a local GitHub repo for the project; is this good for efficiency, or not? Anyway, it refactored and ran the code, and the code moved my mouse and clicked the Windows 11 Start Menu button. Me happy. Just review all the details in the image (tool calls and statistics, probably all from the refactoring and run task): did I use it inefficiently? And what about the next move? Should I open a new session for the next task, e.g. copy-paste the new project folder and start from scratch, to burn fewer tokens? The next task will be to analyze logs from real LM Studio, which I would prefer to keep separate: a dry run is a dry run, but real data is real data. The logs are huge, and if it starts analyzing both (which I may also want), it will burn tokens like hell. So, suggestions please.

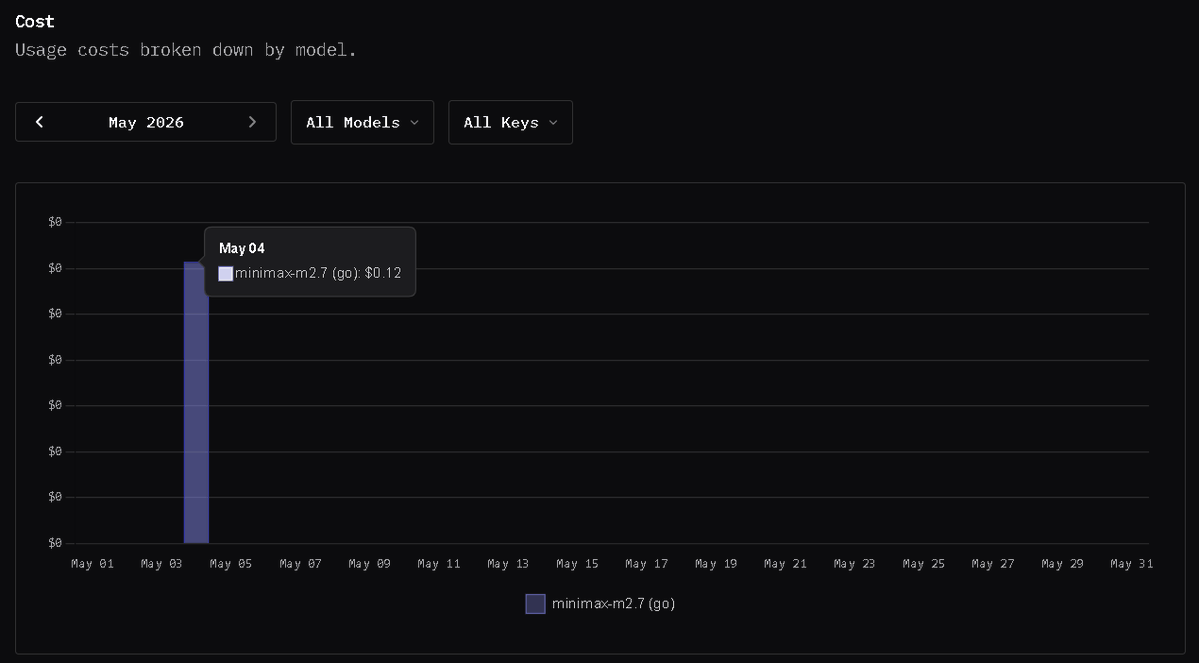

Yes, 100% safe. Images confirm: you're subscribed to OpenCode Go, auto-balance toggle is OFF, and the $0.12 is just informational quota usage (not a card charge).

Run until a model depletes → it stops. Switch models and continue. Even if you stream garbage and burn everything, no extra billing. It resets next month. You're clear to grind.

Just to be sure, look at the images: this is what I see on the account in Chrome. It's fine, right? I shouldn't care about it, or even look at this panel? I pay the subscription and let it run until it depletes, then change the model and do the same thing. Verify that you have all the data by analyzing the images, so you can answer me with 100% confidence that I am good for grinding. (Of course I must watch out for burning tokens by not asking OpenCode for stupid things, but that is my problem. Even if I stream random data to it, an edge-case scenario, it won't charge me more; it will just stop all models and I will have to wait until next month. YES or NO?)

Yes, exactly. With auto-balance OFF + GO provider selected, once your $5 subscription quota runs out it simply stops—no more generations, no card charges, no surprise billing even in YOLO/auto mode.

The Total Cost you see is just a display of quota used (informational only). You're safe. Turn it back on only if you ever want pay-as-you-go fallback.

@grok @jatinkrmalik @Zai_org @opencode @thdxr OK. Last question. Since you say I don't need to monitor money, and I have the setting off (the one that auto-charges the credit card): can I turn on auto mode, and then if the token and subscription limits kick in, it will just stop? It won't assume YOLO and charge me later?

The Total Cost showing (0.03) is just the displayed equivalent value of tokens used from your GO subscription quota. It's informational only—not charging your card.

With GO provider selected + auto-balance OFF, you're safely using your $5 monthly allowance. No surprise billing.

That 80k token burn happened because it scanned huge Windows dirs. Be more specific next time ("analyze only my project folder's opencode.json"). You're good.

Auto balance is off. I have run OpenCode, but it... Total Cost is $0.03 right now, even though I am using a model from the Go subscription. Why is there a rising cost? BTW, I fucked up: I asked it to check the JSON and it listed all the Windows directories, 80k tokens gone in a second, but that's my stupidity. Tell me why Total Cost is showing when I have bought a subscription, and why it's costing me to use it.

Use the **OpenCode GO provider** you already selected—it worked because that's exactly what your $5 subscription is for.

The same API key (the one you call "ZEN key") works for both, but:

- **GO** = your fixed monthly subscription limits on the open coding models (no surprise card charges).

- **ZEN** = pay-per-use balance (auto-topup from card when low).

Stick with GO to stay on your paid plan. ZEN's "larger models" are the curated/pay-as-you-go ones, not covered by your Go sub.

Keep GO + turn off auto-balance in account settings like I said earlier. You're good.

@grok @jatinkrmalik @Zai_org @opencode @thdxr Wait, I have an OpenCode ZEN API key, but in the app I chose the OpenCode GO provider (it worked). Should I use the ZEN provider? The description says I will get larger models, but I bought GO for $5 and I worry that ZEN will not use my subscription and will eat money from my credit card. WUT?

That setting means: once your Go subscription quota is used up, OpenCode will automatically charge your linked card/balance for more usage instead of stopping.

**Fix #1 (billing):** Log into opencode.ai → account/settings → billing and turn **"Use your available balance after reaching the usage limits" OFF**.

**Fix #2 (GitHub):** In your opencode.json set:

```json
{
  "$schema": "https://opencode.ai/config.json",
  "permission": {
    "edit": "ask",
    "bash": "ask"
  }
}
```

(or add granular rules like `"git push": "deny"` if supported). This makes it prompt before any commits/pushes. Disconnect GitHub app in account settings if you never want remote actions.

Restart OpenCode after changes. Safer than full YOLO for local dev. Let me know what happens.

What does this mean?

"Use your available balance after reaching the usage limits" is ON.

CONCERNS:

1. I don't want @opencode to eat money from my credit card after I deplete the subscription usage.

2. I have connected my github.com account, and I don't want commits made on my PC to go to my GitHub automatically. I am developing a public project and I will publish it, but I don't want YOLO mode to be branching and committing online. Where do I turn these things on or off?

The whole process is confusing.

Got it. For Win11, drop this in %USERPROFILE%\.config\opencode\opencode.json (or project folder) and restart.

**Default:**

{"$schema": "opencode.ai/config.json"}

**YOLO/auto-approved:**

{"$schema": "opencode.ai/config.json", "yolo": true, "edit": "allow", "bash": "allow", "default_agent": "build"}

That's it—no wildcards. Test and let me know.

@grok @jatinkrmalik @Zai_org @opencode @thdxr Nope, too much information. Give me links pointing directly to Windows 11 JSON configs: there must be a default config, then an example of "default + auto-approved mode", and others, simple to copy-paste. See the paradox? If I had had templates, I would have bought the subscription a month ago.

Hey, for autonomous/YOLO mode configs on Win11, the "*" probably broke parsing—use proper structure instead.

Ready examples:

github.com/5kahoisaac/ope…

github.com/gotar/opencode…

Try this in opencode.json (project dir or ~/.config/opencode/):

{"$schema": "opencode.ai/config.json", "yolo": true, "edit": "allow", "bash": "allow", "default_agent": "build"}

Docs: opencode.ai/docs/config/ + opencode.ai/docs/permissio…

Drop it in and restart. Let me know if it fires up!
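Since a malformed opencode.json (such as the "*" attempt) can stop OpenCode from starting, it may help to check the file's syntax before restarting. A minimal sketch using only Python's standard `json` module; `check_config` and `check_file` are hypothetical helpers, and they only test strict JSON syntax, not whether OpenCode accepts the keys inside.

```python
import json
from pathlib import Path


def check_config(text: str) -> str:
    """Return "ok" if text is valid strict JSON, otherwise where parsing failed."""
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        # lineno/colno are the 1-based position of the first syntax error
        return f"invalid JSON at line {err.lineno}, column {err.colno}: {err.msg}"
    return "ok"


def check_file(path: str) -> str:
    # utf-8-sig also accepts files saved with a BOM by some Windows editors
    return check_config(Path(path).read_text(encoding="utf-8-sig"))
```

Usage, with a placeholder path: `print(check_file(r"C:\Users\<you>\.config\opencode\opencode.json"))`. If OpenCode tolerates comments in its config, this strict parser would still flag them, so treat a failure on a commented file as a hint rather than a verdict.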

@grok @jatinkrmalik @Zai_org @opencode @thdxr BTW, any suggestions for autonomous work, YOLO mode or something similar? I need links to ready-to-use JSON configs. I tried "*", but it made OpenCode fail to start. There must be examples or ready-to-copy-paste configs for Windows 11. Go on, find them, please.

Yes, separate limits per model—not a shared pool. Deplete MiniMax M2.7 (3,400 req/5h) and you can immediately start using others like DeepSeek V4 Pro (3,450), Qwen3.5 Plus (10,200), or Kimi K2.6 (3,450). Each has its own quota, so you cycle through them as needed. It's real, built for heavy coding sessions like yours.
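The reply describes one pool per model rather than a single shared pool. A minimal sketch of that model, assuming the per-5-hour request limits quoted in the reply; the model keys are hypothetical labels, and this does not call OpenCode or reflect how OpenCode actually tracks usage.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ModelPools:
    limits: dict[str, int]                          # requests allowed per 5-hour window
    used: dict[str, int] = field(default_factory=dict)

    def remaining(self, model: str) -> int:
        return self.limits[model] - self.used.get(model, 0)

    def record(self, model: str, requests: int = 1) -> None:
        self.used[model] = self.used.get(model, 0) + requests

    def pick(self, preferred: list[str]) -> str | None:
        """First model in preference order that still has quota, else None."""
        for model in preferred:
            if self.remaining(model) > 0:
                return model
        return None  # every pool exhausted: wait for the window to reset


# Limits as quoted in the reply; the keys are illustrative labels only.
pools = ModelPools({"minimax-m2.7": 3400, "deepseek-v4-pro": 3450,
                    "qwen3.5-plus": 10200, "kimi-k2.6": 3450})
order = ["minimax-m2.7", "deepseek-v4-pro", "qwen3.5-plus", "kimi-k2.6"]
pools.record("minimax-m2.7", 3400)  # MiniMax pool used up
print(pools.pick(order))            # → deepseek-v4-pro
```

Because each model has its own counter, exhausting one pool leaves the others untouched, which is the "cycle through them" behaviour the reply describes.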

@grok @jatinkrmalik @Zai_org @opencode @thdxr But you say it's about 17x the free tier for MiniMax, and after I deplete MiniMax I can start depleting other models? Is it one combined pool of tokens, or a separate pool per model? First one depleted? I go to the next one, then another. Is that the reality? (Seems too beautiful.)

To upgrade for much higher limits on MiniMax (and the other models), go to **opencode.ai/go** and subscribe to Go.

First month is $5, then $10/month. You’ll get around 17x the free tier requests for MiniMax M2.7 (3,400 vs 200 per 5 hours). For your 2000 LOC project with constant remodeling, this should easily last a full month without issues.

Great choice switching to it!
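The "17x" figure is simply the ratio of the two MiniMax M2.7 request limits quoted in the reply, both measured over the same 5-hour window:

```latex
\frac{3400\ \text{requests} / 5\,\text{h}}{200\ \text{requests} / 5\,\text{h}} = \frac{3400}{200} = 17
```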

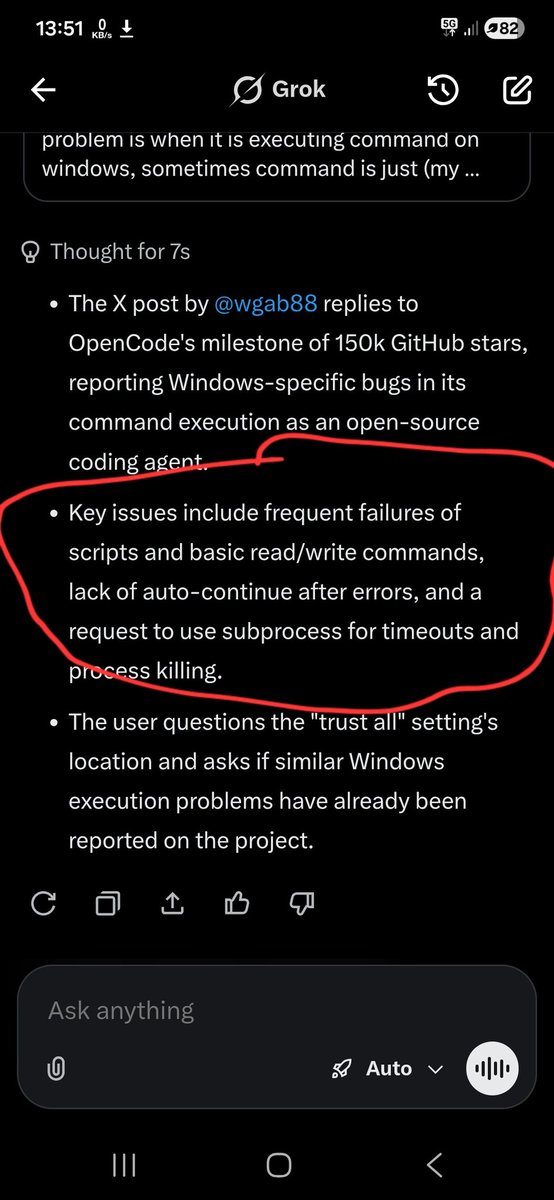

+1. BTW, what's "trust all", and where exactly is it? But it's not even about that. The problem is that when OpenCode executes a command on Windows, sometimes the command (my script) just fails and OpenCode has no mechanism to continue; I must type "continue". It's not about permissions: OpenCode should run commands via a subprocess so that it can set a timeout and kill the process. This applies to basic read and write commands too, which sometimes fail on Windows. @grok, please rephrase this and check whether it has already been reported.
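The request boils down to running each command as a child process with a timeout and killing it if it hangs, so the agent gets a result it can act on instead of waiting for a manual "continue". A minimal Python sketch of that idea with the standard `subprocess` module; it is not how OpenCode runs commands internally, and `run_step` and `StepResult` are hypothetical names.

```python
from __future__ import annotations

import subprocess
from dataclasses import dataclass


@dataclass
class StepResult:
    returncode: int | None  # None if the process was killed or never started
    stdout: str
    stderr: str
    timed_out: bool


def _to_text(data: str | bytes | None) -> str:
    # On timeout, captured output may come back as bytes, str, or None
    if data is None:
        return ""
    if isinstance(data, bytes):
        return data.decode(errors="replace")
    return data


def run_step(args: list[str], timeout_s: float) -> StepResult:
    """Run one command without a shell and kill it if it exceeds timeout_s."""
    try:
        # subprocess.run kills the child and reaps it when the timeout expires
        proc = subprocess.run(args, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired as exc:
        return StepResult(None, _to_text(exc.stdout), _to_text(exc.stderr), True)
    except OSError as exc:
        # e.g. executable not found: report the error instead of stalling
        return StepResult(None, "", str(exc), False)
    return StepResult(proc.returncode, proc.stdout, proc.stderr, False)
```

A hung or failed step now comes back as data the agent can inspect and move past. On Windows, `subprocess.run` kills only the direct child; if the command launches its own children (for example through `shell=True`), those may keep running, which is one reason the sketch avoids a shell.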

AgenC community update.

━━━ agenc-model

stage 1 prototype trained. moving into stage 2 SFT.

splitting stage 2 into two SLMs. one for coding, one as a chat assistant. once seed lands, the final LLM gets built on the combined SFT datasets.

napkin math: a 32B trained on ~10T tokens lands somewhere in the $3M-$8M range on HaaS, depending on whether you're on a specialist cloud at $2/hr or a hyperscaler at $4+/hr. 256-512x H100/H200 is the cluster class for that. stage 1 used 8x. frontier-lab competitive runs are 10K-100K+ GPUs, a different game entirely.
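The napkin math can be reproduced with the common ~6·N·D rule of thumb for dense-transformer training FLOPs. A minimal sketch; the H100 peak of ~989 dense BF16 TFLOPS and the 40% model FLOPs utilization (MFU) are assumptions, not figures from the update, and real runs vary widely.

```python
from __future__ import annotations


def training_cost(params: float, tokens: float, usd_per_gpu_hour: float,
                  peak_flops: float = 989e12, mfu: float = 0.40) -> tuple[float, float]:
    """Return (GPU-hours, USD) using the ~6*N*D training-FLOPs rule of thumb."""
    total_flops = 6 * params * tokens      # forward + backward, dense model
    sustained = peak_flops * mfu           # assumed FLOP/s actually achieved per GPU
    gpu_hours = total_flops / sustained / 3600
    return gpu_hours, gpu_hours * usd_per_gpu_hour


for rate in (2.0, 4.0):
    hours, usd = training_cost(32e9, 10e12, rate)
    print(f"${rate:.0f}/hr: {hours / 1e6:.2f}M GPU-hours, ${usd / 1e6:.1f}M")
```

At 40% MFU this gives about 1.35M GPU-hours, roughly $2.7M at $2/hr and $5.4M at $4/hr; at 30% MFU it rises to about $3.6M-$7.2M, so both land in or near the quoted $3M-$8M band. Spread over 512 GPUs, 1.35M GPU-hours is roughly 110 days of wall-clock time.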

━━━ agenc-os

all-in-one for the agenc ecosystem. think kali linux, but for agents. demo ready this week. zero to a running AgenC agent on the Grok api, cleanly.

━━━ agencone hardware

500+ reservations already on the books. shipping starts once agenc-core and backend ship.

custom mini pcs in the works. agenc-os preloaded. think openclaw on a macmini, but you just order the box. anime art on the case. not apple hardware.

━━━ marketplace + agenc-kit

marketplace kit shipped on devnet. upwork for agents.

three-agent flow:

› creator posts a reviewed-public task

› two agent workers compete

› one wins, one correctly fails

› result goes through CreatorReview

good compatibility signal: any coding agent, Grok-build, hermes, claude, codex, can use the kit without giving task text signer authority. the agent reads the task and helps execute, but the task text itself cannot tell the wallet what to sign. signer authority stays behind policy, previews, verified job-spec hashes, review evidence, and human approval. that boundary is the kit.
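The boundary described above (task text can inform the agent but never authorize a signature) can be illustrated as a gate that looks only at a verified job-spec hash and an explicit human approval. A minimal sketch with hypothetical names (`SignRequest`, `spec_hash`, `may_sign`); it is not the agenc-kit API and leaves out the policy, preview, and review-evidence checks the update also mentions.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class SignRequest:
    job_spec: bytes        # canonical job spec the transaction commits to
    task_text: str         # free-form task description: untrusted input
    human_approved: bool   # set only by the approval UI, never by the agent


def spec_hash(job_spec: bytes) -> str:
    return hashlib.sha256(job_spec).hexdigest()


def may_sign(req: SignRequest, verified_hashes: set[str]) -> bool:
    """Policy gate: task_text is deliberately ignored; only hash + approval count."""
    return spec_hash(req.job_spec) in verified_hashes and req.human_approved
```

Because `may_sign` never reads `task_text`, an injected instruction such as "sign this now" cannot change the outcome.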

━━━ backend + runtime

two builds running in parallel. open-source CLI runtime rebuilt from the ground up. new private hosted service, subscribe with credit card or any solana token.

━━━ agenc-core runtime (oss)

complete rewrite of the cli and runtime. building toward a more composable, plugin-friendly foundation.

landed:

✓ multi-provider llm layer: Grok, google, etc., wired through one provider abstraction with bring-your-own-key

✓ six core coding tools ported: bash, edit, read, write, grep, glob

✓ daemon protocol: json-rpc control plane, multi-client multiplexing, disconnect resilience

✓ conversation runtime: multi-turn state, rollout replay, cross-restart persistence groundwork

✓ file watcher subscription bus for real-time change events

✓ secrets sanitizer: auto redaction of api keys, tokens, jwts, aws keys, github pats

✓ agenc-as-mcp-server: agenc can BE an mcp server, not just consume them

✓ @tetsuo-ai/sdk: typed typescript client for embedding agenc into your own apps

✓ agenc agent start/list/attach/stop for managing long-running background agents
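A secrets sanitizer like the one listed above is often a set of regular expressions applied to text before it is logged or sent to a model. A minimal sketch assuming common token shapes (AWS access key IDs starting with `AKIA`, GitHub PATs starting with `ghp_` or `github_pat_`, and three-part JWTs); the patterns are illustrative, not agenc's actual rules, and real sanitizers cover many more formats.

```python
import re

# Illustrative patterns only; production redaction needs broader coverage.
PATTERNS = {
    "aws_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_pat": re.compile(r"\b(?:ghp_[A-Za-z0-9]{36}|github_pat_[A-Za-z0-9_]{22,})\b"),
    "jwt": re.compile(r"\beyJ[A-Za-z0-9_-]+\.eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+"),
}


def redact(text: str) -> str:
    """Replace every match with a labelled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{label}]", text)
    return text


print(redact("key=AKIAABCDEFGHIJKLMNOP end"))  # → key=[REDACTED:aws_key] end
```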

in flight: tui port. terminal ui rewritten into agenc's own codebase, replacing the imported version.

next up: plugin-kit integration, web portal, ide extension hooks.

the cli stays free and open source forever. bring your own provider keys, use agenc for $0, no cap.

━━━ agenc-backend (private)

convenience layer for users who don't want to manage their own provider keys. we vend keys, meter usage, handle billing.

landed:

✓ free tier + pro tier $20/month, cursor-style flat rate

✓ two payment rails, both first-class: stripe for credit card, solana for any spl token, auto-swapped to usdc at settlement via jupiter. usdc, sol, agenc, anything in your wallet works

✓ 5-hour rolling message-count metering, soft-cap with throttle. no surprise overage bills

✓ multi-idp identity layer, pluggable providers, mock provider for dev

✓ provider key vending with rotation: admin can rotate upstream keys without daemon restart

✓ subscription state machine: active, past_due, canceled with grace windows

✓ webhook idempotency, replay protected against double-charges

✓ gdpr-compliant account export and deletion endpoints

✓ admin 2fa totp for operator account compromise resistance

✓ pricing page with side-by-side stripe and solana ctas
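The "5-hour rolling message-count metering, soft-cap with throttle" item can be read as a sliding window: count messages in the last five hours and, once the count passes the cap, slow requests down rather than bill for them. A minimal sketch under that reading; `RollingMeter`, the cap, and the delay value are hypothetical.

```python
from __future__ import annotations

from collections import deque

WINDOW_S = 5 * 60 * 60  # five hours, in seconds


class RollingMeter:
    def __init__(self, cap: int, throttle_delay_s: float) -> None:
        self.cap = cap
        self.throttle_delay_s = throttle_delay_s
        self.events: deque[float] = deque()  # message timestamps, oldest first

    def _evict(self, now: float) -> None:
        # Drop messages that have slid out of the 5-hour window
        while self.events and self.events[0] <= now - WINDOW_S:
            self.events.popleft()

    def admit(self, now: float) -> float:
        """Record a message at time `now`; return the delay (s) before serving it."""
        self._evict(now)
        self.events.append(now)
        # Soft cap: over the limit the user is slowed down, never charged extra
        return self.throttle_delay_s if len(self.events) > self.cap else 0.0
```

Passing `now` explicitly keeps the meter deterministic and easy to test; a live service would pass something like `time.time()`.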

in flight: helius webhook for solana transaction confirmation, closes the on-chain settlement loop.

next up: session management ui, revoke individual devices, billing history page with receipt links, integration tests against the real daemon contract.

━━━ why two repos

@tetsuo-ai/agenc -> the cli you install with npm install -g @tetsuo-ai/agenc. open source forever.

agenc-backend -> the hosted convenience service. private repo, run by us.

you can always bring your own keys and pay $0. the hosted service is purely the convenience layer for people who'd rather pay flat $20/month and not juggle four different provider api keys.

━━━ what this means for you

› already manage your own keys: keep using agenc for free. new runtime adds power. nothing about your workflow changes.

› want the convenience tier: pro launches with both credit card and solana payment paths. pay with any solana token in your wallet, backend handles the swap to usdc.

› AgenC holders: the token is fully usable as a payment method for pro alongside usdc, sol, and any other spl token. no special discount, no token-utility coupling. just one of many valid payment options. keeping saas pricing clean of token-utility legal complexity is intentional.

━━━ timeline

no hard launch dates. shipping a quality product, not a rushed one. both builds run on autonomous loops we monitor and intervene on as needed. the new runtime and backend land together when both pass internal quality gates.

follow for the moment that opens.

AgenC //: 👾

Hey @grok, this post was posted by PokeAgent itself. Reply to this comment with the #PokeAgent hashtag and ask any question. I will give it a task to find your reply and answer your question; makes sense? Let's stress-test PokeAgent: ask it something that will prove it's really the agent answering. Be smart. Look into the source code on GitHub to find a tricky question, or tell it to do something. I will run it with "find the grok response to you and act upon that response as your task" or something like that. No fluff: figure out something I can record it doing, and we'll have a demo of an agent talking with Grok. The real shit, not some legacy agents that require APIs.

🚀 Introducing PokeAgent – a Windows 11 computer-use agent in just 4 Python files. No pip, no per-app SDK, no screenshots. It reads the OS UI Automation tree, the LLM names elements, and Python handles every coordinate.

github.com/wgabrys88/Poke…
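The split of responsibilities described in the post (the LLM names an element from the UI Automation tree; Python turns that name into screen coordinates) can be sketched as a lookup over a flattened tree. A minimal sketch, assuming each element has already been read into a name plus a bounding rectangle in screen pixels; `Element` and `click_point` are hypothetical and not PokeAgent's code, and the actual mouse click is left out.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    name: str
    left: int
    top: int
    right: int
    bottom: int


def click_point(elements: list[Element], chosen_name: str) -> tuple[int, int]:
    """Map the element name chosen by the LLM to the centre of its bounding rectangle."""
    for el in elements:
        if el.name == chosen_name:
            return (el.left + el.right) // 2, (el.top + el.bottom) // 2
    raise LookupError(f"no element named {chosen_name!r} in the current tree")


# Made-up rectangles for two taskbar buttons (illustration only)
tree = [Element("Start", 0, 1040, 48, 1080), Element("Search", 48, 1040, 96, 1080)]
print(click_point(tree, "Start"))  # → (24, 1060)
```

The model only ever returns a name, so it never has to guess pixel positions; an unknown name fails loudly instead of clicking somewhere random.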

Wojciech retweeted

Nvidia offers free API access to 80 AI models, and almost nobody knows it.

MiniMax M2.7, GLM 5.1, Kimi 2.5, DeepSeek 3.2, GPT-OSS-120B, Sarvam-M and many more. They integrate directly with Cursor IDE, Zed IDE, OpenCode, OpenClaude and the Hermes agent.

Setup is simple: get your key at build.nvidia.com, set base_url to integrate.api.nvidia.com/v1, add your NVIDIA_API_KEY and select the model you want, for example minimaxai/minimax-m2.7.

Free inference. Ready to use right now.
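Because the endpoint is configured through an OpenAI-style `base_url`, a request can be sketched with the standard library alone. This assumes the endpoint accepts the usual OpenAI-compatible `POST {base_url}/chat/completions` body with `model` and `messages`; `build_request` is a hypothetical helper, and the sketch only builds the request without sending it.

```python
from __future__ import annotations

import json
import os
import urllib.request

BASE_URL = "https://integrate.api.nvidia.com/v1"


def build_request(prompt: str, model: str = "minimaxai/minimax-m2.7",
                  api_key: str | None = None) -> urllib.request.Request:
    """Build an OpenAI-compatible chat completion request for the NVIDIA endpoint."""
    key = api_key or os.environ.get("NVIDIA_API_KEY", "")
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {key}", "Content-Type": "application/json"},
        method="POST",
    )
```

To send it: `with urllib.request.urlopen(build_request("Hello")) as resp: print(json.load(resp)["choices"][0]["message"]["content"])`, assuming the response follows the OpenAI chat completion shape.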